NICHOLAS JORDAN AND JENNIFER MAPP

JUNE 14, 2023 COMMENTARY

If logistics wins or loses wars, what wins or loses logistics? U.S. military doctrine has the answer: “The U.S. military supply chain (to include the defense industrial base) represents a major competitive advantage that underpins deterrence and allows the United States to project power.” Although this principle is established in doctrine, it took a global pandemic for the Department of Defense to take notice of the fragility of its supply chains and the full impact of China’s global economic expansion. However, acknowledging a vulnerability, understanding it, and doing something about it do not necessarily go together. While awareness in the Department of Defense is rising, the lack of visibility into defense supply chains makes them easy targets for adversaries seeking to insert undetected risks that sit silently until the adversary chooses to exploit them. It is difficult to fix what you can’t see. It is time for the Department of Defense to take bold steps to gain full visibility into defense supply chains to help mitigate the risk of acquiring U.S. equipment from foreign adversaries or shoddy suppliers.

Supply chains underpin the global market. Every product has a network of interconnected companies that must come together at the right time and place to deliver a timely product. The more complex the product, the more nodes the network has. Each node has its own material, logistics, personnel, processes and stakeholder challenges. A disruption in one node will sweep quickly throughout the entire system and upend the supply chain.

The military supply chain is a complex system comprised of a network of suppliers, expanding beyond the known large defense contractors out to thousands of low-tier suppliers. Each tier of the network is critical to the success of the tiers above and below it. Within these networks, there are nexus suppliers critical to the success of the entire system. We argue that it is not too hard and not too expensive to gain full visibility over all of these supply chain nodes. To do so, the Department of Defense should capitalize on proven commercial supply chain software suites, work with Congress to set visibility mandates and reimagine its approach to supply chain management in the 21st century.

The Department of Defense’s Supply Chain Crisis

The Department of Defense has an incentive to map and fully understand its supply chain — and the suppliers that support it. It is currently facing two issues. The first, and most critical, is that the department does not know who provides parts for suppliers below a certain supplier threshold. An example from the private sector is illustrative of the supply chain and its nodes. The Center for Advanced Purchasing Studies mapped the American Honda Motor Company’s supply network. The supply chain included 10,832 suppliers: 245 first tier, 1,643 second tier, 4,605 third tier and 4,330 fourth tier. For Honda, over 97 percent of its suppliers were below tier one. This is the level of supplier visibility the Department of Defense admitted it was unable to attain in a 2022 report to the president.
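The tier arithmetic in the Honda example can be checked with a short sketch. It uses only the per-tier counts quoted above and computes the total from them, so the result does not depend on the quoted overall figure. The function name `share_below_tier` is an illustrative assumption, not part of the cited study.

```python
# Per-tier supplier counts as quoted in the Honda example above.
tier_counts = {1: 245, 2: 1_643, 3: 4_605, 4: 4_330}


def share_below_tier(counts, tier=1):
    """Return the fraction of suppliers that sit below the given tier.

    The total is derived from the per-tier counts themselves.
    """
    total = sum(counts.values())
    below = sum(n for t, n in counts.items() if t > tier)
    return below / total


if __name__ == "__main__":
    total = sum(tier_counts.values())
    for t in sorted(tier_counts):
        print(f"tier {t}: {tier_counts[t] / total:.1%} of mapped suppliers")
    print(f"below tier one: {share_below_tier(tier_counts):.1%}")
```

Run against a mapped defense program, the same calculation would show how much of the supplier base lies beyond the tiers the department can currently see.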

The second challenge is that the suppliers the Department of Defense depends on may be clustered together in similar geographic regions. This makes them vulnerable to “kill shots,” a metaphor used for military targets that, if attacked, can render an adversary crippled and unable to respond. The Living Supply Chain, a 2017 book on supply chain management written by Robert Handfield and Tom Linton, offers an illustration. Elementum mapped a client’s revenue streams to supplier locations, determining that 86 percent of the company’s revenues were tied to suppliers in one small geographic area. A single major disaster could ultimately impact 86 percent of the company’s revenues. Imagine if a similar geographic risk resides within the U.S. defense supply chain and how the clustering of a vital industry makes the United States vulnerable to attack.

The 2022 National Defense Strategy directs the Department of Defense to “fortify the defense industrial base, logistical systems, and relevant global supply chains” in response to the observed rise in supply chain impacts. For example, COVID-19-related supply chain disruptions caused delays in 48 major defense acquisition programs. Of those, 22 programs continue to experience delays, suggesting that things have not improved even as COVID-19-related concerns have subsided.

The U.S. Air Force’s acquisition chief has said: “Ongoing supply chain issues affecting subcontractors [sic] were largely responsible for the more than year-and-a-half delay” to the KC-46’s remote vision system. And the U.S. Navy’s Columbia-class submarine is already struggling to meet current program demands, highlighting that the “supply chain is the number 1 risk.” Beyond delays, the lack of visibility into all elements of the supply chain prevents the Department of Defense from identifying disruptions early — and planning for them proactively.

The second, and ongoing, concern is about quality control. There is a real risk of inferior or counterfeit components being injected into the Department of Defense’s supply chain. In June 2020, 1st Lt. David Schmitz was killed in an F-16 accident. In a related federal civil lawsuit, it was revealed that the Air Force identified several transistors and microchips that failed. The lawsuit alleges some of these components were counterfeit. Separately, in 2022, the Department of Justice filed criminal charges against an individual for selling approximately $1 billion of Chinese-made counterfeit Cisco components to companies within the United States and an undisclosed list of government agencies, including the Department of Defense.

Supply Chain Risk Management: The Department of Defense’s History of Neglect

Unfortunately, the fragility of the Department of Defense’s supply chain is not a new issue. Over five years ago, a Government Accountability Office report highlighted that the information required to effectively manage supply chain risks within the defense sector was lacking. In response to this report, the Department of Defense “completed a detailed assessment of key sectors … and identified major risks … and included discussion of gaps or vulnerabilities and mitigation strategies.” However, the growing trend of supply chain disruptions suggests the Department of Defense’s mitigation steps were either never fully implemented or that they have failed to mitigate the problems.

The F-35’s supply chain is a good example. The United States is 100 percent import-reliant on 16 minerals deemed “critical” to national security. China accounts for “63 percent of the world’s rare earth mining, 85 percent of rare earth processing, and 92 percent of rare earth magnet production.” Yet, it still took the Department of Defense 12 years to recognize “every one of the more than 825 F-35[s] … delivered contains a component made with a Chinese alloy that is prohibited by both U.S. law and Pentagon regulations.”

To address this lack of visibility, President Biden issued an executive order directing the Department of Defense to “submit a report identifying risks in the supply chain [sic] and policy recommendations to address these risks.” In response, the department admitted it had limited visibility, stating it “[does] not track these vulnerabilities … As supply chains have become more global in scale, prime contractors have lost some visibility into the sub-tiers of their supply chains, especially below third-tier levels.” The Department of Defense is currently reviewing these impacts. If history repeats, the department might see measurable progress in about 10 years.

Supply Chain Risk Management: A Tale of Two Industries

Supply chain risk management is not unique to the defense sector and the need for speed, agility and resilience is just as critical to profit margins as it is to national security. While private industry has evolved, the Department of Defense continues to view it through a very logistics-focused lens. Decades ago, industry began consolidating responsibility for the end-to-end value stream, establishing senior managerial positions for procurement and supply officers. In contrast, the Department of Defense continues to silo its functions of procurement, logistics, and operations. Each functional area assesses its lens of supply chain risk (e.g., cyber, acquisition, logistics, etc.), but no one organization is considering the inherent collective risks to the entire defense industrial base.

Private industry has also embraced the importance of proactive supply chain risk management and integrated it as a critical part of operations. Handfield and Linton offer that global supply chains are living systems “subject to biological rules.” And rule number one is that firms survive by adapting to changes in the ecosystem by “embracing real-time data, velocity, transparency, and rapid response.” Certain firms utilize commercial software solutions using big data, AI and machine learning to map supply chains. Others have implemented flow-down requirements mandating the use of commercial software solutions that rely on data directly from suppliers to create verified supply chain maps. In both cases, many then employ additional software to conduct real-time monitoring of the mapped network using publicly available information. Everstream, Resilinc, Craft, BlueVoyant, Deloitte CentralSight and many more offer software solutions in this space. Becton Dickinson, one of the world’s largest medical technology firms, utilized Everstream to map a product line in three days with over 90 percent accuracy. Previous internal attempts took Becton Dickinson four years to accomplish. Using Resilinc’s software to build a validated supplier map, IBM received real-time supplier updates during the COVID-19 pandemic. This allowed IBM to proactively mitigate issues, resulting in zero missed product deliveries due to pandemic-related disruptions.

Despite the leaps made in the commercial sector, the defense sector has barely taken its first steps toward gaining real visibility into its supply chains. It has been over a year since the Department of Defense said it would apply analytical tools to identify vulnerabilities. To date, nothing of significant value has been fielded. The organic solutions being explored are not only costly but also directly conflict with the department’s own call to action to “act as a fast-follower” with regard to commercial technologies.

Recommendation and Conclusion

U.S. national security relies on the strength and resilience of its defense supply chains, and failing to understand their limits will be catastrophic in conflict with a near-peer adversary. The Russian invasion of Ukraine has already resulted in the United States struggling to meet munition needs for Ukrainian forces for a regionalized conflict. In the Pacific, the United States faces a $19-billion backlog of planned weapon transfers to the Taiwanese military, and deliveries of 66 new F-16V aircraft have been delayed due to supply chain disruptions. In Taiwan Strait conflict wargames, the Center for Strategic and International Studies determined that “the United States would likely run out of some munitions — such as long-range, precision-guided munitions — in less than one week.” The report also stated: “The U.S. defense industrial base is not adequately prepared for the international security environment that now exists.”

This stark performance and assessment of the U.S. industrial base should alarm policymakers in the Department of Defense and Congress. While there is growing awareness of this crisis within the Pentagon, it appears the Department is trending toward historical patterns of organizational change versus accountability. Dr. William LaPlante, the Department of Defense’s top procurement official, issued a memo in March 2023 establishing a Joint Production Accelerator Cell. It will be responsible for “building enduring industrial production capacity, resiliency, and surge capability for key defense weapon systems and supplies.” This initiative calls into question the broader effectiveness of the Department of Defense’s existing Industrial Base Policy office and should be a pause for strategic reflection. Why create a new organization when the Department of Defense has an established office already charged with sustaining “a robust, secure, and resilient industrial base”?

To drive more enduring impacts in fortifying the defense industrial base, the Department of Defense should first understand the scope of the problem it is trying to fix. We recommend the Department of Defense immediately execute an experimentation plan to test widely available commercial supply chain visibility solutions and select a common suite for the department. The Department of Defense should avoid replicating commercial applications, seeing that previous attempts by the department struggled to reach the field or later were scrapped after spending millions of dollars. Custom solutions also fail to leverage proven commercial applications already deployed at scale. Additionally, a common suite ensures the department is able to gain visibility across programs. Allowing each program to address supply chain visibility individually will answer program-specific questions, but it will not answer the broader question of the “kill shots” within the defense industrial base.

The Department of Defense should also work with Congress to implement visibility mandates for industry. This mandate should include a standard of visibility and government data access for all government contractors to give the Department of Defense the authority to hold industry accountable for supply chain risk management. This legislation already exists for rare earth elements for permanent magnets (10 U.S. Code § 857) and sensitive materials from non-allied foreign nations (10 U.S. Code § 4872). To imply the government is not able to gain visibility due to contract or cost constraints neglects to acknowledge the myriad standards the government already levies on industry. National security depends on resilient supply chains and visibility is the first step in fortifying them.

More broadly, the Pentagon should reconsider how it thinks about supply chain management. The majority of the focus on supply chain management and supply chain risk management is through the lens of logistics. This approach completely ignores what is happening in the supply chains as a system is in development or production. Too often, Department of Defense program offices relegate weapon system supply chain monitoring and oversight to logistics or to the contractor, often with little oversight or attention from program leadership until there is a problem. This is a dated approach. Supply chains impact almost every aspect of a system. The risk exposure to the defense ecosystem from horizontal integration and global diversification remains unknown.

Therefore, supply chain accountability must garner proactive attention from the earliest stages of weapon system development. For example, the full network of suppliers should be evaluated by the Department of Defense prior to codifying a weapon system design. The acquisition community must reimagine how “cost, schedule, performance” is defined. Rather than focus on obligation rates and updating baselines to bring programs back on schedule, program leaders must recognize the integrated impacts of the supply chain to all three areas if they are to remain relevant in the 21st century.

It is time for the Department of Defense to act swiftly. The CIA has said they are aware of Chinese intentions: General Secretary Xi Jinping told his army to “be ready by 2027” to invade Taiwan. One thing is certain today: Department of Defense supply chains lack the resiliency for a large-scale protracted conflict and they are not on track to be better positioned in 2027. The Department of Defense’s approach toward strengthening the defense industrial base and securing its supply chains is failing to move at the speed of relevance. As many Department of Defense leaders remain hyper-focused on exquisite technology, they should not forget that “none of this technical overmatch matters if America can’t build enough of it, sustain it, or get it to the fight in the first place.” Defense supply chains are under assault and the United States is losing. It is time for the Department of Defense to stop the studies and take immediate and real action to identify “kill shots” at risk for exploitation. Supply chain visibility across the defense industrial base is foundational for national security, and it requires a united implementation strategy.

Lt. Col. Nicholas Jordan has over 18 years of Air Force program management and acquisition experience. He is a student at the Eisenhower School of National Security and Resource Strategy at the National Defense University in Washington, D.C.

Lt. Col. Jennifer Mapp has over 22 years of Air Force contracting and acquisition experience. She is a national defense fellow at Georgetown University in Washington, D.C.

The views expressed in this article are those of the authors and do not reflect the official policy or position of the United States Air Force, National Defense University, Department of Defense, or U.S. government.

Image: Capt. Travis Mueller

Article link: https://warontherocks.com/2023/06/in-the-dark-how-the-pentagons-limited-supplier-visibility-risks-u-s-national-security/

By CHRIS HUGHES JUNE 4, 2021

The order calls for modernizing the cloud-security program and opens the door for other frameworks to be used for authorization.

The Biden administration’s recently released cybersecurity-focused executive order mentions a key cloud security program known as FedRAMP several times as it emphasizes the need for federal agencies to quickly but securely adopt cloud computing.

Section 3 of the executive order, titled “Modernizing Federal Government Cybersecurity,” states that within 60 days of the order, the General Services Administration in consultation with the director of the Office of Management and Budget and heads of other agencies shall begin modernizing the Federal Risk and Authorization Management Program. This includes “identifying relevant compliance frameworks, mapping those frameworks onto requirements in the FedRAMP authorization process, and allowing those frameworks to be used as a substitute for the relevant portion of the authorization process, as appropriate.”

FedRAMP validates the security of cloud products—infrastructure, platforms, software applications—being sold to federal agencies. If a product meets FedRAMP’s controls, it gets certified with a provisional authority to operate, or P-ATO.

But it’s no secret that FedRAMP—best intentions aside—has long served as a bottleneck to getting innovative cloud service offerings to federal system/mission owners and agencies. FedRAMP began in 2011, roughly a decade ago, and currently has about 225 authorized cloud service offerings listed on its marketplace. To put this in perspective, there are roughly 15,000 software-as-a-service companies in the market.

FedRAMP timelines vary depending on several factors—some related to the cloud service providers themselves, and others related to the FedRAMP Joint Authorization Board and program management office, or sponsoring agencies. That said, general timelines for a FedRAMP JAB P-ATO can take seven to nine months to complete. Agency authorizations can take anywhere from four to six months to complete. Some cases have taken much longer than this.

Part of the issue is that the FedRAMP JAB can only handle so many authorizations a year. On average, the JAB prioritizes 12 cloud service offerings each year. It evaluates cloud service offerings through a process called FedRAMP Connect, which it uses to decide which offerings will be selected for the given year.

Among other methods, the executive order opens the door for considering relevant compliance frameworks mapped to FedRAMP and allowing them to serve as a substitute for relevant portions of the FedRAMP process.

With this clear challenge between the number of as-a-service offerings in the market and FedRAMP’s limited ability to scale to authorize, other compliance frameworks are being considered. But it’s yet to be determined what those alternative frameworks may be and what could be the challenges associated with them.

Some cybersecurity professionals have suggested one such alternative may be the Cloud Security Alliance’s Cloud Controls Matrix (CCM), which provides 197 controls across 17 domains. It is also mapped to industry frameworks, including FedRAMP. However, one challenge associated with CCM is that it does not have the same third-party assessor rigor that FedRAMP has and allows companies to self-attest that their products meet the standards.

There are also cascading effects of opening the door to FedRAMP alternatives within the defense industrial base. Defense companies have to deal with regulations such as the Defense Department’s vendor certification program called Cybersecurity Maturity Model Certification and acquisition rule 7012, which provides guidance to defense contractors using cloud services when dealing with covered defense information. There has been no shortage of talk of reciprocity between FedRAMP and CMMC. If FedRAMP opens the door for reciprocity with other control frameworks, this then creates a potentially transitive situation with anything FedRAMP would use as an alternative framework. In other words, if alternative frameworks are accepted in place of FedRAMP for federal cloud use, then theoretically FedRAMP alternatives would also potentially have reciprocity with CMMC. This creates a lot of questions and challenges for the Defense Department, the defense industry and CMMC that would need to be explored.

While there are no easy answers, it is clear that the government’s consumption of cloud service offerings is only accelerating, a trend the COVID pandemic intensified. Given this reality, the current model of authorization and approval of cloud services simply has not scaled, and will not scale, to meet the demand, which makes it necessary to explore alternative options. That said, alternatives can’t come at the expense of the security of federal and defense data.

Chris Hughes is an industry consultant, an adjunct professor with the University of Maryland Global Campus and Capitol Technology University, and co-host of the Resilient Cyber podcast. He previously served in the U.S. Air Force, as a federal civilian with Naval Information Warfare Systems Atlantic, and as a member of the General Services Administration’s Joint Authorization Board for FedRAMP.

Article link: https://www.nextgov.com/ideas/2021/06/executive-order-hints-fedramp-alternatives/174505/

A quantum computer came up with better answers to a physics problem than a conventional supercomputer.

- June 14, 2023, updated 11:51 a.m. ET

Quantum computers today are small in computational scope — the chip inside your smartphone contains billions of transistors while the most powerful quantum computer contains a few hundred of the quantum equivalent of a transistor. They are also unreliable. If you run the same calculation over and over, they will most likely churn out different answers each time.

But with their intrinsic ability to consider many possibilities at once, quantum computers do not have to be very large to tackle certain prickly problems of computation, and on Wednesday, IBM researchers announced that they had devised a method to manage the unreliability in a way that would lead to reliable, useful answers.

“What IBM showed here is really an amazingly important step in that direction of making progress towards serious quantum algorithmic design,” said Dorit Aharonov, a professor of computer science at the Hebrew University of Jerusalem who was not involved with the research.

While researchers at Google in 2019 claimed that they had achieved “quantum supremacy” — a task performed much more quickly on a quantum computer than a conventional one — IBM’s researchers say they have achieved something new and more useful, albeit more modestly named.

“We’re entering this phase of quantum computing that I call utility,” said Jay Gambetta, a vice president of IBM Quantum. “The era of utility.”

A team of IBM scientists who work for Dr. Gambetta described their results in a paper published on Wednesday in the journal Nature.

Present-day computers are called digital, or classical, because they deal with bits of information that are either 1 or 0, on or off. A quantum computer performs calculations on quantum bits, or qubits, that capture a more complex state of information. Just as a thought experiment by the physicist Erwin Schrödinger postulated that a cat could be in a quantum state that is both dead and alive, a qubit can be both 1 and 0 simultaneously.

That allows quantum computers to make many calculations in one pass, while digital ones have to perform each calculation separately. By speeding up computation, quantum computers could potentially solve big, complex problems in fields like chemistry and materials science that are out of reach today. Quantum computers could also have a darker side by threatening privacy through algorithms that break the protections used for passwords and encrypted communications.

When Google researchers made their supremacy claim in 2019, they said their quantum computer performed a calculation in 3 minutes 20 seconds that would take about 10,000 years on a state-of-the-art conventional supercomputer.

But some other researchers, including those at IBM, discounted the claim, saying the problem was contrived. “Google’s experiment, as impressive it was, and it was really impressive, is doing something which is not interesting for any applications,” said Dr. Aharonov, who also works as the chief strategy officer of Qedma, a quantum computing company.

The Google computation also turned out to be less impressive than it first appeared. A team of Chinese researchers was able to perform the same calculation on a non-quantum supercomputer in just over five minutes, far quicker than the 10,000 years the Google team had estimated.

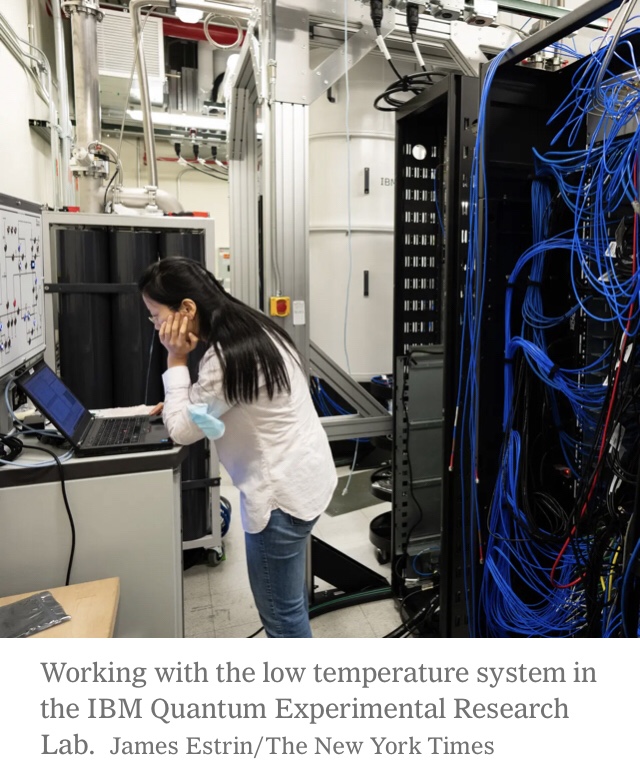

The IBM researchers in the new study performed a different task, one that interests physicists. They used a quantum processor with 127 qubits to simulate the behavior of 127 atom-scale bar magnets — tiny enough to be governed by the spooky rules of quantum mechanics — in a magnetic field. That is a simple system known as the Ising model, which is often used to study magnetism.

This problem is too complex for a precise answer to be calculated even on the largest, fastest supercomputers.

On the quantum computer, the calculation took less than a thousandth of a second to complete. Each quantum calculation was unreliable — fluctuations of quantum noise inevitably intrude and induce errors — but each calculation was quick, so it could be performed repeatedly.

Indeed, for many of the calculations, additional noise was deliberately added, making the answers even more unreliable. But by varying the amount of noise, the researchers could tease out the specific characteristics of the noise and its effects at each step of the calculation.

“We can amplify the noise very precisely, and then we can rerun that same circuit,” said Abhinav Kandala, the manager of quantum capabilities and demonstrations at IBM Quantum and an author of the Nature paper. “And once we have results of these different noise levels, we can extrapolate back to what the result would have been in the absence of noise.”

In essence, the researchers were able to subtract the effects of noise from the unreliable quantum calculations, a process they call error mitigation.
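The idea of running at several noise levels and extrapolating back to zero can be illustrated with a minimal sketch. It assumes, purely for illustration, that the measured value drifts linearly with the noise scale, and it uses made-up numbers. The function name `extrapolate_to_zero_noise` and the linear model are assumptions here, not the procedure used in the Nature paper.

```python
def extrapolate_to_zero_noise(noise_scales, measured_values):
    """Fit a straight line by least squares and return its value at noise scale 0.

    noise_scales: factors by which the noise was amplified (1.0 = native noise).
    measured_values: the averaged result observed at each noise scale.
    """
    n = len(noise_scales)
    if n < 2 or n != len(measured_values):
        raise ValueError("need at least two (scale, value) pairs of equal length")

    mean_x = sum(noise_scales) / n
    mean_y = sum(measured_values) / n
    sxx = sum((x - mean_x) ** 2 for x in noise_scales)
    if sxx == 0:
        raise ValueError("noise scales must not all be identical")
    sxy = sum((x - mean_x) * (y - mean_y)
              for x, y in zip(noise_scales, measured_values))

    slope = sxy / sxx
    # The intercept of the fitted line is the estimate at zero noise.
    return mean_y - slope * mean_x


if __name__ == "__main__":
    # Hypothetical data: a quantity that reads lower as noise is amplified.
    scales = [1.0, 1.5, 2.0]
    values = [0.70, 0.65, 0.60]
    print(extrapolate_to_zero_noise(scales, values))
```

In practice the dependence on noise need not be linear, so the choice of fitting model is itself part of the error-mitigation design.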

“You have to bypass that by inventing very clever ways to mitigate the noise,” Dr. Aharonov said. “And this is what they do.”

Altogether, the computer performed the calculation 600,000 times, converging on an answer for the overall magnetization produced by the 127 bar magnets.

But how good was the answer?

For help, the IBM team turned to physicists at the University of California, Berkeley. Although an Ising model with 127 bar magnets is too big, with far too many possible configurations, to fit in a conventional computer, classical algorithms can produce approximate answers, a technique similar to how compression in JPEG images throws away less crucial data to reduce the size of the file while preserving most of the image’s details.

Michael Zaletel, a physics professor at Berkeley and an author of the Nature paper, said that when he started working with IBM, he thought his classical algorithms would do better than the quantum ones.

“It turned out a little bit differently than I expected,” Dr. Zaletel said.

Certain configurations of the Ising model can be solved exactly, and both the classical and quantum algorithms agreed on the simpler examples. For more complex but solvable instances, the quantum and classical algorithms produced different answers, and it was the quantum one that was correct.

Thus, for other cases where the quantum and classical calculations diverged and no exact solutions are known, “there is reason to believe that the quantum result is more accurate,” said Sajant Anand, a graduate student at Berkeley who did much of the work on the classical approximations.

It is not clear that quantum computing is indisputably the winner over classical techniques for the Ising model.

Mr. Anand is currently trying to add a version of error mitigation for the classical algorithm, and it is possible that could match or surpass the performance of the quantum calculations.

“It’s not obvious that they’ve achieved quantum supremacy here,” Dr. Zaletel said.

In the long run, quantum scientists expect that a different approach, error correction, will be able to detect and correct calculation mistakes, and that will open the door for quantum computers to speed ahead for many uses.

Error correction is already used in conventional computers and data transmission to fix garbles. But for quantum computers, error correction is likely years away, requiring better processors able to process many more qubits.

Error mitigation, the IBM scientists believe, is an interim solution that can be used now for increasingly complex problems beyond the Ising model.

“This is one of the simplest natural science problems that exists,” Dr. Gambetta said. “So it’s a good one to start with. But now the question is, how do you generalize it and go to more interesting natural science problems?”

Those might include figuring out the properties of exotic materials, accelerating drug discovery and modeling fusion reactions.

Kenneth Chang has been at The Times since 2000, writing about physics, geology, chemistry, and the planets. Before becoming a science writer, he was a graduate student whose research involved the control of chaos. @kchangnyt

Article link: https://www.nytimes.com/2023/06/14/science/ibm-quantum-computing.html

Jun 14, 2023, 7:45 AM

- The European parliament has voted to take steps to regulate AI technology such as ChatGPT.

- The proposed rules would require such systems to indicate that content was AI-generated.

- Talks with EU member states will shape the precise wording of the legislation known as the AI Act.

The European Union has taken the first steps towards regulating artificial intelligence, with its parliament backing a ban on the technology for biometric surveillance, emotion recognition, and predictive policing.

Europe will also seek to require systems such as ChatGPT to indicate that content was generated by AI, and deem AI systems used to influence voters to be “high-risk.”

The rules “aim to promote the uptake of human-centric and trustworthy AI and protect the health, safety, fundamental rights and democracy from its harmful effects,” per a press release from the European parliament on Wednesday.

The measures were passed by 499 votes to 28, with 93 abstentions. Talks will begin with EU member states on the precise wording of the legislation known as the AI Act.

The rules aim to ensure that AI developed and used in Europe complies with EU rights and values including human oversight, safety, privacy, transparency, non-discrimination, and social and environmental wellbeing.

Sam Altman, the CEO of ChatGPT maker OpenAI, met several European leaders in May to discuss the potential impact of AI on society. He said he had no plans to pull ChatGPT out of Europe despite saying proposed EU rules to govern AI could backfire.

As Insider previously reported, one of Altman’s main concerns with the EU’s proposed law centers on its definition of “high-risk” systems, which could ensnare ChatGPT.

European parliament members voted to deem AI systems that “pose significant harm to people’s health, safety, fundamental rights or the environment” as high-risk, per the press release. AI systems used to influence voters and the outcome of elections, and recommender systems used by social-media platforms are also on the “high-risk” list.

Co-rapporteur Brando Benifei of Italy said Europe had devised a “concrete response” to the potential dangers posed by AI. “We want AI’s positive potential for creativity and productivity to be harnessed but we will also fight to protect our position and counter dangers to our democracies and freedoms,” he said.

Co-rapporteur Dragos Tudorache of Romania said Europe’s AI Act would shape the development and governance of AI globally and ensure it was used in line with the “European values of democracy, fundamental rights, and the rule of law.”

OpenAI, which is heavily backed by Microsoft, released ChatGPT in November 2022, and it became the fastest-growing internet app. Its popularity has fueled massive consumer and investor interest in generative AI, as well as concerns from lawmakers about the technology’s potential impact on jobs, elections, and the media.

Article link: https://www.businessinsider.com/europe-plans-to-put-guardrails-on-chatgpt-other-ai-apps-2023-6

From the Magazine (January–February 2016)

Summary.

Collaboration is taking over the workplace. According to data collected by the authors over the past two decades, the time spent by managers and employees in collaborative activities has ballooned by 50% or more. There is much to applaud about these developments—but when consumption of a valuable resource spikes that dramatically, it should also give us pause.

At many companies, people spend around 80% of their time in meetings or answering colleagues’ requests, leaving little time for all the critical work they must complete on their own. What’s more, research the authors have done across more than 300 organizations shows that the apportionment of collaborative work is often extremely lopsided. In most cases, 20% to 35% of value-added collaborations come from only 3% to 5% of employees. The avalanche of demands for input or advice, access to resources, or sometimes just presence in a meeting causes performance to suffer. Employees take assignments home, and soon burnout and turnover become real risks.

Leaders must start to manage collaboration more effectively in two ways: (1) by mapping the supply and demand in their organizations and redistributing the work more evenly among employees, and (2) by incentivizing people to collaborate more efficiently.

Collaboration is taking over the workplace.

As business becomes increasingly global and cross-functional, silos are breaking down, connectivity is increasing, and teamwork is seen as a key to organizational success. According to data we have collected over the past two decades, the time spent by managers and employees in collaborative activities has ballooned by 50% or more.

Certainly, we find much to applaud in these developments. However, when consumption of a valuable resource spikes that dramatically, it should also give us pause. Consider a typical week in your own organization. How much time do people spend in meetings, on the phone, and responding to e-mails? At many companies the proportion hovers around 80%, leaving employees little time for all the critical work they must complete on their own. Performance suffers as they are buried under an avalanche of requests for input or advice, access to resources, or attendance at a meeting. They take assignments home, and soon, according to a large body of evidence on stress, burnout and turnover become real risks.

What’s more, research we’ve done across more than 300 organizations shows that the distribution of collaborative work is often extremely lopsided. In most cases, 20% to 35% of value-added collaborations come from only 3% to 5% of employees. As people become known for being both capable and willing to help, they are drawn into projects and roles of growing importance. Their giving mindset and desire to help others quickly enhances their performance and reputation. As a recent study led by Ning Li, of the University of Iowa, shows, a single “extra miler”—an employee who frequently contributes beyond the scope of his or her role—can drive team performance more than all the other members combined.

But this “escalating citizenship,” as the University of Oklahoma professor Mark Bolino calls it, only further fuels the demands placed on top collaborators. We find that what starts as a virtuous cycle soon turns vicious. Soon helpful employees become institutional bottlenecks: Work doesn’t progress until they’ve weighed in. Worse, they are so overtaxed that they’re no longer personally effective. And more often than not, the volume and diversity of work they do to benefit others goes unnoticed, because the requests are coming from other units, varied offices, or even multiple companies. In fact, when we use network analysis to identify the strongest collaborators in organizations, leaders are typically surprised by at least half the names on their lists. In our quest to reap the rewards of collaboration, we have inadvertently created open markets for it without recognizing the costs. What can leaders do to manage these demands more effectively?

Precious Personal Resources

First, it’s important to distinguish among the three types of “collaborative resources” that individual employees invest in others to create value: informational, social, and personal. Informational resources are knowledge and skills—expertise that can be recorded and passed on. Social resources involve one’s awareness, access, and position in a network, which can be used to help colleagues better collaborate with one another. Personal resources include one’s own time and energy.

These three resource types are not equally efficient. Informational and social resources can be shared—often in a single exchange—without depleting the collaborator’s supply. That is, when I offer you knowledge or network awareness, I also retain it for my own use. But an individual employee’s time and energy are finite, so each request to participate in or approve decisions for a project leaves less available for that person’s own work.

Unfortunately, personal resources are often the default demand when people want to collaborate. Instead of asking for specific informational or social resources—or better yet, searching in existing repositories such as reports or knowledge libraries—people ask for hands-on assistance they may not even need. An exchange that might have taken five minutes or less turns into a 30-minute calendar invite that strains personal resources on both sides of the request.

Consider a case study from a blue-chip professional services firm. When we helped the organization map the demands facing a group of its key employees, we found that the top collaborator—let’s call him Vernell—had 95 connections based on incoming requests. But only 18% of the requesters said they needed more personal access to him to achieve their business goals; the rest were content with the informational and social resources he was providing. The second most connected person was Sharon, with 89 people in her network, but her situation was markedly different, and more dangerous, because 40% of them wanted more time with her—a significantly greater draw on her personal resources.

We find that as the percentage of requesters seeking more access moves beyond about 25%, it hinders the performance of both the individual and the group and becomes a strong predictor of voluntary turnover. As well-regarded collaborators are overloaded with demands, they may find that no good deed goes unpunished.
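The arithmetic behind that threshold can be sketched in a few lines of Python. The connection counts and percentages for Vernell and Sharon come from the case study above, and the cutoff is the "about 25" percent figure the authors cite. The variable names, the `at_risk` helper and the exact value of 0.25 are assumptions made for illustration, not the authors' actual method.

```python
# Approximate cutoff from the text; the authors say "about 25" percent.
OVERLOAD_THRESHOLD = 0.25

# Figures taken from the professional services case study.
collaborators = {
    "Vernell": {"connections": 95, "share_wanting_more_access": 0.18},
    "Sharon": {"connections": 89, "share_wanting_more_access": 0.40},
}


def at_risk(share, threshold=OVERLOAD_THRESHOLD):
    """Return True when the share of requesters wanting more time exceeds the cutoff."""
    return share > threshold


# Flag each collaborator and estimate how many requesters want more access.
flags = {}
requesters_wanting_more = {}
for name, data in collaborators.items():
    share = data["share_wanting_more_access"]
    flags[name] = at_risk(share)
    requesters_wanting_more[name] = round(data["connections"] * share)

for name in collaborators:
    print(name, requesters_wanting_more[name], "at risk" if flags[name] else "ok")
```

Under these assumptions Sharon is flagged and Vernell is not, even though Vernell has more connections. It is the share of requesters wanting more personal time, not the size of the network, that signals overload.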

The exhibit “In Demand, Yet Disengaged,” reflecting data on business unit line leaders across a sample of 20 organizations, illustrates the problem. People at the top center and right of the chart—that is, those seen as the best sources of information and in highest demand as collaborators in their companies—have the lowest engagement and career satisfaction scores, as represented by the size of their bubbles. Our research shows that this ultimately results in their either leaving their organizations (taking valuable knowledge and network resources with them) or staying and spreading their growing apathy to their colleagues.

Leaders can solve this problem in two ways: by streamlining and redistributing responsibilities for collaboration and by rewarding effective contributions.

Redistributing the Work

Any effort to increase your organization’s collaborative efficiency should start with an understanding of the existing supply and demand. Employee surveys, electronic communications tracking, and internal systems such as 360-degree feedback and CRM programs can provide valuable data on the volume, type, origin, and destination of requests, as can more in-depth network analyses and tools. For example, Do.com monitors calendars and provides daily and weekly reports to both individual employees and managers about time spent in meetings versus on solo work. The idea is to identify the people most at risk for collaborative overload. Once that’s been done, you can focus on three levers:

Encourage behavioral change.

Show the most active and overburdened helpers how to filter and prioritize requests; give them permission to say no (or to allocate only half the time requested); and encourage them to make an introduction to someone else when the request doesn’t draw on their own unique contributions. The latest version of the team-collaboration software Basecamp now offers a notification “snooze button” that encourages employees to set stronger boundaries around their incoming information flow. It’s also worth suggesting that when they do invest personal resources, it be in value-added activities that they find energizing rather than exhausting. In studying employees at one Fortune 500 technology company, we found that although 60% wanted to spend less time responding to ad hoc collaboration requests, 40% wanted to spend more time training, coaching, and mentoring. After their contributions were shifted to those activities, employees were less prone to stress and disengagement.

To stem the tide of incoming requests, help seekers, too, must change their behavior. Resetting norms regarding when and how to initiate e-mail requests or meeting invitations can free up a great deal of wasted time. As a step in this direction, managers at Dropbox eliminated all recurring meetings for a two-week period. That forced employees to reassess the necessity of those gatherings and, after the hiatus, helped them become more vigilant about their calendars and about making sure each meeting had an owner and an agenda. Rebecca Hinds and Bob Sutton, of Stanford, found that although the company tripled the number of employees at its headquarters over the next two years, its meetings were shorter and more productive.

In addition, requests for time-sapping reviews and approvals can be reduced in many risk-averse cultures by encouraging people to take courageous action on decisions they should be making themselves, rather than constantly checking with leaders or stakeholders.

Leverage technology and physical space to make informational and social resources more accessible and transparent.

Relevant technical tools include Slack and Salesforce.com’s Chatter, with their open discussion threads on various work topics; and Syndio and VoloMetrix (recently acquired by Microsoft), which help individuals assess networks and make informed decisions about collaborative activities. Also rethink desk or office placement. A study led by the Boston University assistant professor Stine Grodal documented the detrimental effects of team meetings and e-mails on the development and maintenance of productive helping relationships. When possible, managers should colocate highly interdependent employees to facilitate brief and impromptu face-to-face collaborations, resulting in a more efficient exchange of resources.

Consider structural changes.

Can you shift decision rights to more-appropriate people in the network? It may seem obvious that support staff or lower-level managers should be authorized to approve small capital expenditures, travel, and some HR activities, but in many organizations they aren’t. Also consider whether you can create a buffer against demands for collaboration. Many hospitals now assign each unit or floor a nurse preceptor, who has no patient care responsibilities and is therefore available to respond to requests as they emerge. The result, according to research that one of us (Adam Grant) conducted with David Hofmann and Zhike Lei, is fewer bottlenecks and quicker connections between nurses and the right experts. Other types of organizations might also benefit from designating “utility players”—which could lessen demand for the busiest employees—and possibly rotating the role among team members while freeing up personal resources by reducing people’s workloads.

Rewarding Effective Collaboration

We typically see an overlap of only about 50% between the top collaborative contributors in an organization and those employees deemed to be the top performers. As we’ve explained, many helpers underperform because they’re overwhelmed; that’s why managers should aim to redistribute work. But we also find that roughly 20% of organizational “stars” don’t help; they hit their numbers (and earn kudos for it) but don’t amplify the success of their colleagues. In these cases, as the former Goldman Sachs and GE chief learning officer Steve Kerr once wrote, leaders are hoping for A (collaboration) while rewarding B (individual achievement). They must instead learn how to spot and reward people who do both.

Consider professional basketball, hockey, and soccer teams. They don’t just measure goals; they also track assists. Organizations should do the same, using tools such as network analysis, peer recognition programs, and value-added performance metrics. We helped one life sciences company use these tools to assess its workforce during a multibillion-dollar acquisition. Because the deal involved consolidating facilities around the world and relocating many employees, management was worried about losing talent. A well-known consultancy had recommended retention bonuses for leaders. But this approach failed to consider those very influential employees deep in the acquired company who had broad impact but no formal authority. Network analytics allowed the company to pinpoint those people and distribute bonuses more fairly.

Efficient sharing of informational, social, and personal resources should also be a prerequisite for positive reviews, promotions, and pay raises. At one investment bank, employees’ annual performance reviews include feedback from a diverse group of colleagues, and only those people who are rated as strong collaborators (that is, able to cross-sell and provide unique customer value to transactions) are considered for the best promotions, bonuses, and retention plans. Corning, the glass and ceramics manufacturer, uses similar metrics to decide which of its scientists and engineers will be named fellows—a high honor that guarantees a job and a lab for life. One criterion is to be the first author on a patent that generates at least $100 million in revenue. But another is whether the candidate has worked as a supporting author on colleagues’ patents. Corning grants status and power to those who strike a healthy balance between individual accomplishment and collaborative contribution. (Disclosure: Adam Grant has done consulting work for Corning.)

Collaboration is indeed the answer to many of today’s most pressing business challenges. But more isn’t always better. Leaders must learn to recognize, promote, and efficiently distribute the right kinds of collaborative work, or their teams and top talent will bear the costs of too much demand for too little supply. In fact, we believe that the time may have come for organizations to hire chief collaboration officers. By creating a senior executive position dedicated to collaboration, leaders can send a clear signal about the importance of managing teamwork thoughtfully and provide the resources necessary to do it effectively. That might reduce the odds that the whole becomes far less than the sum of its parts.

A version of this article appeared in the January–February 2016 issue (pp. 74–79) of Harvard Business Review.

Article link: https://hbr.org/2016/01/collaborative-overload?

- Rob Cross is the Edward A. Madden Professor of Global Leadership at Babson College and the cofounder and director of the Connected Commons. He is also coauthor of The Microstress Effect: How Little Things Pile Up and Create Big Problems — and What to Do About It (Harvard Business Review Press, 2023) and the author of Beyond Collaboration Overload (Harvard Business Review Press, 2021).

- Reb Rebele is a researcher for Wharton People Analytics and teaches in the Master of Applied Positive Psychology (MAPP) program at the University of Pennsylvania.

- Adam Grant is an organizational psychologist at Wharton and the author of Think Again: The Power of Knowing What You Don’t Know (Viking, 2021).

By CHRIS RIOTTA JUNE 6, 2023

The agency’s CHIPS Research and Development Office aims to advance U.S. research and development efforts and “ensure America’s global leadership” in the semiconductor sector, officials said.

The National Institute of Standards and Technology has compiled a new team of leaders to help spearhead federal research and development efforts that aim to boost U.S. leadership in semiconductor manufacturing.

NIST Director Laura Locascio said Tuesday that the heads of the CHIPS Research and Development Office “will propel CHIPS for America and the nation’s semiconductor sector forward” by overseeing four new programs dedicated to advancing U.S. semiconductor technology and innovation.

The office was established after Congress passed the Creating Helpful Incentives to Produce Semiconductors — or CHIPS — Act last year, which included over $50 billion for domestic semiconductor research and development.

Lora Weiss, senior vice president for research at Penn State University, will serve as director of the office. Weiss, who also serves as president of the Penn State Research Foundation, oversees research efforts across the university’s 12 academic colleges, as well as seven interdisciplinary research institutes and a university-affiliated research center for the Navy.

Eric Lin, former director of the NIST Material Measurement Laboratory, will serve as deputy director, and Neil Alderoty, former executive administrator of the laboratory, will serve as executive officer.

The CHIPS Research and Development Office is tasked with managing four integrated semiconductor programs, including the National Semiconductor Technology Center and the National Advanced Packaging Manufacturing Program.

The office will also oversee up to three new manufacturing institutes focused on semiconductor technologies, as well as the CHIPS Metrology research and development program, which conducts measurement science to help develop new materials and production methods for U.S. semiconductors.

Richard-Duane Chambers will take on the role of associate director for integration and policy after serving as a lead staffer on the Senate Committee on Commerce, Science and Transportation’s subcommittee on space and science. Maria Dowell will assume the role of director of the CHIPS Metrology Research and Development program, after previously serving as director of the NIST Communications Technology Laboratory.

Secretary of Commerce Gina Raimondo said the new research and development programs “will ensure America’s global leadership by creating a robust semiconductor R&D ecosystem.

“These leaders bring exactly the depth and breadth of organizational, programmatic and technical leadership experience that CHIPS needs to stand up new, transformational R&D programs,” Raimondo said in a statement.

The U.S. currently produces an estimated 10% of the world’s supply of semiconductors, while East Asia is responsible for more than 75% of global production. In addition to the nearly $50 billion included in the CHIPS Act for research and development, the bill also featured a $10 billion investment in regional innovation and technology hubs nationwide, as well as funding to support science, technology, engineering and mathematics education and workforce development activities.

Article link: https://www.nextgov.com/policy/2023/06/nist-unveils-new-leadership-team-drive-semiconductor-innovation/387176/

December 04, 2018

Summary.

A “full-stack” data scientist has mastery of machine learning, statistics, and analytics. Today’s fashion in data science favors flashy sophistication with a dash of sci-fi, making AI and machine learning the darlings of the job market. Alternative challengers for the alpha spot come from statistics, thanks to a century-long reputation for rigor and mathematical superiority. What about analysts? Whereas excellence in statistics is about rigor and excellence in machine learning is about performance, excellence in analytics is all about speed. Analysts are your best bet for coming up with hypotheses in the first place. As analysts mature, they’ll begin to get the hang of judging what’s important in addition to what’s interesting, allowing decision-makers to step away from the middleman role. Of the three breeds, analysts are the most likely heirs to the decision throne.

The top trophy hire in data science is elusive, and it’s no surprise: a “full-stack” data scientist has mastery of machine learning, statistics, and analytics. When teams can’t get their hands on a three-in-one polymath, they set their sights on luring the most impressive prize among the single-origin specialists. Which of those skills gets the pedestal?

Today’s fashion in data science favors flashy sophistication with a dash of sci-fi, making AI and machine learning the darlings of the job market. Alternative challengers for the alpha spot come from statistics, thanks to a century-long reputation for rigor and mathematical superiority. What about analysts?

Analytics as a second-class citizen

If your primary skill is analytics (or data-mining or business intelligence), chances are that your self-confidence has taken a beating as machine learning and statistics have become prized within companies, the job market, and the media.

But what the uninitiated rarely grasp is that the three professions under the data science umbrella are completely different from one another. They may use some of the same methods and equations, but that’s where the similarity ends. Far from being a lesser version of the other data science breeds, good analysts are a prerequisite for effectiveness in your data endeavors. It’s dangerous to have them quit on you, but that’s exactly what they’ll do if you under-appreciate them.

Instead of asking an analyst to develop their statistics or machine learning skills, consider encouraging them to seek the heights of their own discipline first. In data science, excellence in one area beats mediocrity in two. So, let’s examine what it means to be truly excellent in each of the data science disciplines, what value they bring, and which personality traits are required to survive each job. Doing so will help explain why analysts are valuable, and how organizations should use them.

Excellence in statistics: rigor

Statisticians are specialists in coming to conclusions beyond your data safely — they are your best protection against fooling yourself in an uncertain world. To them, inferring something sloppily is a greater sin than leaving your mind a blank slate, so expect a good statistician to put the brakes on your exuberance. They care deeply about whether the methods applied are right for the problem and they agonize over which inferences are valid from the information at hand.

The result? A perspective that helps leaders make important decisions in a risk-controlled manner. In other words, they use data to minimize the chance that you’ll come to an unwise conclusion.

Excellence in machine learning: performance

You might be an applied machine learning/AI engineer if your response to “I bet you couldn’t build a model that passes testing at 99.99999% accuracy” is “Watch me.” With the coding chops to build both prototypes and production systems that work and the stubborn resilience to fail every hour for several years if that’s what it takes, machine learning specialists know that they won’t find the perfect solution in a textbook. Instead, they’ll be engaged in a marathon of trial-and-error. Having great intuition for how long it’ll take them to try each new option is a huge plus and is more valuable than an intimate knowledge of how the algorithms work (though it’s nice to have both). Performance means more than clearing a metric — it also means reliable, scalable, and easy-to-maintain models that perform well in production. Engineering excellence is a must.

The result? A system that automates a tricky task well enough to pass your statistician’s strict testing bar and deliver the audacious performance a business leader demanded.

Wide versus deep

What the previous two roles have in common is that they both provide high-effort solutions to specific problems. If the problems they tackle aren’t worth solving, you end up wasting their time and your money. A frequent lament among business leaders is, “Our data science group is useless.” And the problem usually lies in an absence of analytics expertise.

Statisticians and machine learning engineers are narrow-and-deep workers — the shape of a rabbit hole, incidentally — so it’s really important to point them at problems that deserve the effort. If your experts are carefully solving the wrong problems, your investment in data science will suffer low returns. To ensure that you can make good use of narrow-and-deep experts, you either need to be sure you already have the right problem or you need a wide-and-shallow approach to finding one.

Excellence in analytics: speed

The best analysts are lightning-fast coders who can surf vast datasets quickly, encountering and surfacing potential insights faster than those other specialists can say “whiteboard.” Their semi-sloppy coding style baffles traditional software engineers — but leaves them in the dust. Speed is their highest virtue, closely followed by the ability to identify potentially useful gems. A mastery of visual presentation of information helps, too: beautiful and effective plots allow the mind to extract information faster, which pays off in time-to-potential-insights.

The result is that the business gets a finger on its pulse and eyes on previously-unknown unknowns. This generates the inspiration that helps decision-makers select valuable quests to send statisticians and ML engineers on, saving them from mathematically-impressive excavations of useless rabbit holes.

Sloppy nonsense or stellar storytelling?

“But,” object the statisticians, “most of their so-called insights are nonsense.” By that they mean the results of their exploration may reflect only noise. Perhaps, but there’s more to the story.

Analysts are data storytellers. Their mandate is to summarize interesting facts and to use data for inspiration. In some organizations those facts and that inspiration become input for human decision-makers. But in more sophisticated data operations, data-driven inspiration gets flagged for proper statistical follow-up.

Good analysts have unwavering respect for the one golden rule of their profession: do not come to conclusions beyond the data (and prevent your audience from doing it, too). To this end, one way to spot a good analyst is that they use softened, hedging language. For example, not “we conclude” but “we are inspired to wonder”. They also discourage leaders’ overconfidence by emphasizing a multitude of possible interpretations for every insight.

As long as analysts stick to the facts — saying only “This is what is here.” — and don’t take themselves too seriously, the worst crime they could commit is wasting someone’s time when they run it by them.

While statistical skills are required to test hypotheses, analysts are your best bet for coming up with those hypotheses in the first place. For instance, they might say something like “It’s only a correlation, but I suspect it could be driven by …” and then explain why they think that. This takes strong intuition about what might be going on beyond the data, and the communication skills to convey the options to the decision-maker, who typically calls the shots on which hypotheses (of many) are important enough to warrant a statistician’s effort. As analysts mature, they’ll begin to get the hang of judging what’s important in addition to what’s interesting, allowing decision-makers to step away from the middleman role.

Of the three breeds, analysts are the most likely heirs to the decision throne. Because subject matter expertise goes a long way towards helping you spot interesting patterns in your data faster, the best analysts are serious about familiarizing themselves with the domain. Failure to do so is a red flag. As their curiosity pushes them to develop a sense for the business, expect their output to shift from a jumble of false alarms to a sensibly-curated set of insights that decision-makers are more likely to care about.

Analytics for decision-making

To avoid wasted time, analysts should lay out the story they’re tempted to tell and poke it from several angles with follow-up investigations to see if it holds water before bringing it to decision-makers. The decision-maker should then function as a filter between exploratory data analytics and statistical rigor. If someone with decision responsibility finds the analyst’s exploration promising for a decision they have to make, they then can sign off on a statistician spending the time to do a more rigorous analysis. (This process indicates why just telling analysts to get better at statistics misses the point in an important way. Not only are the two activities separate, but another person sits in between them, meaning it’s not necessarily any more efficient for one person to do both things.)

Analytics for machine learning and AI

Machine learning specialists put a bunch of potential data inputs through algorithms, tweak the settings, and keep iterating until the right outputs are produced. While it may sound like there’s no role for analytics here, in practice a business often has far too many potential ingredients to shove into the blender all at once. One way to filter down to a promising set of inputs to try is domain expertise — ask a human with opinions about how things might work. Another way is through analytics. To use the analogy of cooking, the machine learning engineer is great at tinkering in the kitchen, but right now they’re standing in front of a huge, dark warehouse full of potential ingredients. They could either start grabbing them haphazardly and dragging them back to their kitchens, or they could send a sprinter armed with a flashlight through the warehouse first. Your analyst is the sprinter; their ability to quickly help you see and summarize what-is-here is a superpower for your process.

The dangers of under-appreciating analysts

An excellent analyst is not a shoddy version of the machine learning engineer; their coding style is optimized for speed, on purpose. Nor are they a bad statistician, since they don’t deal with uncertainty at all; they deal with facts. The primary job of the analyst is to say: “Here’s what’s in our data. It’s not my job to talk about what it means, but perhaps it will inspire the decision-maker to pursue the question with a statistician.”

If you overemphasize hiring and rewarding skills in machine learning and statistics, you’ll lose your analysts. Who will help you figure out which problems are worth solving then? You’ll be left with a group of miserable experts who keep being asked to work on useless projects or analytics tasks they didn’t sign up for. Your data will lie around useless.

When in doubt, hire analysts before other roles. Appreciate them and reward them. Encourage them to grow to the heights of their chosen career (and not someone else’s). Of the cast of characters mentioned in this story, the only ones that every business needs are decision-makers and analysts. The others you’ll only be able to use when you know exactly what you need them for. Start with analytics and be proud of your newfound ability to open your eyes to the rich and beautiful information in front of you. Data-driven inspiration is a powerful thing.

Cassie Kozyrkov is the chief decision scientist at Google.

Article link: https://hbr.org/2018/12/what-great-data-analysts-do-and-why-every-organization-needs-them?

June 1, 2023 | 4 minute read

Nasim Afsar, MD, MBA, MHM Senior Vice President and Chief Health Officer, Oracle Health

More than 80% of your health status is dependent on social determinants of health, such as where you live and work, the air you breathe, your transportation, the water you drink, and whether you use tobacco or other drugs. These factors also can—and do—impact your access to healthcare, critical resources, and opportunities for well-being.

Underprivileged and underserved populations don’t have the same access or opportunities as others in our society, and they feel these inequities acutely with poorer health and higher mortality rates. Yet, everyone feels the effects of unhealthy communities and rising healthcare costs.

Health inequity is entrenched and systemic in global healthcare. The life expectancy gap between low- and high-income countries can be as high as 18 years. Inequities account for more than $320B in annual healthcare spending in the US alone, and this is anticipated to grow to $1T by 2040. This pattern is repeated in many countries worldwide, overwhelming health systems at a pace that isn’t sustainable.

Just as technology has improved so many other parts of our lives, it could also advance health equity by eliminating barriers to the tools, resources, knowledge, and opportunities we all need to be as healthy as possible.

Data is at the heart of advancing health equity. People, whether as patients, clinicians, community leaders, or public health officials, need clean, usable, trustworthy data to take meaningful action. To advance health equity, we need to understand social determinants of health along with information from claims data, research, operations, and community risks. A patient’s physical environment, health-related behaviors, and economic factors make a profound difference.

Bringing all this data together in a meaningful way will be transformative. While clinical data is flowing through information exchanges, most data that impacts a person’s health is siloed and disconnected, existing outside the hospital’s electronic health record system. That’s why we’re building an open, intelligent, cloud-based healthcare platform—to connect clinical and enterprise systems for organizations and to bring in data like social determinants of health or community risk factors. We’re removing the silos and connecting disparate systems.

At an individual level, technology can give providers a holistic view of a patient’s health through a single, longitudinal health record. This enables them to address all aspects of a person’s condition including non-clinical factors. At a community level, technology supports leaders with a full picture of their community’s health and risk. With better information, they can more effectively reach and treat different segments of the population, direct resources, programming, and interventions equitably, and create policies that lead to health justice.

With a cloud-based health platform, we can also extend data across the healthcare ecosystem, making it available for researchers and scientists. Advancing health equity includes ensuring everyone has access to the newest and most innovative therapies available. Technology enables life sciences companies to connect with healthcare providers, their clinicians, patients, and communities to expand access to clinical trials. This results in more diverse study participants, which means researchers have more representative, complete, and comprehensive patient information to validate the safety and efficacy of treatments.

Oracle Health’s Learning Health Network includes over 100 healthcare delivery organizations dedicated to sharing deidentified data to advance clinical research. With more than 100 million patients represented, diversity has become the network’s superpower. Clinical trials run through the Learning Health Network have three times the US average of Black and Hispanic participants. Individuals, clinicians, and communities who have never had the chance to participate in clinical discovery can now do so, gaining access to leading therapeutics, diagnostics, and medications sooner.

Technology comes with its own challenges in equity, so we have an obligation to be responsible stewards of patient data. AI and machine learning could be game changers as we look to solve healthcare’s biggest challenges like burnout and cost. Since healthcare data represents real people and real situations, we need to be careful that any technology relying on this data doesn’t further exacerbate the biases and inequities that exist today.

For example, healthcare providers have been using clinical calculators for decades to estimate risk and predict outcomes. Yet, many of these calculators include race as a data element, which isn’t evidence-based and can cause harm to segments of the population. Ethical AI begins with incorporating equitable and just principles into the design, development, delivery, and analysis of products, solutions, and services. Plus, in partnership across the healthcare ecosystem—especially with researchers—we need to evaluate new technologies for potential bias, eliminate it, and evaluate the results.
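One concrete form such an evaluation can take is comparing a tool's error rates across patient groups. The sketch below is a minimal illustration, assuming binary outcomes and predictions with a group label on each record. The record layout, group labels, and function name are hypothetical, and false negative rate is only one of several metrics an audit might use.

```python
from collections import defaultdict


def false_negative_rate_by_group(records):
    """records: iterable of (group, actual, predicted) with 0/1 labels.

    Returns {group: share of truly positive cases the tool missed}.
    Groups with no positive cases are omitted.
    """
    positives = defaultdict(int)
    missed = defaultdict(int)
    for group, actual, predicted in records:
        if actual == 1:
            positives[group] += 1
            if predicted == 0:
                missed[group] += 1
    return {g: missed[g] / positives[g] for g in positives}


# Hypothetical audit data: (group, actual outcome, tool's prediction).
records = [
    ("A", 1, 1), ("A", 1, 1), ("A", 1, 0), ("A", 0, 0),
    ("B", 1, 0), ("B", 1, 0), ("B", 1, 1), ("B", 0, 1),
]
rates = false_negative_rate_by_group(records)
gap = max(rates.values()) - min(rates.values())
```

A large gap between groups does not by itself explain the cause, but it flags where a closer review with researchers is warranted.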

In short, with more data and better information, we have an incredible opportunity to create a better healthcare system that improves lives and experiences for patients, clinicians, and communities around the world regardless of economic status or geography. It will take all of us working together to achieve this. Now is our time for action.

This story was originally published on HLTH.

As chief health officer, Dr. Nasim Afsar leads Oracle Health’s Health Transformation Office with a commitment to delivering healthy people, healthy workforce, and healthy business. Her team focuses on supporting clinical care, operational efficiency, and financial sustainability, leveraging big data, and advancing the future of the workforce and health equity. She also works on building the larger ecosystem of healthcare, including working closely with payers, retail, and public health agencies.

Dr. Afsar previously served as chief operating officer for University of California, Irvine (UCI) Health with the vision of delivering flawless care for patients in the region while creating the best place to work in healthcare. At UCI Health, she led inpatient and ambulatory operations, resulting in historically high ambulatory growth, inpatient volumes, surgeries, and tertiary care transfers to the institution. During COVID-19, she created a mobile field hospital and drive-thru testing centers, co-led the hospital at home program, and led a large-scale vaccination program. She also led health system contracting, spearheading new value-based products to market. As the executive for population health management, she ran a number of value-based programs, as well as the UCI Health Medicare Shared Savings Program (MSSP) accountable care organization (ACO).

Previously at University of California, Los Angeles (UCLA) Health, Dr. Afsar served as associate chief medical officer leading large-scale health system initiatives in quality, safety, and patient experience, and as chief quality officer for the Department of Medicine, overseeing population health initiatives.

She is past president of the Society of Hospital Medicine and served on its board of directors for eight years.

Article link: https://blogs.oracle.com/healthcare/post/the-80-problem-leveraging-data-to-achieve-health-equity?

For more than 50 years, Japan has offered healthcare without restrictions.

Japan – The Government of Japan

Naoko Kutty

Writer, Forum Agenda

Naoko Tochibayashi

Public Engagement Lead, World Economic Forum, Japan

This article is part of: Centre for Health and Healthcare

- Japan’s early adoption of universal health coverage has attracted attention from around the world.

- It is seen in many quarters as one of the foundations of an equitable society.

- The key challenge is to ensure the funding and HR requirements are in place to make this approach sustainable.

Japan’s early adoption of Universal Health Coverage (UHC) has attracted worldwide attention, as it is the country with the longest healthy life expectancy in the world.

One of the reasons for this is that for more than half a century Japan has maintained a health insurance system that everyone who has been a permanent resident of Japan for more than three months is required to join, allowing people living in Japan to access appropriate healthcare services at a cost they can afford. The system is characterized by free access: patients can choose any healthcare provider, from small clinics to large hospitals with the latest medical facilities, and all medical services are provided at a uniform price anywhere in Japan.

In addition, the Japanese government has increased the number of medical schools, especially in rural areas, in order to increase the number of physicians under the One Prefecture, One Medical School policy approved by the Cabinet in 1973. This has also contributed to the high quality of healthcare services in the country.

Japan’s initiative for global health

With such a history and system of insured health care, Japan issued the Basic Policy for Peace and Health in 2015, and based on its own experience, has shown a commitment to strengthen the necessary support for mainstreaming universal health coverage in the international community.

At the G7 Ise-Shima Summit and G7 Kobe Health Ministers’ Meeting held in 2016, Japan became the first G7 country to set the promotion of UHC as a major theme at the summit-level meeting. Japan expressed its commitment to play a leading role in international discussions by supporting the establishment of universal health coverage in Africa, Asia, and other regions in cooperation with the international community and organizations.

Subsequently, in 2017, Japan co-hosted the high-level forum on UHC with the World Bank, the World Health Organization (WHO), and the United Nations Children’s Fund (UNICEF). Government leaders from over 30 countries, as well as representatives and experts from international organizations, gathered to discuss how to promote universal health coverage in their countries, and adopted the Tokyo Declaration on UHC, which includes a commitment to accelerate efforts to achieve UHC by 2030.

In May 2022, the Kishida administration set forth its new Global Health Strategy based on the experience of responding to the spread of COVID-19. Placing the achievement of more resilient, equitable and sustainable UHC at the centre of Japan’s international cooperation in the health sector, the strategy provides guidelines for efforts to build a global health architecture and strengthen health systems to prepare for future public health crises, including pandemics.

Corporate contribution to the realization of UHC

Ajinomoto, a Japanese food company, has been developing a project to improve infant nutrition in Ghana since 2009, working with the Japan International Cooperation Agency (JICA) to promote baby nutritional supplements that reduce infant mortality due to malnutrition.

In addition, LEBER, Inc. has developed a healthcare app that can connect doctors and users anytime, anywhere, to solve the problems of people living in areas where access to healthcare is difficult. The app enables 24/7 remote doctor consultation via smartphone. With one of the largest networks of doctors in Japan, the app also functions as a physical condition management tool as well as a doctor consultation platform.

Maintaining and operating the mechanism is a challenge

Universal health coverage is attracting attention as an excellent approach, but the key issue is how to maintain and operate the system once it has been realized. Securing an operating budget and training human resources with expertise are essential to making UHC sustainable.