JAMA

Published Online: May 4, 2026

doi: 10.1001/jama.2026.

Key Points

Question How much do nonprofit hospitals spend on management consultants, and following the initiation of a management consulting contract, are there changes in hospital finances, operations, or quality of care?

Findings Nonprofit hospitals in the US (n = 2343) collectively spent more than $7.8 billion on management consulting services from 2009 to 2023. A stacked difference-in-differences design comparing 306 US nonprofit hospitals that used a management consulting firm for the first time with 513 matched hospitals that did not use a management consulting firm during the study period found little evidence of substantial, statistically significant, or systematic changes attributable to management consulting engagements.

Meaning These findings raise questions about the net value that nonprofit hospitals receive from management consulting services and call for careful examination of these contracts.

Abstract

Importance The presence of management consultants in the US health care industry has increased dramatically in recent decades and is now higher than in most other sectors of the US economy. Hospitals hire management consultants to provide external expertise and advise on strategic planning, organizational change, cost cutting, and revenue-enhancement activities. Despite the prominence and influence of these firms, there is no empirical evidence in the US documenting either how much is spent on these services or whether that spending leads to measurable improvements.

Objective To quantify nonprofit hospitals’ spending on management consultants and to evaluate changes in finances, operations, and quality of care following the initiation of a contract with a management consulting firm.

Design, Setting, and Population Observational study using a stacked difference-in-differences design to compare 306 US nonprofit hospitals that used a management consultant firm for the first time in 2010-2022 with 513 matched hospitals that did not use management consultants during 2009-2023.

Exposure First use of a management consulting firm.

Main Outcomes and Measures Financial performance (eg, revenues, expenses, margins, cash reserves, and fixed assets), operational measures (eg, inpatient utilization, staffing, executive and worker compensation, charity care, and community benefits), and quality-of-care measures (eg, claims-based 30-day mortality and readmission for acute myocardial infarction, pneumonia, and stroke).

Results More than 20% of nonprofit hospitals hired management consultants during the study period. Nonprofit hospitals that hired management consultants paid an average of $15.7 million for their services, and nonprofit hospitals collectively spent more than $7.8 billion on these services from 2009 to 2023. Despite this substantial investment, analyses of hospitals’ financial performance, operational decisions, and claims-based patient outcomes revealed little evidence of substantial, statistically significant, or systematic improvements attributable to consulting engagements. Relative changes were estimated for financial measures, such as net patient revenue (−2.22%; 95% CI, −5.11% to 0.76%; P = .14), operating expenses (−1.07%; 95% CI, −3.56% to 1.49%; P = .41), fixed assets (2.05%; 95% CI, −6.54% to 11.42%; P = .65), bad debt (−6.31%; 95% CI, −19.82% to 9.48%; P = .41), days’ cash on hand (−8.56%; 95% CI, −28.00% to 16.13%; P = .46), total margin (−0.19 [95% CI, −1.20 to 0.82] percentage points; P = .71), and operating margin (0.15 [95% CI, −0.94 to 1.23] percentage points; P = .79). Relative changes were estimated for operational measures, such as inpatient length of stay (1.71%; 95% CI, −0.34% to 3.81%; P = .10) and total inpatient days (0.29%; 95% CI, −2.57% to 3.23%; P = .85). Relative changes for quality-of-care outcomes were also generally not significant. The sole exception was 30-day readmission for patients with stroke (1.37 [95% CI, 0.14 to 2.61] percentage points; P = .03), which was not robust to alternative specifications.

Conclusions and Relevance Nonprofit hospitals expend substantial resources on management consultants, but there was no evidence of meaningful changes in hospital finances, operations, or quality of care. These findings raise questions about the net value that nonprofit hospitals receive from management consulting services and suggest the need to carefully examine the widespread use of management consultants by hospitals and other organizations across the health care industry.

The use of management consultants in the US health care industry has grown dramatically in recent years.1 Management consultants now play a larger role in health care than in most other sectors of the US economy.2 While some parts of the health care industry have long used management consultants, including pharmaceutical companies, health insurers, and other financially motivated health care organizations, management consultants are also used by nonprofit health care organizations, government-run agencies (eg, Veterans Health Administration, Centers for Disease Control and Prevention), and local public health departments.3 The growing role of management consultants in the health care sector has raised public concern, with several recent news stories linking major public health failures to management consulting firms.4–6 On one hand, these services are expensive and may have little value or even cause harm if they sacrifice care in the service of improving financial or operating performance measures. On the other hand, management consultants may provide health care organizations with valuable expertise on how to improve operational efficiency, increase revenues, set strategic direction, and generally enhance management practices.7 If they do improve management practices, the literature suggests this could ultimately improve health care delivery and quality of care for patients.8–10

Despite the increasing role of management consultants in health care, there is almost no empirical research examining how management consultants affect health care organizations. The notable exceptions are studies focused on the UK National Health Service.11–13 Even outside of health care, little is known about the effect of management consultants owing to the difficulty in observing contracts and measuring their consequences. The only research we could identify was an experimental study based in India and a contemporaneous study using Belgian tax data.14,15

We leveraged detailed tax filings for all nonprofit hospitals in the US to provide conservative estimates of spending on management consultants by nonprofit hospitals. We characterized the nature of these management consulting contracts and leveraged a difference-in-differences approach to quantify their impact on hospital finances, operations, and quality of care. This study provides the first empirical analysis of the impact that management consultants have on the US health care industry. To our knowledge, it also provides the first systematic empirical analysis of management consultants in any US industry.

This research was approved by the institutional review board at the University of Chicago. Results are reported in accordance with the Strengthening the Reporting of Observational Studies in Epidemiology (STROBE) reporting guideline for observational studies.

The Internal Revenue Service (IRS) requires all nonprofit entities to report detailed financial information annually, including income statements, balance sheets, and spending on key line items, such as salaries and various community benefits. Importantly, Form 990 also requires nonprofits to detail their 5 largest external contracts, which may include contracts with management consultants. Form 990 data from 2009 to 2023 were obtained from a commercial vendor, Candid.16

Medicare Cost Reports

Hospitals accepting Medicare submit cost reports to Medicare annually. These filings are publicly available and include detailed financial and operational data, such as information on utilization, discharges, revenues, unreimbursed costs, charges, and expenses. This study used Medicare cost report data from 2006 to 2023, compiled by RAND.17

Hospital Consumer Assessment of Healthcare Providers and Systems

This study used data from the 2009-2023 Hospital Consumer Assessment of Healthcare Providers and Systems (HCAHPS), which surveys patients about their experience during an inpatient stay.18

This study used hospital claims for 100% of Medicare fee-for-service beneficiaries between 2009 and 2019 from the Medicare Provider Analysis and Review (MedPAR) files and patient-level enrollment and demographic information from the Medicare Beneficiary Summary Files. We also identified each beneficiary’s comorbidities from the MedPAR files.

The S&P Capital IQ dataset was used to collect financial data and market intelligence for businesses from 2009 to 2023.

We extracted the following annual financial and operational measures from Medicare cost reports: net patient revenue, operating expenses, total margin, operating margin, cash flow margin, current ratio (current assets/current liabilities), days’ cash on hand, fixed assets (land, buildings, and equipment), average inpatient length of stay, total inpatient days, Medicaid inpatient days, average worker salary, and number of employees on payroll.

We extracted the following operational outcomes from IRS Form 990 data: chief executive officer (CEO) salary, average salary of the top 5 executives, bad debt, total charity care, and total community benefits. We extracted 2 operational outcomes from the HCAHPS data: overall patient experience score and patient recommendation score. Finally, we used Medicare claims to construct 30-day mortality and readmission outcomes for patients admitted into the study hospitals with a primary diagnosis of acute myocardial infarction, stroke, or pneumonia.

Identifying Management Consulting Contracts

IRS Form 990 data were identified for 2343 nonprofit hospitals in the US (eAppendix 1 in Supplement 1). Each Form 990 includes information on the 5 highest compensated external contractors that received more than $100 000, including the amount paid and a description of the services provided. Nearly 80% of hospital-years in the sample indicate 5 or fewer contracts exceeding the $100 000 threshold. For these, the Form 990 captures all contracts above the threshold. For the remainder, there may be some management consulting contracts we did not observe because they are outside the 5 largest.

We then used information from S&P Capital IQ to determine which contractors were management consultants (eAppendix 2 in Supplement 1). The IRS Form 990 also includes a short description of each contract, which was used to exclude contracts with management consultants that were definitively not for management consulting services. For example, contracts with descriptions focused entirely on accounting and auditing services were excluded. Data were also collected on other types of consultants, such as human resources consultants.

Drawing on broader management theory for why firms hire management consultants (eTable 1 in Supplement 1), we aimed to characterize the specific objective of these contracts into 1 or more of 5 categories: improving quality of care, reorganizing staffing, enhancing financial performance, integrating or advancing technological capabilities, and assisting with a merger or acquisition (eTable 2 in Supplement 1). However, as IRS Form 990 data include only a short description of each contract, we looked to news stories, public relations material, and publicly available hospital records to characterize the purpose of each contract.

Specifically, 1 analyst conducted structured web searches using both traditional search engines and artificial intelligence search tools (deep research) to identify publicly available information on the services provided (eAppendix 3 in Supplement 1). The analyst verified all information obtained by deep research against original sources. A second analyst then validated all categorizations. For contracts that could not be characterized through this process, a second analyst manually classified contract service descriptions using IRS Form 990. Classifications were independently cross-validated by a third analyst.

We used a stacked difference-in-differences estimator to assess the impact of management consultants on hospital financial, operational, and quality-of-care outcomes (eAppendix 4 in Supplement 1). This approach relies on the parallel-trends assumption that the average trends followed by matched hospitals not using management consultants approximate the average trends that hospitals using management consultants would have followed had they not used management consultants. The treatment event was defined as the first year in which a hospital was observed to contract with a management consulting firm.

We excluded treatment events in 2009 to ensure that each treatment event has at least 1 pre-event year without a management consulting contract. We also excluded treatment events in 2023 to ensure that each treatment event had at least 1 post–event year observation. We included hospitals’ observations from 4 years prior to each treatment event until 4 years after the treatment event (eAppendix 5 in Supplement 1).
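The event-window construction described above can be illustrated with a minimal sketch. It assumes a simple dictionary-based panel and replaces the regression estimator with a pooled 2x2 contrast of group means; the function names, data layout, and toy values are hypothetical and are not taken from the study (the actual specification is in eAppendix 4 and eAppendix 5 in Supplement 1).

```python
from statistics import mean

WINDOW = 4  # years kept before and after each treatment event, per the text


def build_stack(panel, events, matches):
    """Stack one sub-panel per treatment event.

    panel:   {(hospital_id, year): outcome}
    events:  {treated_hospital_id: first_contract_year}
    matches: {treated_hospital_id: [control_hospital_ids]}
    Returns rows of (stack_id, hospital_id, treated, event_time, outcome).
    """
    rows = []
    for treated_id, event_year in events.items():
        members = [(treated_id, 1)] + [(c, 0) for c in matches.get(treated_id, [])]
        for hospital_id, treated in members:
            for k in range(-WINDOW, WINDOW + 1):
                outcome = panel.get((hospital_id, event_year + k))
                if outcome is not None:  # missing hospital-years are skipped
                    rows.append((treated_id, hospital_id, treated, k, outcome))
    return rows


def did_contrast(rows):
    """Pooled 2x2 difference-in-differences on group means, skipping event time 0."""
    def cell(treated, post):
        vals = [y for _, _, t, k, y in rows
                if t == treated and k != 0 and (k > 0) == post]
        return mean(vals)
    return (cell(1, True) - cell(1, False)) - (cell(0, True) - cell(0, False))


# Toy data (hypothetical): hospital "A" first contracts in 2015; "B" never does.
panel = {}
for year in range(2011, 2020):
    panel[("B", year)] = 10.0 + 0.5 * (year - 2011)        # control trend
    bump = 2.0 if year > 2015 else 0.0                      # effect after the event
    panel[("A", year)] = 12.0 + 0.5 * (year - 2011) + bump  # same trend plus effect

rows = build_stack(panel, {"A": 2015}, {"A": ["B"]})
estimate = did_contrast(rows)
```

Because both toy hospitals share the same underlying trend, the contrast recovers the built-in effect; with real data, the estimate is only as credible as the parallel-trends assumption.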

To construct a valid control group, a set of matched hospitals was identified that did not use management consultants during the study period. Specifically, we identified up to 2 nonprofit hospitals not using management consultants for each hospital using management consultants by matching on total beds, total inpatient discharges, and operating margin at the start of the event window (event time −4) (eAppendix 6 in Supplement 1).
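As a rough illustration of this matching step, the sketch below selects up to 2 nearest comparison hospitals on the 3 named covariates. The distance metric (Euclidean on standardized covariates) and matching with replacement are assumptions made for illustration; the article's actual procedure is described in eAppendix 6 in Supplement 1, and all names and values here are hypothetical.

```python
from statistics import mean, pstdev


def match_controls(treated, pool, covariates, k=2):
    """Return, for each treated hospital, the ids of its k nearest pool hospitals.

    treated, pool: {hospital_id: {covariate_name: value}}
    Distance is Euclidean on covariates standardized over treated + pool
    (an assumption; the source does not state the metric in the main text).
    """
    everyone = list(treated.values()) + list(pool.values())
    scale = {}
    for c in covariates:
        vals = [h[c] for h in everyone]
        scale[c] = (mean(vals), pstdev(vals) or 1.0)  # guard against zero spread

    def z(h):
        return [(h[c] - scale[c][0]) / scale[c][1] for c in covariates]

    matches = {}
    for t_id, t in treated.items():
        zt = z(t)
        ranked = sorted(
            pool,
            key=lambda p_id: sum((a - b) ** 2 for a, b in zip(zt, z(pool[p_id]))),
        )
        matches[t_id] = ranked[:k]  # with replacement: a control may be reused
    return matches


# Hypothetical covariate values at event time -4.
COVARIATES = ["beds", "discharges", "op_margin"]
treated = {"T1": {"beds": 200, "discharges": 9000, "op_margin": 1.0}}
pool = {
    "C1": {"beds": 210, "discharges": 9500, "op_margin": 1.5},
    "C2": {"beds": 50, "discharges": 2000, "op_margin": 4.0},
    "C3": {"beds": 190, "discharges": 8800, "op_margin": 0.5},
    "C4": {"beds": 600, "discharges": 30000, "op_margin": -2.0},
}
matches = match_controls(treated, pool, COVARIATES)
```

Standardizing first keeps a covariate with a large numeric range, such as discharges, from dominating the distance.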

We identified 306 nonprofit hospitals with treatment events (ie, instances in which a hospital contracted with management consultants for the first time) and matched these cases to 513 hospital observations (among 404 unique hospitals) that did not use management consultants from 2009 to 2023. Following convention, all financial and operational measures were winsorized at the 2.5th and 97.5th percentiles.19,20
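Winsorizing replaces values beyond chosen percentiles with the percentile values themselves rather than dropping them. A minimal sketch follows, assuming linear-interpolation percentiles (the article does not specify the percentile convention) and using hypothetical margin values.

```python
def percentile(sorted_vals, q):
    """Linear-interpolation percentile (q in [0, 100]) of a sorted list."""
    pos = (len(sorted_vals) - 1) * q / 100.0
    lo = int(pos)
    hi = min(lo + 1, len(sorted_vals) - 1)
    return sorted_vals[lo] + (sorted_vals[hi] - sorted_vals[lo]) * (pos - lo)


def winsorize(values, lower=2.5, upper=97.5):
    """Clamp values outside the given percentiles to those percentiles."""
    s = sorted(values)
    lo_cut, hi_cut = percentile(s, lower), percentile(s, upper)
    return [min(max(v, lo_cut), hi_cut) for v in values]


# Hypothetical operating margins (percentage points) with 2 extreme values.
margins = [-45.0] + [float(x) for x in range(-5, 6)] + [60.0]
clipped = winsorize(margins)
```

Only the 2 extreme observations are pulled inward; the interior values and the sample size are unchanged.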

When modeling quality-of-care outcomes at the patient level, we adjusted for patient sex, age, race, ethnicity, dual eligibility status, and Charlson-Elixhauser Comorbidity Index (eAppendix 7 in Supplement 1).

For the majority of the analysis of financial and operational outcomes, we applied a log transformation to outcomes and report regression estimates as percentage changes. For some outcomes, such as profit margins or other measures with negative values, we did not apply a log transformation, as doing so would be inappropriate.
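The conversion from a log-scale coefficient to a percentage change can be sketched as follows. The helper names are hypothetical; the numeric inputs are estimates reported in this article, back-transformed here only to show the arithmetic.

```python
import math


def log_coef_to_pct(beta):
    """Percentage change implied by a coefficient on a log-transformed outcome."""
    return (math.exp(beta) - 1.0) * 100.0


def pct_to_log_coef(pct):
    """Inverse of log_coef_to_pct."""
    return math.log(1.0 + pct / 100.0)


# Reported net patient revenue change of -2.22% implies this log-scale coefficient.
beta_revenue = pct_to_log_coef(-2.22)

# Reported fixed-assets estimate and 95% CI (2.05%; -6.54% to 11.42%).
# On the log scale the interval should be roughly symmetric around the estimate.
fa_point = pct_to_log_coef(2.05)
fa_low, fa_high = pct_to_log_coef(-6.54), pct_to_log_coef(11.42)
fa_midpoint = (fa_low + fa_high) / 2.0
```

This is why the reported percentage intervals look asymmetric around their point estimates: they are symmetric before exponentiation, not after.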

As a sensitivity analysis, we performed the staggered difference-in-differences analyses using a Callaway and Sant’Anna estimator.21 The analysis was also performed without matching, using matching based on the full pretreatment period rather than only event time −4, and after eliminating observations during the COVID-19 pandemic. We also demonstrated the robustness of the findings to excluding large firms known for providing accounting services in addition to management consulting services, as well as to limiting the sample to short-term general hospitals. We also performed various subgroup analyses, including based on the intensity of the contract (defined as contract expenditure per hospital bed) and by the objective of the contract. Because sample sizes for analyses based on the objective of the contract were particularly small, P values were computed using a permutation test.
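For small subgroups, a permutation test compares the observed estimate with the distribution obtained by reshuffling treatment labels. The sketch below is a generic version applied to per-hospital outcome changes; the test statistic, the number of permutations, and the data are assumptions for illustration and may differ from the procedure used in the supplement.

```python
import random
from statistics import mean


def permutation_p_value(changes, treated, n_perm=5000, seed=0):
    """Two-sided permutation P value for a difference in mean outcome change.

    changes: per-hospital post-minus-pre change in the outcome
    treated: parallel list of 0/1 treatment flags
    """
    def diff(flags):
        t = [c for c, f in zip(changes, flags) if f]
        u = [c for c, f in zip(changes, flags) if not f]
        return mean(t) - mean(u)

    observed = diff(treated)
    rng = random.Random(seed)
    flags = list(treated)
    extreme = 0
    for _ in range(n_perm):
        rng.shuffle(flags)  # reassign labels, keeping group sizes fixed
        if abs(diff(flags)) >= abs(observed) - 1e-12:
            extreme += 1
    return (extreme + 1) / (n_perm + 1)  # add-one correction avoids P = 0


# Hypothetical data: a clear effect, then a noisy null.
p_strong = permutation_p_value([5.0] * 4 + [0.0] * 8, [1] * 4 + [0] * 8)
p_null = permutation_p_value(
    [0.4, -0.2, 0.1, 0.3, -0.1, 0.0, 0.2, -0.3], [1, 1, 1, 0, 0, 0, 0, 0]
)
```

The approach makes no distributional assumption about the estimate, which is its appeal when subgroup samples are too small for asymptotic standard errors to be trusted.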

Reported confidence intervals and P values were not adjusted for multiple comparison testing. However, the statistical significance of estimates is separately reported based on the Holm-Bonferroni adjustment for multiple comparisons using 26 tests where α = .05.

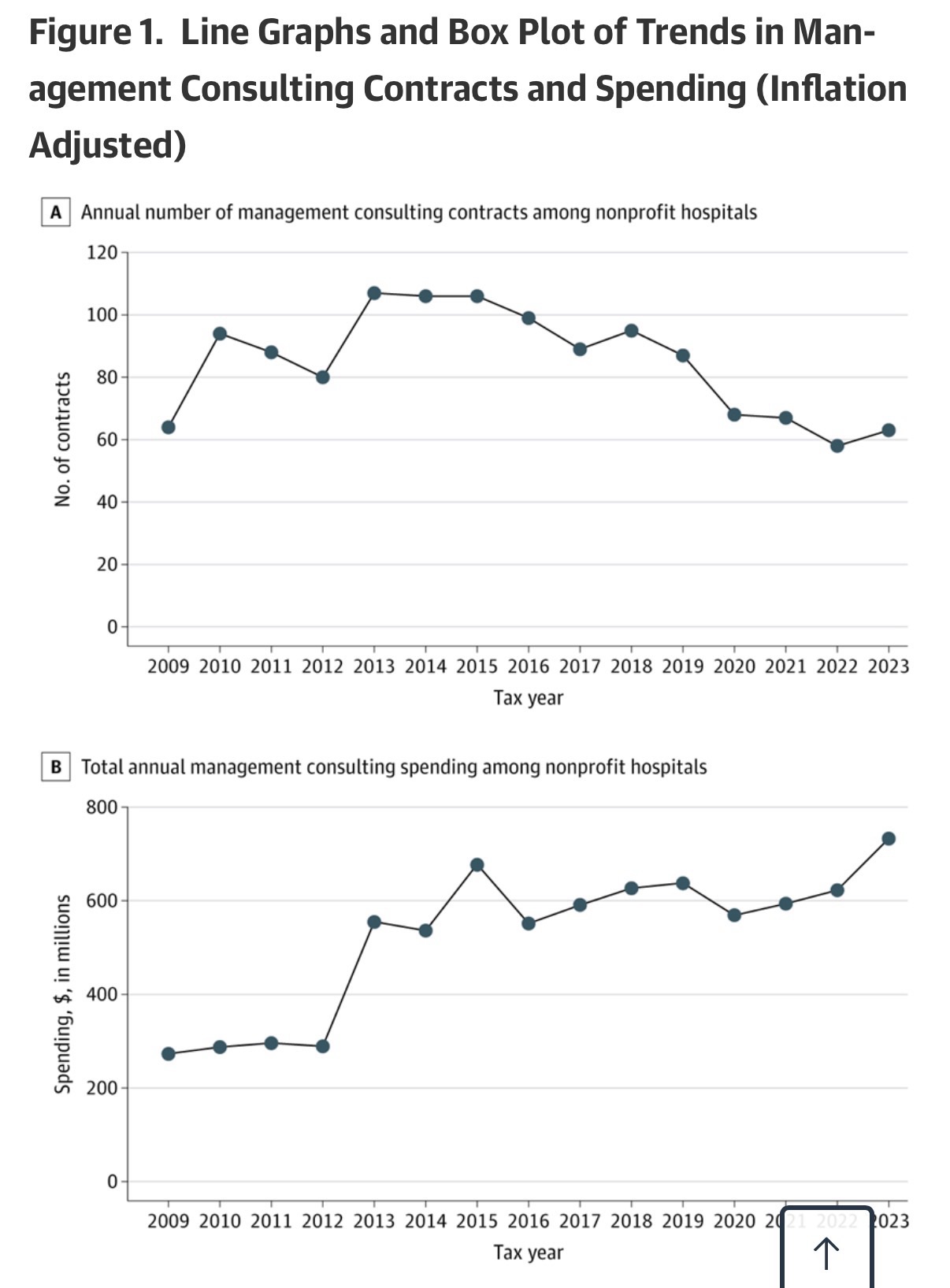

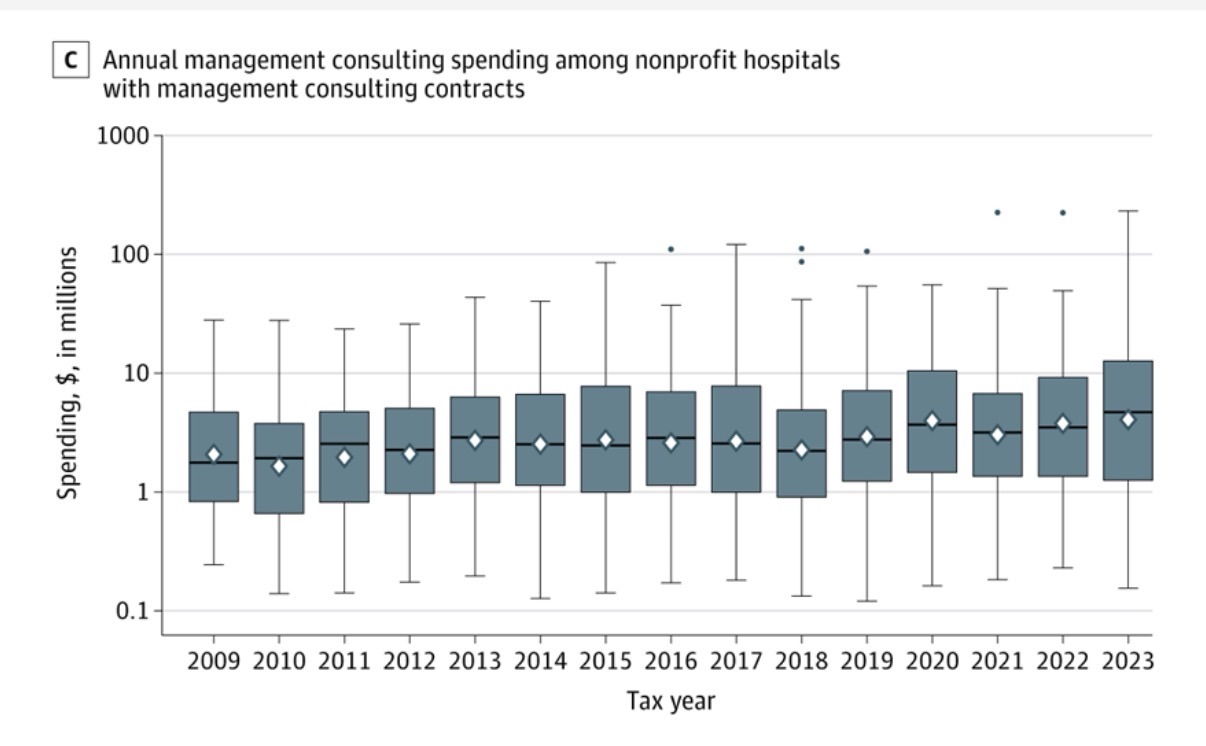

In total, nonprofit hospitals in the US (n = 2343) spent $732.9 million on management consultants in 2023, up from $273.2 million in 2009 (Figure 1). Approximately 21% of nonprofit hospitals had a management consulting contract during the study time frame, with an average of 77.5 nonprofit hospitals using management consultants each year (eFigure 1 in Supplement 1). On average, these hospitals paid $15.7 million (SD, $46.1 million) for management consulting services, with each contract costing an average of $6.2 million (SD, $14.8 million). Management consulting contracts lasted an average of 1.4 years (SD, 0.8 years), and the median number of management consulting contracts during the study period was 1.

In panel A, dots connected by the line represent the total number of contracts active in each year, including both newly initiated contracts and contracts that remained ongoing from previous years. In panel C, diamonds indicate means, horizontal lines indicate medians, box tops and bottoms indicate IQRs, vertical whiskers extend to the largest and smallest observations within 1.5 times the IQRs, and observations beyond that are shown as individual points. In panels B and C, spending values are inflation adjusted to 2023 US dollars using the Consumer Price Index.

Annual spending on consultants other than management consultants also grew over the study period, from $635.4 million in 2009 to almost $1.7 billion in 2023, totaling $17.2 billion across the entire study time frame (eFigure 2 in Supplement 1). The management consulting firms that were paid the most during the study period included Deloitte ($1.2 billion), Accenture ($1.2 billion), Huron Consulting Group ($1.0 billion), PricewaterhouseCoopers ($0.8 billion), Premier ($0.6 billion), and McKinsey & Company ($0.4 billion) (eFigure 3 in Supplement 1).

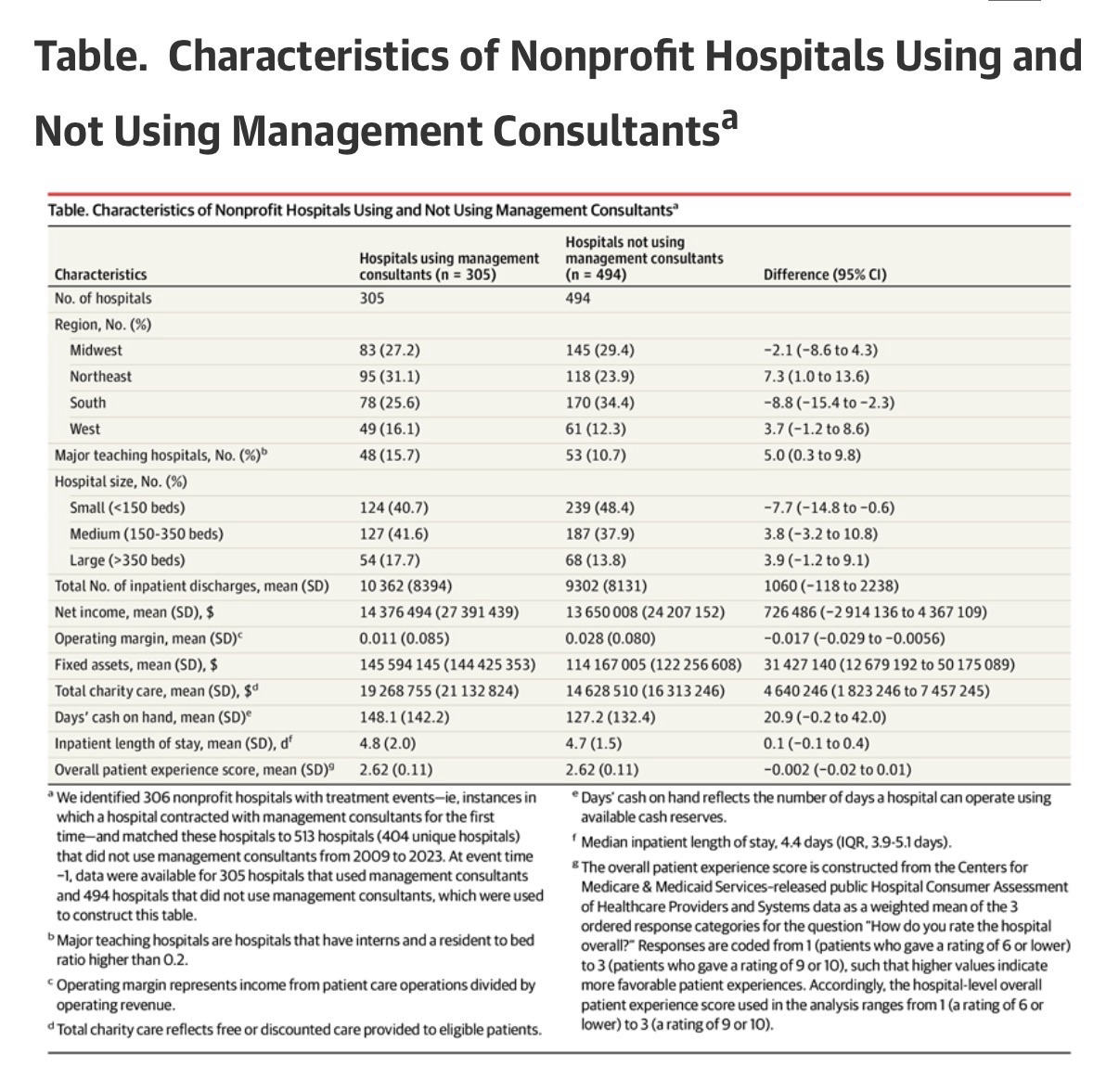

Hospitals using management consultants were geographically dispersed across the country: Midwest (27.2%), Northeast (31.1%), South (25.6%), and West (16.1%) (Table). On average, these hospitals were similar to the matched sample of hospitals not using management consultants on some dimensions, such as net income ($14.4 million vs $13.7 million, respectively; P = .70) and inpatient length of stay (4.8 vs 4.7 days, respectively; P = .36), but differed on other dimensions, such as operating margin (1.1% vs 2.8%, respectively; P = .004), charity care ($19.3 million vs $14.6 million, respectively; P = .001), and fixed assets ($145.6 million vs $114.2 million, respectively; P = .001).

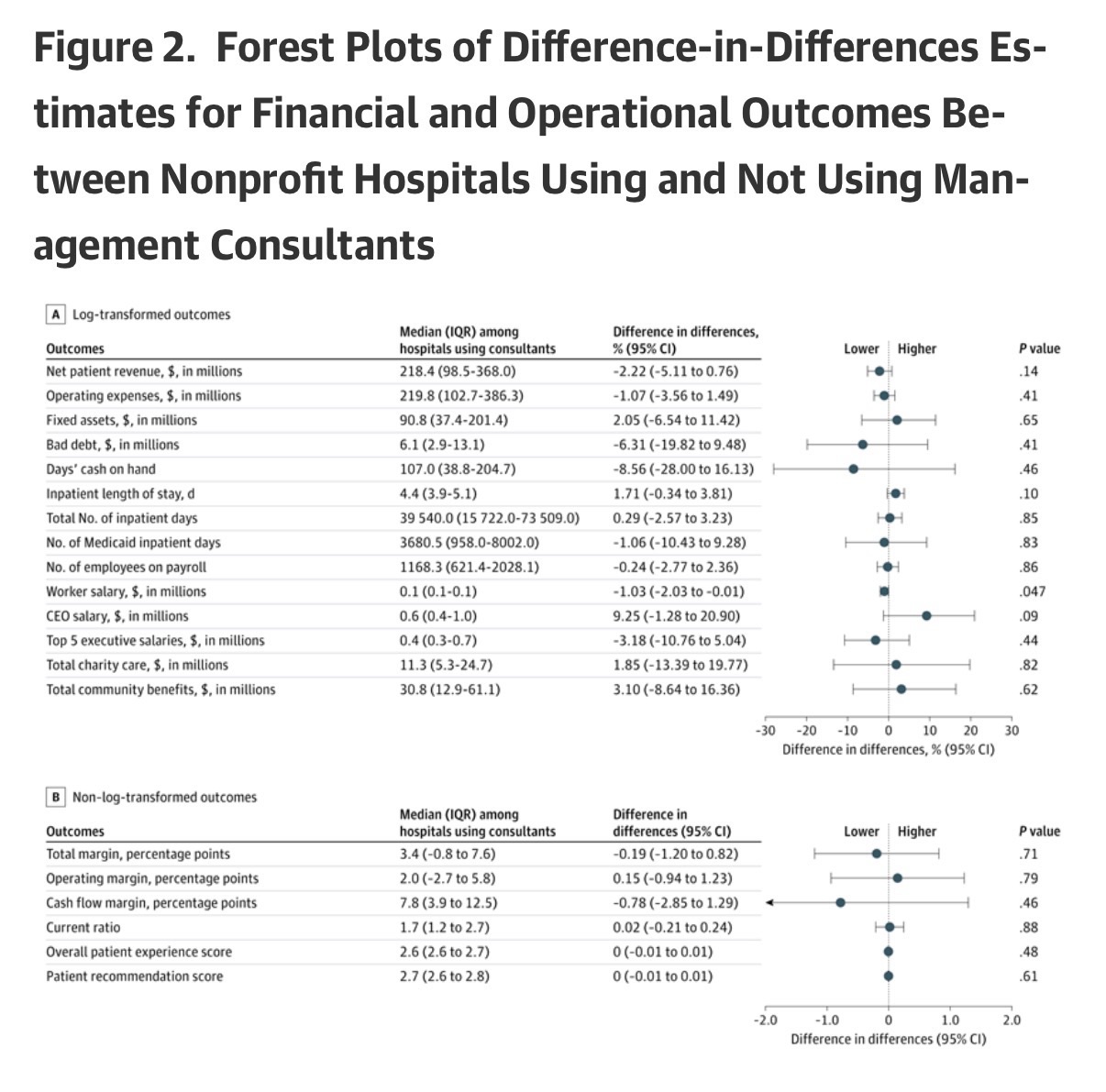

The relative changes between hospitals using management consultants and those not using management consultants for all financial outcome measures were not statistically significant, including net patient revenue (−2.22%; 95% CI, −5.11% to 0.76%; P = .14), operating expenses (−1.07%; 95% CI, −3.56% to 1.49%; P = .41), fixed assets (2.05%; 95% CI, −6.54% to 11.42%; P = .65), bad debt (−6.31%; 95% CI, −19.82% to 9.48%; P = .41), days’ cash on hand (−8.56%; 95% CI, −28.00% to 16.13%; P = .46), total margin (−0.19 percentage points; 95% CI, −1.20 to 0.82 percentage points; P = .71), operating margin (0.15 percentage points; 95% CI, −0.94 to 1.23 percentage points; P = .79), cash flow margin (−0.78 percentage points; 95% CI, −2.85 to 1.29 percentage points; P = .46), and current ratio (0.02; 95% CI, −0.21 to 0.24; P = .88) (Figure 2).

After Holm-Bonferroni adjustment for multiple comparisons, no difference-in-differences estimate remained statistically significant at α = .05. Coefficients for log-transformed outcomes (panel A) were exponentiated, 1 was subtracted, and the results were multiplied by 100 and interpreted as percentage changes. Coefficients for non–log-transformed outcomes (panel B) were interpreted as estimated relative percentage point or unit changes. Median (IQR) values are reported for hospitals using consultants (treated) at relative time −1 to contextualize the magnitude of the estimated effects. Event time 0 (the treatment year) was excluded from pooled estimates to avoid partial exposure. Net patient revenue indicates total revenue from patient care services after contractual adjustments. Operating expenses reflect total costs of hospital operations. Fixed assets include land, buildings, and equipment (net of depreciation). Bad debt reflects unpaid patient care charges deemed uncollectible. Days’ cash on hand reflects the number of days a hospital can operate using available cash reserves. Inpatient length of stay represents the number of days patients remain hospitalized per admission. Total inpatient days represent aggregate days of inpatient care; Medicaid inpatient days reflect the subset attributable to Medicaid patients. Worker salary equals total salary expense divided by number of employees. Data on chief executive officer (CEO) salaries and average top 5 executive salaries were obtained from Internal Revenue Service Form 990. Total charity care reflects free or discounted care provided to eligible patients; total community benefits reflect total spending on community-oriented programs including charity care. Total margin represents overall net income divided by total revenue, and operating margin represents income from patient care operations divided by operating revenue. Cash flow margin reflects operating cash flow relative to revenue. Current ratio equals current assets divided by current liabilities and measures short-term liquidity.

Relative changes for operational outcomes were not statistically significant for almost all outcomes, including inpatient length of stay (1.71%; 95% CI, −0.34% to 3.81%; P = .10), total inpatient days (0.29%; 95% CI, −2.57% to 3.23%; P = .85), Medicaid inpatient days (−1.06%; 95% CI, −10.43% to 9.28%; P = .83), number of employees on payroll (−0.24%; 95% CI, −2.77% to 2.36%; P = .86), average CEO salary (9.25%; 95% CI, −1.28% to 20.90%; P = .09), average top 5 executive salary (−3.18%; 95% CI, −10.76% to 5.04%; P = .44), charity care (1.85%; 95% CI, −13.39% to 19.77%; P = .82), community benefit (3.10%; 95% CI, −8.64% to 16.36%; P = .62), overall patient experience score (0.00; 95% CI, −0.01 to 0.01; P = .48), and patient recommendation score (0.00; 95% CI, −0.01 to 0.01; P = .61). The only statistically significant change was a small decline in average worker salary (−1.03%; 95% CI, −2.03% to −0.01%; P = .047) (Figure 2).

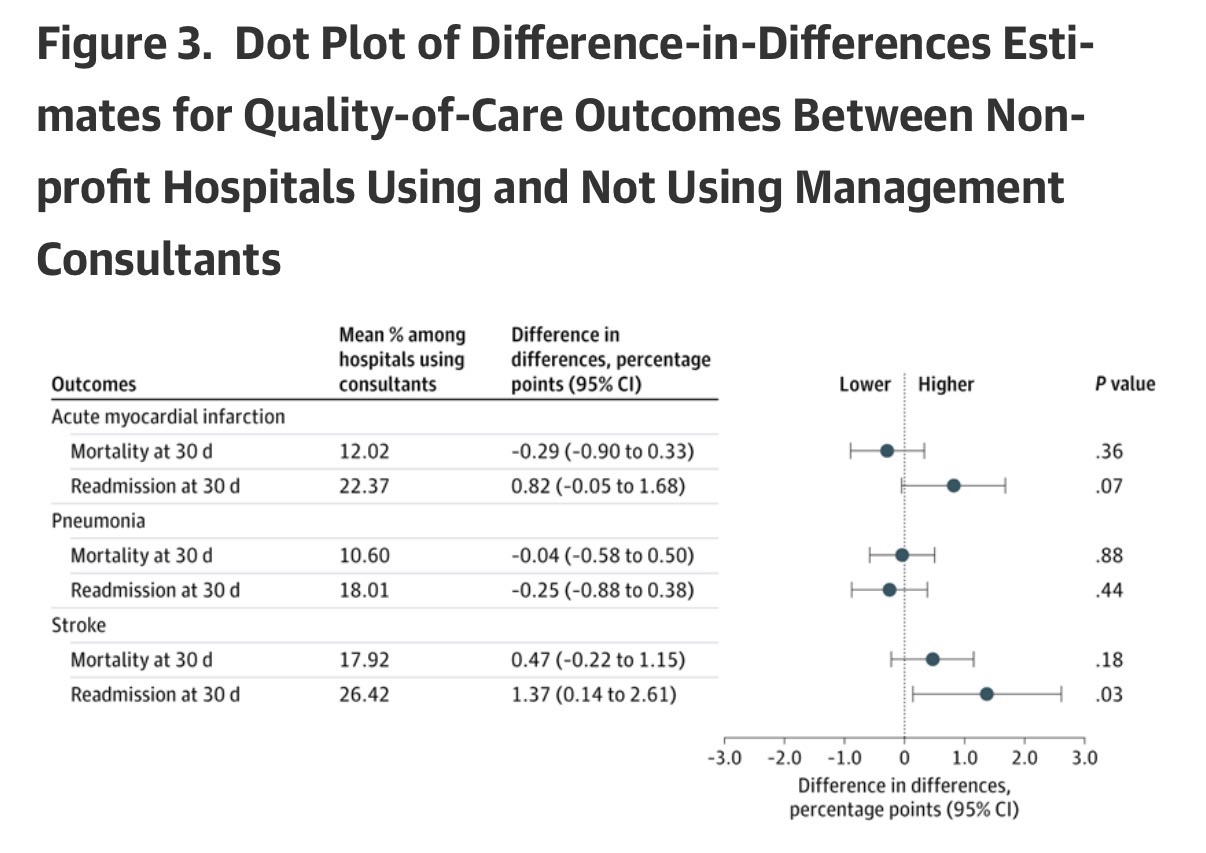

The relative changes in most patient health outcomes were not statistically significant, including 30-day mortality (−0.29 percentage points; 95% CI, −0.90 to 0.33 percentage points; P = .36) and readmission (0.82 percentage points; 95% CI, −0.05 to 1.68 percentage points; P = .07) for patients with acute myocardial infarction, 30-day mortality (−0.04 percentage points; 95% CI, −0.58 to 0.50 percentage points; P = .88) and readmission (−0.25 percentage points; 95% CI, −0.88 to 0.38 percentage points; P = .44) for patients with pneumonia, and 30-day mortality (0.47 percentage points; 95% CI, −0.22 to 1.15 percentage points; P = .18) for patients with stroke (Figure 3). The only notable exception was a relative increase in 30-day readmission for patients with stroke (1.37 percentage points; 95% CI, 0.14 to 2.61 percentage points; P = .03) (Figure 3).

After Holm-Bonferroni adjustment for multiple comparisons, no difference-in-differences estimate remained statistically significant at α = .05. Estimates represent absolute percentage point changes. Mean percentages are reported for hospitals using management consultants (treated) at relative time −1 to contextualize the magnitude of the estimated effects. Event time 0 (the treatment year) was excluded from pooled estimates to avoid partial exposure.

None of the financial, operational, or quality-of-care measures remained statistically significant after applying the Holm-Bonferroni method for 26 outcomes at the significance level of α = .05.
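The Holm-Bonferroni step-down procedure can be sketched in a few lines. The P values listed are the unadjusted values reported in this article's Results; treating them as the complete set of 26 tests is an assumption based on their count, and the function name is hypothetical.

```python
def holm_reject(p_values, alpha=0.05):
    """Return a list of booleans: True where Holm-Bonferroni rejects the null."""
    m = len(p_values)
    order = sorted(range(m), key=lambda i: p_values[i])
    reject = [False] * m
    for rank, i in enumerate(order):
        # The smallest P value is compared with alpha/m, the next with alpha/(m-1), ...
        if p_values[i] <= alpha / (m - rank):
            reject[i] = True
        else:
            break  # step-down: stop at the first non-rejection
    return reject


# Unadjusted P values as reported in the text.
reported = [
    0.14, 0.41, 0.65, 0.41, 0.46, 0.71, 0.79, 0.46, 0.88,         # financial
    0.10, 0.85, 0.83, 0.86, 0.09, 0.44, 0.82, 0.62, 0.48, 0.61,   # operational
    0.047,                                                        # worker salary
    0.36, 0.07, 0.88, 0.44, 0.18, 0.03,                           # quality of care
]
any_significant = any(holm_reject(reported))
first_threshold = 0.05 / len(reported)
```

With 26 tests, the smallest P value must fall at or below roughly .0019 to survive adjustment, so the nominally significant stroke readmission (P = .03) and worker salary (P = .047) estimates do not.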

We also estimated event studies for more than 2 dozen financial, operational, and quality-of-care measures and did not find evidence of systematic divergence in trends prior to treatment (eFigures 4 and 5 in Supplement 1). This lends credence to the parallel-trends assumption underlying the difference-in-differences estimator. We also did not find evidence of preceding shocks that might bias estimates, such as CEO or board turnover or mergers/acquisitions (eFigure 4 in Supplement 1).

Estimates were also qualitatively similar when using a Callaway and Sant’Anna estimator (eFigures 6 and 7 in Supplement 1), estimating without matching (eFigures 8 and 9 in Supplement 1), matching on the average of all preperiods (event times −1 to −4) (eFigures 10 and 11 in Supplement 1), eliminating observations during the COVID-19 pandemic (eFigure 12 in Supplement 1), excluding management consulting firms known for providing accounting services (eFigures 13 and 14 in Supplement 1), restricting the sample to only short-term general hospitals (eFigures 15 and 16 in Supplement 1), and stratifying by the size of the contract (eFigures 17-20 in Supplement 1).

We were able to identify the nature of 108 management consulting contracts. Of these contracts, 64.8% were aimed at enhancing financial performance, 27.8% at integrating or advancing technological capabilities, 24.1% at improving quality of care, 13.9% at reorganizing staffing, and 13.0% at assisting in a merger or acquisition. (These objectives were not mutually exclusive.) Estimates were mostly null regardless of objective (eFigures 21 and 22 in Supplement 1).

Hospitals’ increasing use of management consultants is emblematic of the health care industry’s trend toward corporatized behavior.22 This study shows that these practices are common even among nonprofit hospitals, at least 21.3% of which have paid for management consulting contracts in recent years. Between 2009 and 2023, these nonprofit hospitals spent $7.8 billion on management consulting services, with many contracts aimed at improving financial performance. Such large outlays have real opportunity costs: for example, among nonprofit hospitals that hired management consulting firms, the average expenditure of $15.7 million could alternatively fund the annual salaries of approximately 46 hospitalists or 167 registered nurses.23,24
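The figures in this paragraph can be cross-checked with simple arithmetic. The sketch below uses only numbers stated in the article; the per-position salaries it derives are implied by those numbers and are not quoted from the cited salary sources.

```python
N_HOSPITALS = 2343        # nonprofit hospitals in the sample
SHARE_USING = 0.213       # share with a management consulting contract
MEAN_SPEND = 15.7e6       # average spending per hospital using consultants (USD)
TOTAL_SPEND = 7.8e9       # reported as "more than $7.8 billion" (USD)

# Hospitals using consultants times average spending should approximate the total.
hospitals_using = SHARE_USING * N_HOSPITALS
implied_total = hospitals_using * MEAN_SPEND
relative_gap = abs(implied_total - TOTAL_SPEND) / TOTAL_SPEND

# Annual salaries implied by the opportunity-cost comparison (derived, not sourced).
implied_hospitalist_salary = MEAN_SPEND / 46
implied_nurse_salary = MEAN_SPEND / 167
```

The implied total lands within about 1% of the reported aggregate, which suggests the headline figures are internally consistent.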

Given these hospitals’ nonprofit status and their importance to communities, there is a strong public interest in evaluating the effects of their spending on management consultants. We did not find evidence of clear, statistically discernible benefits or harms to hospitals’ financial, operational, or quality-of-care outcomes from these major expenditures. It may be that changes induced by management consultants are simply too small to detect, that they affect dimensions of performance that we did not observe, or that the activities of these contracts are sufficiently varied as to make effects on any individual dimension difficult to discern. Alternatively, it may be that management consultants are primarily validating decisions or otherwise making recommendations that are very similar to what the hospital would have done without the contract.

Future research may build on this study to improve understanding of the role of management consultants in the health care industry. First, qualitative research is required to understand the precise nature of these contracts and to determine appropriate measures of benefit. Second, while large effects were ruled out in this study, future research might aim to rule out small effects as well. Third, future research might aim to study the use of management consultants by for-profit hospitals or in other parts of the health care industry, such as by local, state, and federal health agencies. Finally, this study examines only management consulting contracts, which represent just 31% of the nearly $2 billion that nonprofit hospitals spend on consultants each year. Examining other consulting contracts may be an important area for future research.

There are several limitations in this study. First, hospitals using management consultants may be on a different outcomes trajectory than hospitals that do not use management consultants. While event-study estimates do not exhibit concerning pretrends, sharp contemporaneous shocks could bias the estimates. We did not find evidence that mergers or acquisitions, CEO turnover, or board changes in the preperiod drove the results, but unobserved shocks cannot be ruled out. Notably, however, for any large bias-inducing shocks to rationalize the estimated null effects, they would need to be systematically correlated with management consultant use in a way that exactly offsets true effects.

Second, we examined only a subset of the outcomes that management consultants might plausibly affect. While this study analyzed extensive outcomes, the possibility of effects on outcomes not measured or analyzed cannot be ruled out.

Third, we studied only hospitals’ first observed contracts with management consultants. Some hospitals have multiple contracts that may occur simultaneously or in sequence. As such, the estimates are most precisely interpreted as relative changes associated with nonprofit hospitals starting to use the services of management consultants. Importantly, the results are specific to nonprofit hospitals and may not generalize to other health care settings.

Fourth, the data include only the 5 highest-paid contracts for each hospital. Although nearly 80% of hospital-years in the sample indicate 5 or fewer contracts, the remaining 20% may have some smaller management consulting contracts that were not observed.

Fifth, there was very little visibility into the exact nature of each contract. We were able to obtain some information on the objectives of 108 contracts but did not discern a clear pattern in estimates across objectives. Most notably, even for contracts aimed at improving financial performance—the largest subgroup that included 44 hospitals—we did not observe systematic differential effects, including for financial measures. In general, however, we urge caution in interpreting these subgroup analyses, as many subgroup samples were small.

Sixth, performing an extensive analysis on a modest number of contracts creates some challenges in interpreting estimates. For some outcomes, only large effects could be ruled out. For others, some differences were observed in magnitude, direction, or significance across specifications or subsamples, as is expected when testing many hypotheses and specifications in finite samples. In line with the primary findings, the most consistent pattern across sensitivity and subgroup analyses was a lack of sizable, clear, or systematic effect.

Conclusions

Nonprofit hospitals spend considerable amounts of money on management consultants, but this study found no clear evidence of meaningful changes in hospital finances, operations, or quality of care. The findings suggest the need for further research and for careful examination of the widespread use of management consultants by health care organizations.

Article Information

Accepted for Publication: March 23, 2026.

Published Online: May 4, 2026. doi:10.1001/jama.2026.5027

Concept and design: Bruch, Fang, Zeng, Gandhi.

Acquisition, analysis, or interpretation of data: All authors.

Drafting of the manuscript: Bruch, Zeng, Gandhi.

Critical review of the manuscript for important intellectual content: All authors.

Statistical analysis: All authors.

Obtained funding: Bruch.

Administrative, technical, or material support: Bruch, Zeng, Parthan, Gandhi.

Supervision: Bruch, Gandhi.

Conflict of Interest Disclosures: Dr Bruch reported receipt of personal fees from the Robert Wood Johnson Foundation; receipt of grants from the Robert Wood Johnson Foundation, Rx Foundation, and Commonwealth Fund; receipt of personal fees from Adasina Social Capital through a grant from the Robert Wood Johnson Foundation; and provision of freelance consulting to McKinsey & Company from 2014 to 2017. No other disclosures were reported.

Data Sharing Statement: See Supplement 2.

Additional Information: Perplexity AI and ChatGPT were used during 2025 to identify additional online information on nonprofit hospital contracts. Dr Bruch takes responsibility for the integrity of the content generated.

References

Grand View Research. Healthcare Consulting Services Market Size, Share and Trends Analysis Report, 2024-2030. Grand View Research; 2024.

Custom Market Insights. Latest global management consulting market size/share worth USD 8,97,442.21 million by 2034 at a 6.56% CAGR. Yahoo Finance. Published February 25, 2025. Accessed February 20, 2026. https://finance.yahoo.com/news/latest-global-management-consulting-market-083000592.html

Bruch JD, Feldman J, Song Z. Use of private management consultants in public health. BMJ. 2021;374:n2145. doi:10.1136/bmj.n2145

Alderman L. France hired McKinsey for its Covid vaccine rollout. Then came the questions. New York Times. Published February 22, 2021. Accessed February 20, 2026. https://www.nytimes.com/2021/02/22/business/france-mckinsey-consultants-covid-vaccine.html

Forsythe M, Bogdanich W. McKinsey settles for nearly $600 million over role in opioids crisis. New York Times. Updated November 5, 2021. Accessed February 20, 2026. https://www.nytimes.com/2021/02/03/business/mckinsey-opioids-settlement.html

Ferguson C. What went wrong with America’s $44 million vaccine data system? MIT Technology Review. Published January 30, 2021. Accessed February 20, 2026. https://www.technologyreview.com/2021/01/30/1017086/cdc-44-million-vaccine-data-vams-problems/

Canato A, Giangreco A. Gurus or wizards? a review of the role of management consultants. Eur Manag Rev. 2011;8(4):231-244. doi:10.1111/j.1740-4762.2011.01021.x

Tsai TC, Jha AK, Gawande AA, Huckman RS, Bloom N, Sadun R. Hospital board and management practices are strongly related to hospital performance on clinical quality metrics. Health Aff (Millwood). 2015;34(8):1304-1311. doi:10.1377/hlthaff.2014.1282

Salehnejad R, Ali M, Proudlove NC. The impact of management practices on relative patient mortality: evidence from public hospitals. Health Serv Manage Res. 2022;35(4):240-250. doi:10.1177/09514848211068627

McConnell KJ, Lindrooth RC, Wholey DR, Maddox TM, Bloom N. Management practices and the quality of care in cardiac units. JAMA Intern Med. 2013;173(8):684-692. doi:10.1001/jamainternmed.2013.3577

Kirkpatrick I, Sturdy AJ, Reguera Alvarado N, Veronesi G. Beyond hollowing out: public sector managers and the use of external management consultants. Public Adm Rev. 2023;83(3):537-551. doi:10.1111/puar.13612

Kirkpatrick I, Sturdy AJ, Reguera Alvarado N, Blanco‐Oliver A, Veronesi G. The impact of management consultants on public service efficiency. Policy Politics. 2019;47(1):77-95. doi:10.1332/030557318X15167881150799

Sturdy AJ, Kirkpatrick I, Reguera N, Blanco‐Oliver A, Veronesi G. The management consultancy effect: demand inflation and its consequences in the sourcing of external knowledge. Public Adm. 2022;100(3):488-506. doi:10.1111/padm.12712

Bloom N, Eifert B, Mahajan A, McKenzie D, Roberts J. Does management matter? evidence from India. Q J Econ. 2013;128(1):1-51. doi:10.1093/qje/qjs044

Bijnens G, Jäger S, Schoefer B. What Does Consulting Do? NBER Working Paper 34072. National Bureau of Economic Research; 2025. Accessed February 20, 2026. https://www.nber.org/system/files/working_papers/w34072/w34072.pdf

Candid. Look up nonprofits and identify prospects with Candid’s GuideStar. Accessed November 26, 2025. https://www.guidestar.org/search

RAND. CMS hospital cost report data made easy. Accessed November 26, 2025. https://www.hospitaldatasets.org/

Chandra A, Finkelstein A, Sacarny A, Syverson C. Health care exceptionalism? performance and allocation in the US health care sector. Am Econ Rev. 2016;106(8):2110-2144. doi:10.1257/aer.20151080

Bruch JD, Gondi S, Song Z. Changes in hospital income, use, and quality associated with private equity acquisition. JAMA Intern Med. 2020;180(11):1428-1435. doi:10.1001/jamainternmed.2020.3552

Joynt KE, Orav EJ, Jha AK. Association between hospital conversions to for-profit status and clinical and economic outcomes. JAMA. 2014;312(16):1644-1652. doi:10.1001/jama.2014.13336

Callaway B, Sant’Anna PH. Difference-in-differences with multiple time periods. J Econom. 2021;225(2):200-230. doi:10.1016/j.jeconom.2020.12.001

Fox DM. Policy commercializing nonprofits in health: the history of a paradox from the 19th century to the ACA. Milbank Q. 2015;93(1):179-210. doi:10.1111/1468-0009.12109

Today’s Hospitalist. Executive Summary Report of Research Findings From the Today’s Hospitalist 2023 Compensation & Career Survey. Published 2023. Accessed February 20, 2026. https://todayshospitalist.com/wp-content/uploads/2024/06/TH-2023-Adult-Hospitalist-C-C-Exec-Summary.pdf

US Bureau of Labor Statistics. Occupational Outlook Handbook: registered nurses. Accessed November 26, 2025. https://www.bls.gov/ooh/healthcare/registered-nurses.htm

Article link: https://jamanetwork.com/journals/jama/fullarticle/2848641