Rather than destroying jobs, firms should partner with workers to augment human skills and knowledge.

Jun 1, 2026 3 minute read

Pope Leo XIV’s first encyclical, “Magnifica Humanitas: On Safeguarding the Human Person in the Time of Artificial Intelligence,” could not come at a more opportune time. His call to respect workers’ right to a voice in shaping the future of work builds on Pope Leo XIII’s historic 1891 encyclical “Rerum Novarum” and subsequent Catholic social teachings.

“Rerum Novarum,” which championed workers’ rights and unions, was the moral foundation for progressive labor legislation, including the 1935 National Labor Relations Act. That law spurred unionization’s growth from less than 10% of the workforce to a peak of a third a decade later.

U.S. workers today again need a stronger voice as AI begins to dominate workplaces. Unions again represent only about 10% of the U.S. workforce, and the big decisions that shape AI and the future of work lie well beyond the reach of most workers — union and nonunion alike.

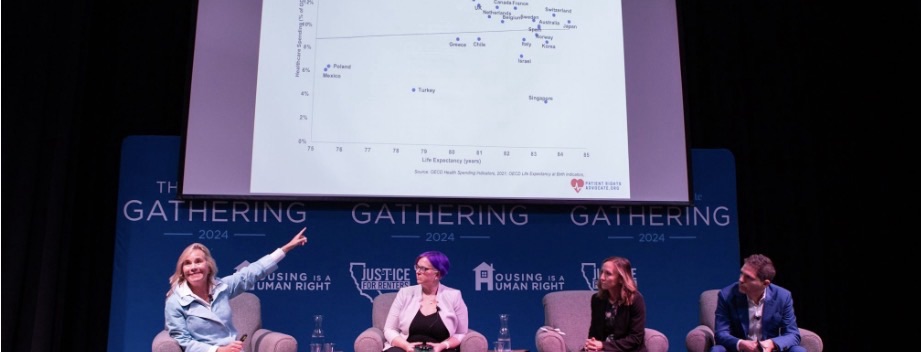

The pope is not the only person speaking out on AI and work. A distinguished panel of the National Academies of Sciences, Engineering, and Medicine (including MIT economist David Autor) has warned that we need stronger institutions and policies that give workers a voice on AI or we’ll end up with another generation of winners (the big AI developer companies) and losers (unemployed workers). Society cannot afford such consequences.

How do we give workers a voice in AI, concretely? How do we ensure workers share in whatever economic gains they help AI generate? In my direct work on worker voice and generative AI with unions, companies, and other groups, we’ve found a pathway to a seat at the table.

It begins with challenging AI developers’ purpose for AI in the workplace. Their quest for artificial general intelligence is a ticket to using AI to displace as many workers from their jobs as possible, and to destroy jobs for generations. A strong worker voice can redirect the goal toward augmenting human skills and knowledge to improve worker productivity and work quality.

There’s already some promising activity on this pathway. The AFL-CIO and unions representing teachers, communications workers, and the building trades have formed partnerships with Microsoft, OpenAI, and other big developers to explore developing AI tools that improve the quality of workers’ jobs and the services they provide. At Fenway Park in Boston, food and beverage workers represented by UNITE HERE negotiated an agreement with Aramark that provides for advance notice and consultation rights on introducing automated beer sellers. It also provides job protections, sensible staffing and work arrangements, and fair compensation for the workers whose jobs may be affected by automation. I served as a mediator in those negotiations. A team of us from MIT recently worked with and observed the healthcare giant Kaiser Permanente and the Alliance of Health Care Unions as they reached a breakthrough agreement to create a task force of executives and union leaders. The task force will work in partnership on AI to engage vendors and provide opportunities for front-line workers to propose ideas for using AI to improve their jobs and the services they provide.

From robotics to electronic medical systems, research shows that when workers and tech designers collaborate, they generate higher productivity and more satisfying work than designers working alone. Workers, not AI developers, know how work gets done.

Pope Leo challenges us to use AI to serve humanity and respect the dignity and rights of workers. Dignity includes sharing in the economic benefits of new technologies, with stronger job security and even new, explicit wage-setting norms and formulas. That means building a modern-day social contract to replace the one that is broken today.

After World War II, the United Auto Workers and General Motors negotiated the so-called Treaty of Detroit, which set future wage increases to match cost-of-living increases and growth in national productivity spurred by the technological and organizational innovations of that era. It became a social contract that built our middle class for the next 30 years.

What better way to honor Pope Leo’s call than to change the trajectory of AI development while establishing new wage norms to build a new social contract for our modern age?

Thomas A. Kochan is the George M. Bunker Professor Emeritus at the MIT Sloan School of Management and a faculty member in the MIT Institute for Work and Employment Research. He is the author of the forthcoming book “Roads Not Taken: Lessons for Building a New Social Contract at Work.”

Article link: https://mitsloan.mit.edu/ideas-made-to-matter/heeding-popes-call-to-ensure-ai-protects-human-dignity?