One word: implementation.

Increasingly, I’m convinced that the underappreciated challenges of implementation explain the ever-expanding gap between the promise of emerging technologies (sensors, AI) and their comparatively limited use in clinical care and pharmaceutical research. (Updated disclosure: I am now a VC, associated with a pharma company; views expressed, as always, are my own.)

Technology Promises Disruption Of Healthcare…

Let’s start with some context. Healthcare, it is universally agreed, is “broken,” and in particular, many of the advances and conveniences we now take for granted in virtually every other domain remain largely aspirational goals, or occasionally pilot initiatives, in medicine.

Healthcare is viewed by many as an ossified enterprise desperately in need of some disruption. As emerging technologies shook up other industries originally viewed as too hidebound to ever change, there was in many quarters a profound hope that advances like the smartphone or AI, and approaches like agile development and design thinking, could reinvent the way care is delivered, and more generally, help to reconceptualize the way each of us thinks about health and disease.

In particular, these technologies offered the promise of helping improve care in at least five ways:

- From reactive to anticipatory

- From episodic to continuous

- From a focus on the average patient to a focus on each individual patient

- From care based on precedent (previous patients) to care based on continuous feedback and learning

- From patient-as-recipient of care to patient-as-participant (and owner/driver of care) – a foundational theme of both the PASTEUR translational medicine training program Denny Ausiello and I organized in 2000 (summarized in the American Journal of Medicine, here), as well as of Eric Topol’s The Patient Will See You Now (my Wall Street Journal review here).

As Denny and I wrote in 2013, this time in the context of our CATCH digital health translational medicine initiative, emerging technology:

“…provides a way for medicine to break out of its traditional constraints of time and place, and understand patients in a way that’s continuous rather than episodic, and that strives to offer care in a fashion that’s anticipatory or timely rather than reactive or delayed.”

These technologies also afforded new hopes to pharma, in particular, the ability to:

- better understand disease (assessment of phenotype and genotype that’s at once more granular and more comprehensive);

- better understand illness/patient experience of disease (can capture more completely and perhaps more quantitatively and in more dimensions what it feels like to experience a condition);

- forge a close connection with patients, and add value beyond the pill. The idea of moving from assets to solutions is a perennial favorite of consultants (this, for example).

… And How’s That Going?

And yet, here we are. While some consultants suggest we are further along than even their extravagantly optimistic predictions of 2013 had imagined, my own conversations with a range of stakeholders suggest progress has been painfully slow, and the practice of both medicine and drug development generally has not felt the impact of these emerging technologies, to put it very politely (and most experts with whom I’ve spoken over the last several months have been far blunter than that).

As Warner Wolf used to say, let’s go to the videotape. Consider some of the published, peer-reviewed studies evaluating digital health approaches:

Given these data, it may not come as a great surprise that a soon-to-be-published review (previewed by its lead author, Brennan Spiegel, on Twitter), representing a systematic evaluation of high-quality randomized controlled trials, finds that “device enabled remote monitoring does not consistently improve clinical outcomes,” according to Spiegel.

The struggles of digital health to demonstrate value are not new – see this post from January of 2014 – nor are they exceptional in medicine. In fact, as I’ve previously noted, many technologies and approaches thought intuitively to offer obvious benefit turned out not to, from the use of bone marrow transplant in breast cancer to the use of a category of anti-arrhythmic medication following heart attack to the routine use of a pulmonary artery catheter in ICU patients. In each case, benefit was thought so obvious as to question the ethics of even doing a randomized study, and then studies were done refuting the hypothesis.

Technology Adoption In Pharma

Doubts about efficacy are also one of the reasons pharma has been extremely slow to adopt some new technology, concerns that have been validated by some early pilots. For example, I am aware of what seemed to be the perfect case of delivering a solution rather than a product: a company made a surgical product, and invested significant resources in developing a service that provided useful post-op advice and support (delivered by highly trained nurses) to patients who received the product, thus helping the patient, the surgeon (unburdening his or her office, while simultaneously helping drive better outcomes), and the company, by making its product more attractive. Result: I have been told that the service ultimately was shut down, because few patients availed themselves of it.

I’ve also heard from many stakeholders of a broader concern about the solution-not-pill approach: from the perspective of many commercial organizations, putting resources towards “solutions” takes money from commercial budgets, and is often felt not to deliver commensurate commercial returns. Translation: it eats into profit margins. Deeper translation: while “solutions” might be appealing in a truly value-based world, where revenue is driven by outcomes, at least today, revenue is driven by sales (more accurately, sales minus rebates), and investing in solutions, in my industry survey, tends to be seen as something that’s championed far more enthusiastically in public than in private.

One more dispiriting (similarly sanitized/anonymized) example: in 2013, I wrote that one of the advantages of capturing patient experience was that it provided a way of advancing a product that offered similar primary endpoints but which delivered a better patient experience. Shortly after that was published, I was told of an example where the exact opposite occurred: a company developed what was essentially an improved version of an existing oncology product, with better tolerability. However, it was killed at a relatively late stage by a pharma’s commercial team, who determined they would never be able to get “premium pricing,” especially since the existing product would be generic relatively soon. Without a significantly improved primary endpoint, the commercial group determined, payors would simply not reimburse for the new product. (Of course, one could argue that improved tolerability might lead to higher adherence and better real-world outcomes, but the commercial team, at least in this case, apparently didn’t anticipate that would be persuasive.) While I assume both pharmas and payors would (and will) push back against this example, I suspect it’s fairly representative of how these decisions are actually made.

Digital Biomarkers, In Context

An area often cited as holding particular promise is “digital biomarkers” – using technology to provide the sort of information we’ve long sought from traditional biomarkers, such as an early read on whether a medicine is doing what it should. The challenge here, though, is one of compounded hype – the extravagant expectations around biomarkers multiplied by the extravagant expectations around digital technology. The reality – as Anna Barker, leader of the National Biomarker Development Alliance, has long emphasized – is that it’s astonishingly difficult to develop a robust, validated biomarker, and doing so requires a methodical assessment process – as traditional medical device and diagnostics makers appreciate all too well.

The problem of utilizing biomarkers in early clinical drug development is especially close to home for me, as I spent several years in pharma specifically working in this area. The goal – and the real way biomarkers could save a ton of money – is not to provide reassurance to the clinical team, but to tell them their drug doesn’t seem to be working, and isn’t likely to work. By the time a drug is ready for testing in people, it more or less is what it is – either it will safely work for the intended indication or it won’t. Most won’t, and the sooner you realize that, the better. Thus, if a biomarker can persuade a team to kill a product in Phase 1 rather than after Phase 3, you save a huge amount of time and money.

The problem, however, is that understandably, all the incentives and all the glory (such as it is) in drug development revolve around moving promising products forward, and every team desperately wants to believe that its molecule is going to be the next Sovaldi or Keytruda. It’s in this context that biomarkers must prove their worth, and you can imagine what a high bar it is. While teams welcome data that vaguely support their product, they are far more critical of data that might derail development – understandable given all the work that’s already gone into getting the drug into clinical trials. Your biomarker data needs to be solid enough to persuade them that further effort is futile.

(Of course there are many other uses for biomarkers in clinical development, such as dose selection.)

Tech vs Pharma View of Data: Positive vs Negative Optionality

Taking a step back, from biomarkers specifically to data more generally, one of the most profound differences between pharma and tech companies is how they view data, and the collection of data. Most tech companies strive to collect as much data as possible, as these data represent largely positive optionality – lots of upside (opportunities to capitalize on the knowledge), comparatively little downside. On the other hand – and especially in late development – pharma companies have traditionally viewed data collection in an extremely guarded way, as a risky undertaking that in many ways provides more downside than upside. In my experience, pharmas aren’t seeking to bury relevant safety signals – if a product is harmful, they desperately want to know, at least from what I’ve seen and experienced. Their concern instead is their obligation to pursue anything they might discover, including the slew of false positives that inevitably emerge as you evaluate more and more data. While scientists and regulators (Norman Stockbridge, as I noted this April in the Washington Post, has been thinking about this for years) are increasingly used to handling this risk, and adjusting for false discovery rate, I suspect the concern is basically that trial lawyers and juries may be less interested in such subtleties.
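The false-positive worry can be made concrete with a little arithmetic. The sketch below is purely illustrative: it assumes independent tests at a conventional 0.05 threshold (my assumption, not a figure from any particular trial), and it pairs that calculation with the Benjamini-Hochberg procedure, one standard way of controlling the false discovery rate. The function names and the example p-values are hypothetical.

```python
def prob_any_false_positive(num_tests, alpha=0.05):
    """Chance of at least one false positive across independent tests
    when no real effect exists in any of them."""
    return 1.0 - (1.0 - alpha) ** num_tests


def benjamini_hochberg(p_values, fdr=0.05):
    """Return the indices of p-values kept as discoveries under the
    Benjamini-Hochberg step-up procedure at the given false discovery rate."""
    m = len(p_values)
    # Rank the tests from smallest to largest p-value.
    order = sorted(range(m), key=lambda i: p_values[i])
    cutoff_rank = 0
    for rank, idx in enumerate(order, start=1):
        # Keep the largest rank whose p-value sits under its sliding threshold.
        if p_values[idx] <= rank / m * fdr:
            cutoff_rank = rank
    return sorted(order[:cutoff_rank])


# The more endpoints examined, the likelier a spurious "signal" becomes.
for n in (1, 10, 100):
    print(n, round(prob_any_false_positive(n), 3))

# Made-up p-values: four fall under a naive 0.05 cutoff.
made_up = [0.001, 0.008, 0.039, 0.041, 0.20, 0.74]
print(benjamini_hochberg(made_up))
```

With these made-up numbers, a naive 0.05 cutoff would flag four of the six results, while the adjusted procedure retains only the first two. That is the sort of subtlety scientists and regulators are used to, and that a jury may not be.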

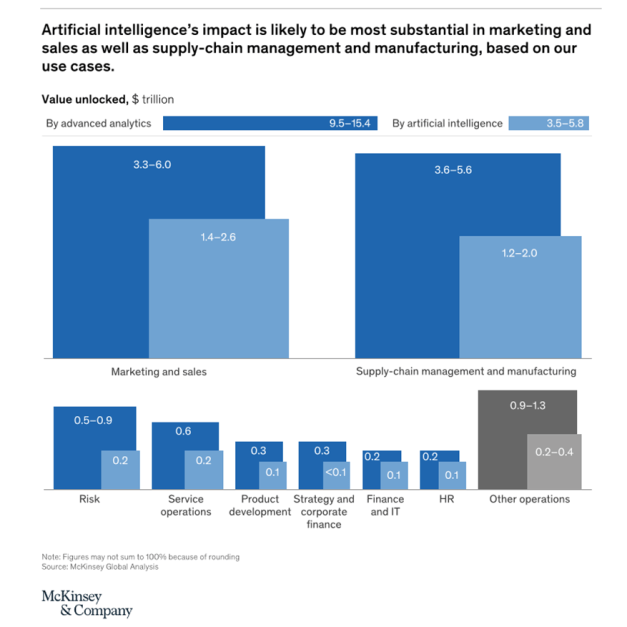

A promising recent trend, however, is that increasingly, pharma companies have started to worry about the other side of this equation – the upside, the opportunities and insights they’re forgoing by not taking a closer and more coherent and integrated look at the data they have, and the data they could be collecting. My sense is that much of this newfound interest in pharma is actually being driven at the board level, down into the organization, as some board members seem concerned about the possibility of a “tech giant” swooping in and figuring out some key insights that were easily available to a pharma company willing to take off its own blinders. The recent AI hype has only added fuel to this fire.

There are also two sides to pharma’s reputation for being conservative and resistant to change. Most leaders in the industry would acknowledge there’s a lot of truth to this, and many in the trenches would argue it’s for a good reason: someone’s always trying to sell pharma a shiny new object, and the vast majority of these promises have failed to deliver – often after significant investment of time and treasure. Industry insiders who are fans of Nassim Taleb might even invoke his critique of “neomania,” and perhaps assert they prefer assays and approaches that have been “battle-tested,” and have “withstood the test of time.”

On the other hand, many innovators I know within and outside of the industry are beyond frustrated by the glacial pace of change, by the fact that, as UCSF neurologist John Hixson notes (and you can find our 2015 Tech Tonics interview with him here), most pharmas still use paper diaries to assess seizures, even though better metrics and measures are now available. As he and many others have emphasized to me, the mentality seems to be that no one has ever gotten fired for effectively executing an approach that’s led to FDA approvals before.

A particularly interesting side note here is that often in this sort of situation, you might anticipate that the innovation would come from the agile upstart company, perhaps a small biotech more willing to take a chance on a promising emerging methodology. But curiously, almost everyone with whom I’ve spoken – including experts at big pharma, small biotechs, and the CROs who serve them (e.g., this Tech Tonics interview with a digital health expert at Medidata) – believes that the experimentation will be driven by big pharma, simply because they are more likely to have the money to explore such an approach. Small biotechs, in contrast, were felt to be less likely to take a chance, as many seek to partner programs with, or to be acquired by, big pharmas, and thus need the big pharmas to be comfortable with their data and approach.

So where does this leave us? Is digital health “digital snake oil,” as the head of the AMA suggested last year, or “dead,” as an investor suggested (somewhat tongue-in-cheek) a few months ago? Phrased differently: is the juice worth the squeeze?

Implementation Matters

Which brings us back to implementation (remember implementation? This is a blog post about implementation….)

Most of us think about innovation (especially in technology) as revelation, a singular event that once made visible, radically changes how we think and act.

Yet that turns out to be a poor mental model of how innovation finds its way into our lives. Consider, for instance, the vast distance between the very first automobile, created by Carl Benz in Germany in 1885-1886, and the modern car. Benz’s car was a novelty, but it didn’t immediately transform the lives of German citizens or occupy the central role cars have in our lives today. The differences you’d probably notice at first are the construction, the materials, the design of the engine. But also consider the world into which Benz’s car was born: there weren’t asphalt highways connecting every conceivable destination, and there weren’t gas stations in every town, often at many intersections. There were mostly dirt roads and a lot of horses. In order for Benz’s innovation to be (more) fully realized, to be implemented at scale, there needed to be a series of advances.

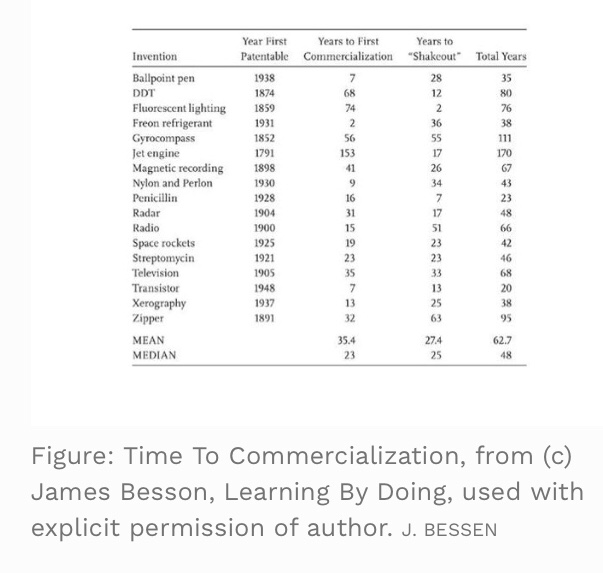

The distinction between innovation and implementation was a key takeaway from a fascinating book written by Boston University economist (and former software engineer and technology CEO) James Bessen, entitled Learning By Doing (and hat-tip to AstraZeneca physician-scientist Kevin Horgan for suggesting it to me). While the book is perhaps best known as a response to the idea (raised in The Second Machine Age and elsewhere) that technology is associated with increased inequality (I don’t have the depth to mediate this debate), it was Bessen’s discussion of implementation that really caught my attention.

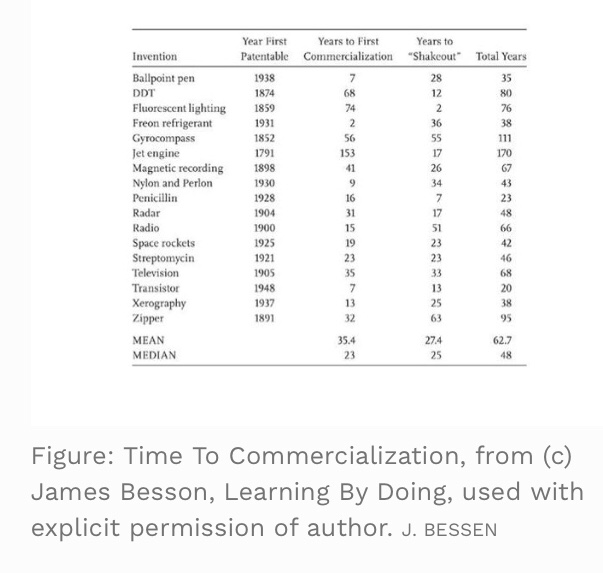

“The distinction between invention and implementation is critical and too often ignored,” Bessen writes, and goes on to cite data suggesting that “75-95% of the productivity gains from many major new technologies were realized only after decades of improvement in the implementation.” (See figure below.)

According to Bessen,

“A new technology typically requires much more than an invention in order to be designed, built, installed, operated, and maintained. Initially much of this new technical knowledge develops slowly because it is learned through experience, not in the classroom.”

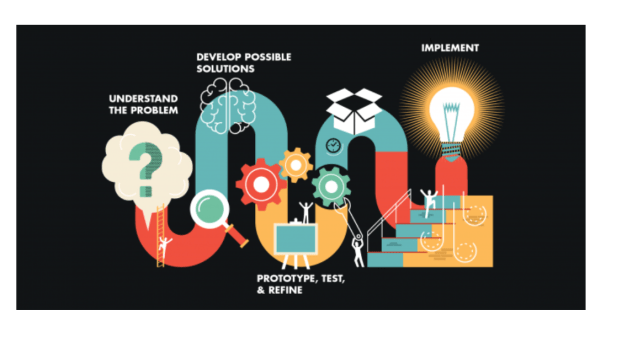

The four hurdles to technology implementation at scale that Bessen outlines will seem familiar to anyone in healthcare:

- Many people in different occupations need to acquire emerging specialized knowledge, skill, and know-how.

- The technology itself often needs to be adapted for different applications.

- Businesses need to figure out how best to use the new technology, and how to organize the workplace.

- New training institutions and new labor markets are often required.

One problem – of particular relevance to healthcare – is what Bessen calls “coordination failure.” As he points out,

“[E]arly-stage technologies typically have many different versions. For example, early typewriters had different keyboard layouts. Workers choose a particular version to learn and firms invest in a particular version, but they need to coordinate their technology choices for markets to work well…. Market coordination won’t happen unless new technology standards are widely accepted, and sometimes that takes decades.”

While Bessen doesn’t offer any magic answers to the challenge of implementation (he does highlight open standards and employee mobility as positive factors), I was struck by his detailed description of technology implementation, and his framing of it as perhaps at least as important as – but also very different from – the challenge of coming up with a new idea itself. It also seemed like an important reminder that some of the most important success stories at the intersection of health and tech may not come from the team that’s developed the sexiest new technology – the best AI, the most sensitive sensor – but rather the team that’s figured out how best to apply a technology, how best to pragmatically solve the implementation challenge.

There’s a final point that deserves mention, as it’s all too easy to overlook. As we contemplate the challenges of technology implementation, and acknowledge how far we have to go, we must also remind ourselves why we’re trying so passionately in the first place. While it’s undoubtedly true that technology is overhyped – at times obscenely, comically, and dangerously so – it also provides us with radically different ways to see the world, engage the world, and change the world. New tools – as science historian/philosopher Douglas Robertson argued in his 2003 classic Phase Change (also recommended by Kevin Horgan) – play a critical role in driving most paradigmatic change in science, enabling us to ask questions previous generations could perhaps never even contemplate, and tackle them in a fashion previous generations might never have imagined.

Bottom Line

When we survey the landscape at the intersection of technology and health, it’s absolutely true, as critics point out, that most of these technologies have not yet demonstrated the potential that advocates (to their credit) see and (to their shame) assert has already arrived, whether in precision medicine (as I reviewed in 2015) or digital health (as I reviewed last year). But I also believe there’s a there there. The powerful (and often interrelated) technologies we see emerging – from precision medicine to cloud computing to AI – really do afford the opportunity to radically reconceptualize our world, and more to the point, profoundly refine our understanding of health and disease, and ultimately effect positive change.

But coming up with even transformative technologies is just the first step, and a step that historically has rarely resulted in the sort of instant transformation we’ve naively anticipated. Yet it’s the long and complex path towards implementation that we should have anticipated, and with which many of our most imaginative innovators will now engage.

David Shaywitz Contributor

Article link: https://www.forbes.com/sites/davidshaywitz/2017/12/10/winning-health-tech-entrepreneurs-will-focus-on-implementation-not-fetishize-invention/#52eebfb943c2

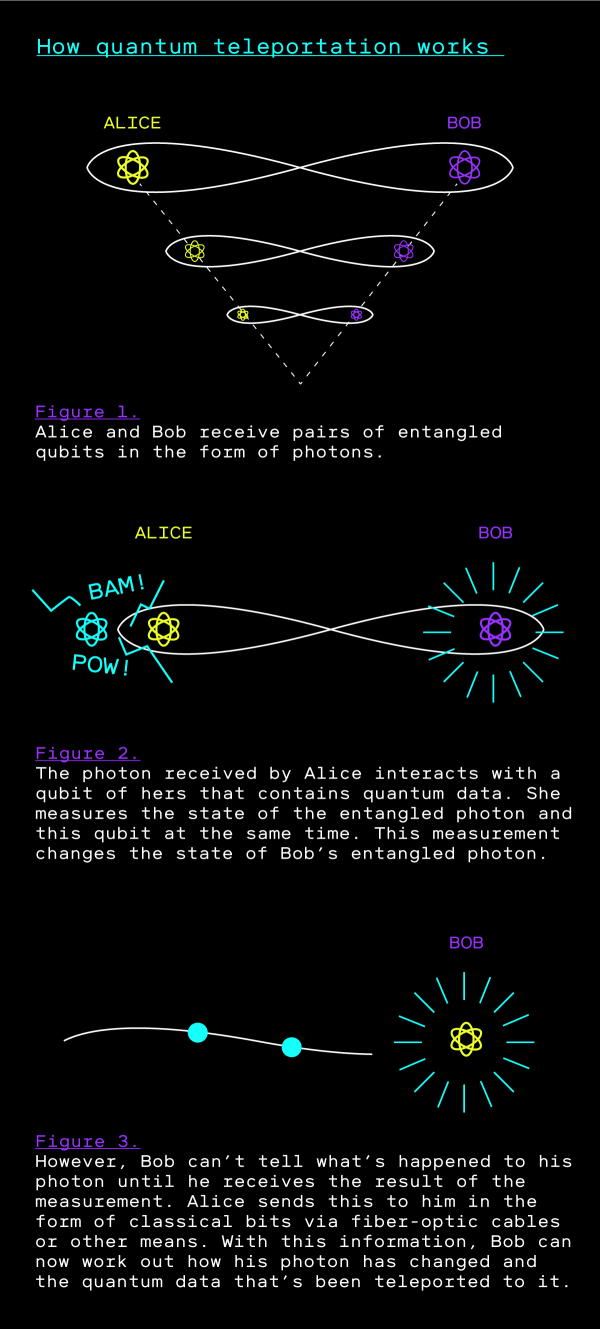

Researchers and companies are creating ultra-secure communication networks that could form the basis of a quantum internet. This is how it works.

Materials in cables can absorb photons, which means they can typically travel for no more than a few tens of kilometers. In a classical network, repeaters at various points along a cable are used to amplify the signal to compensate for this.

This may sound like science fiction, but it’s a real method that involves transmitting data wholly in quantum form. The approach relies on a quantum phenomenon known as