By KIRSTEN ERRICK

SEPTEMBER 26, 2022

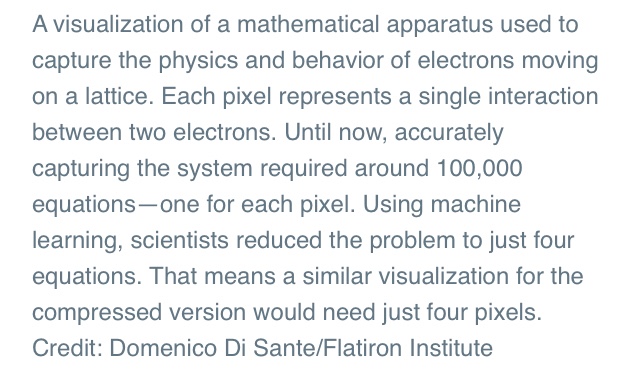

The Government Accountability Office highlighted deficiencies in common data standards, interoperability and public health IT infrastructure.

Public health emergencies like the rapidly evolving COVID-19 pandemic make health data, and the ability to share it, critical, according to a Government Accountability Office report released Thursday.

The watchdog found that public health entities cannot share new data and other important information in real time, which hinders their ability to respond quickly to health crises.

GAO stated that the government faces numerous challenges in managing public health data. In particular, the watchdog noted deficiencies in three areas that the government must address: common standards for collecting data, interoperability and public health IT infrastructure.

Common data standards are “requirements for public health entities to collect certain data elements, such as patient characteristics (e.g., name, sex, and race) and clinical information (e.g., diagnosis and test results) in a specific way.” A lack of such standards can make it difficult for federal agencies to compare data across different states, a challenge the Centers for Disease Control and Prevention faced in collecting information on the COVID-19 pandemic.

Interoperability is “the ability of data collection systems to exchange information with and process information from other systems.” GAO noted that the lack of interoperability during the early days of COVID-19 forced health officials and hospitals to manually enter data into several systems, affecting the CDC’s ability to make timely decisions.

Lastly, public health IT infrastructure refers to “the computer software, hardware, networks and policies that enable health entities to report and retrieve data and information.” Not having a public health IT infrastructure can hinder the timeliness and completeness of information shared during public health emergencies. For example, during the early stages of the COVID-19 pandemic, some states manually collected, processed and transferred data, which increased the likelihood of errors.

While the Department of Health and Human Services launched the HHS Protect platform in April 2020 to provide a centralized source of information reflecting COVID-19 numbers, public health and state organizations questioned the completeness and accuracy of the data.

The report noted that federal law requires HHS to establish a national public health situational awareness network with a standard data format that provides secure, real-time information to help detect and respond to infectious diseases. However, the network has not been implemented, nor have issues previously identified by GAO been addressed. According to a previous GAO report, HHS Protect does not satisfy all of the legal requirements for this network. As a result, the watchdog states that the legally mandated network does not currently exist.

Specifically, the report stated, “We agree that HHS has implemented several systems related to public health situational awareness and biosurveillance [including HHS Protect]. However, the law called for a near real-time electronic nationwide public health situational awareness and biosurveillance capability through an interoperable network of systems to share data and information. This capability was to be made up of interoperable systems that would enable the simultaneous sharing of information needed to enhance situational awareness at the federal, state, local and tribal levels of public health. This capability does not exist today.”

According to GAO, “having near real-time access to these data could significantly improve our nation’s preparedness for public health emergencies and potentially save lives. Without the network, federal, state and local health departments, hospitals and laboratories are left without the ability to easily share health information in real-time to respond effectively to diseases.”

GAO stated that it previously made several recommendations related to the three challenges, but these have not been implemented.

For common data standards, GAO recommended that HHS establish an expert committee for data collection and reporting standards and that the CDC define specific actions and a timeframe for its data modernization efforts.

To address interoperability, GAO recommended that HHS ensure that its planning for the public health situational awareness network addresses how interoperability standards will be used.

For public health IT infrastructure, GAO recommended that HHS prioritize developing the network by establishing near- and long-term actions to show progress, identify an office to oversee the network’s development, and identify and document challenges to information sharing and lessons from the COVID-19 pandemic.

The most recent report did not describe how the agencies responded to GAO’s recommendations. However, HHS met the previous recommendations with mixed agreement.