June 14, 2023. Jennifer Abbasi

Article Information

JAMA. Published online June 14, 2023. doi:10.1001/jama.2023.10262

A year and a half after US Surgeon General Vivek Murthy, MD, MBA, called attention to increasing symptoms of depression, anxiety, and suicidal ideation among children and adolescents, the Nation’s Doctor is now sounding the alarm on a likely driver of the youth mental health crisis: social media use.

“The most common question parents ask me is, ‘Is social media safe for my kids?’ The answer is that we don’t have enough evidence to say it’s safe, and in fact, there is growing evidence that social media use is associated with harm to young people’s mental health,” Murthy said in a recent statement announcing a new US Surgeon General’s Advisory.

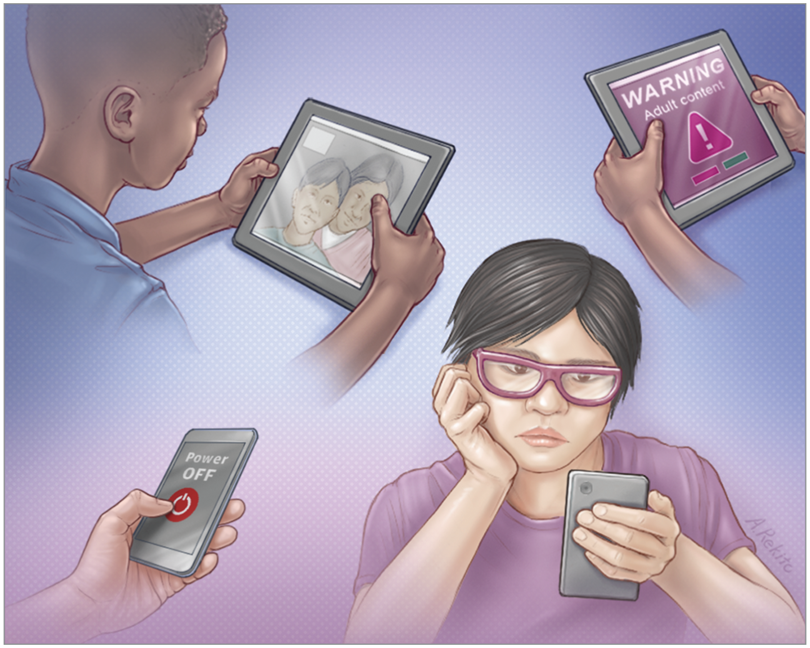

Such advisories are reserved for major public health issues that warrant more awareness and action. Released in late May, the new advisory highlighted increasing concerns about social media’s effects on the mental health of the nation’s youth. It pointed out that nearly all teenagers report using a social media platform and that more than a third say they use at least 1 platform “almost constantly.” Younger children are also active online. Despite a minimum age requirement of 13 years on most US social media platforms, nearly 40% of 8- to 12-year-olds report social media use.

“Children are exposed to harmful content on social media, ranging from violent and sexual content, to bullying and harassment,” Murthy cautioned in the announcement. “And for too many children, social media use is compromising their sleep and valuable in-person time with family and friends. We are in the middle of a national youth mental health crisis, and I am concerned that social media is an important driver of that crisis—one that we must urgently address.”

Although evidence is growing that problematic social media use can negatively affect youth mental health and well-being, the advisory also emphasized that more research is needed to understand the “full scope and scale” of effects on young people—both negative and positive. In the meantime, the burden of protecting children and adolescents should fall not only to their families, but also to technology companies, policy makers, and researchers, the advisory said.

A Wholesale Shift

Leaders of several major medical organizations, including the American Academy of Pediatrics, the American Academy of Family Physicians, and the American Psychiatric Association, welcomed the advisory. “As physicians, we see firsthand the impact of social media, particularly during adolescence—a critical period of brain development,” Jack Resneck Jr, MD, president of the American Medical Association, the publisher of JAMA, said in the announcement.

In emails with JAMA, child and adolescent mental health experts also agreed with the Surgeon General’s message.

“This advisory maps onto what I am concerned about as a clinician, as a researcher, and as a parent,” said Jeremy Veenstra-VanderWeele, MD, a professor of child and adolescent psychiatry at the Columbia University Irving Medical Center and a JAMA Psychiatry editorial board member.

“We have seen a wholesale shift in how youth are interacting with each other,” he continued. “We see what we think are the impacts of this shift, but we need much more research to understand what we are seeing.” Circumstantially, he said, clinicians have observed “a marked rise in youth anxiety and depression over the same period of time when social media has become so widely used.”

Veenstra-VanderWeele and other experts said the advisory struck the right balance between highlighting the potential harms and benefits of social media for youth. Social media use affects different children in different ways, based on their individual characteristics, as well as on cultural, historical, and socioeconomic factors, the advisory noted. For some young people, social media can offer positive connections and support they may not have in their homes, schools, or neighborhoods. These benefits can be especially important for youth who belong to marginalized groups.

But there can be substantial downsides. “It is staggering how much time youth spend on social media,” Veenstra-VanderWeele said. “It really seems all-consuming for some youth, with incredible distress if it is taken away.”

In fact, according to the advisory, teenagers in the University of Michigan’s ongoing Monitoring the Future survey spent an average of 3.5 hours on social media per day in 2021. One in 4 teens reported spending 5 or more hours on the platforms daily. Research published in JAMA Psychiatry found that adolescents who spent more than 3 hours on social media per day had an increased risk of poor mental health outcomes such as depression and anxiety symptoms. And perhaps unsurprisingly, excessive social media use has also been strongly tied to sleep problems in youth.

The hours spent scrolling aren’t the only concern, the advisory noted. There’s also the exposure to harmful messages and behaviors, cyberbullying, and hate-based content. These exposures appear to be taking a toll on the nation’s youth. Nearly half of teenagers—46%—said social media made them feel worse about their body image in a 2022 survey conducted by the Boston Children’s Hospital Digital Wellness Lab. Girls appear to be especially vulnerable to comparing themselves with others on social media, which has been linked with body dissatisfaction, eating disorders, and symptoms of depression.

The advisory acknowledged that the interplay between social media use and youth mental health may be bidirectional and noted that untangling these complex relationships will require more data and transparency than technology companies have been willing to provide so far. It urged these companies to share their data with independent researchers.

Technology companies should also tailor their platforms for children’s developmental capabilities, the American Psychological Association (APA) said in its own health advisory, released in May. Features designed to maximize user engagement—such as displayed “likes,” autoplay content, and infinite scrolling—may not be appropriate for kids.

The Surgeon General’s advisory also outlined actions policy makers, researchers, parents and caregivers, and young people themselves can take immediately. Policy makers can, for example, fund additional research, better protect kids’ privacy, and work to strengthen safety standards, while researchers can prioritize studies that inform those standards. Parents and caregivers, the advisory recommended, can establish technology-free zones in the house to protect sleep and encourage in-person socializing. And young people can aim to adopt healthy practices, such as limiting their social media time.

The Physician’s Role

For Kara Bagot, MD, a New York–based child and adolescent psychiatrist, the advisory is a positive step forward. But she said it falls short in providing a full mitigation plan with resources allocated to address the recommendations.

“This is particularly important as these recommendations are not novel; researchers and clinicians in the field have been urging more action from technology companies, pediatrics and family medicine practitioners, and funding agencies but lack the power or resources to facilitate the changes needed,” said Bagot, who is also an editor at JAMA Psychiatry.

Going forward, “we urgently need to think about testing different approaches to evaluate and potentially mitigate the risks that may be associated with social media use,” Veenstra-VanderWeele said.

Youth should be at the center of addressing the issue of their own social media use, advised Tammy Chang, MD, MPH, an associate professor in the Department of Family Medicine at the University of Michigan and director of the MyVoice National Poll of Youth. The poll has found that many young people already know that social media use has negative impacts and are trying to modulate their use.

“When youth have input and buy-in on initiatives meant to change their behaviors, those initiatives are more likely to succeed,” Chang said, noting that this approach could be critical to the advisory’s success. “Meaningfully partnering with youth now could change the energy around the advisory from something that adults are worried about for youth to a partnership between youth and experts to address something everyone is working on together.”

Pediatricians and family physicians also have an important role. Veenstra-VanderWeele said he believes primary care physicians have a responsibility to discuss safe and balanced use of social media with youth and their parents. These conversations should include setting limits, particularly around sleep.

“In discussions with physicians, youth are often able to describe how they would like to change their use of social media,” he said. “Discussing their desire to change their social media use together with their parents allows collaborative limit-setting, which is an ideal way for youth to align with their parents, rather than generating conflict.”

In their one-on-one discussions with young patients, physicians should also ask about exposure to harmful content or potentially dangerous online interactions, “in the same way that we ask about substance use or sexual activity,” Veenstra-VanderWeele said.

Health professionals can educate families about social media settings that can be adjusted to better customize the platforms for children, such as hiding “like” and “view” counts and restricting time on Instagram, turning off comments and scheduling reminders for “screen time breaks” on TikTok, and toggling off “autoplay” on YouTube.

Physicians can also discuss parents’ screen-related behaviors and how these habits may be affecting their children. “Youth are active observers of their caregivers,” Bagot said. “As such, modeling balanced online behaviors for one’s children is important. Parents need to be educated on [this] and also on what balanced, appropriate behaviors are.”

Ultimately, experts say, efforts to protect youth well-being should not discount the positive interactions that can happen when kids connect on social media. Veenstra-VanderWeele has heard from teens about how important their online community is to them, particularly for those who may not fit in easily with peers at school or in their neighborhood. “We need to figure out how to harness the potential benefits of online social connections while decreasing the potential harms,” he said.

Article link: https://jamanetwork.com/journals/jama/fullarticle/2806277?

Article Information

Conflict of Interest Disclosures: Dr Chang reported serving as a committee member of the Board on Children, Youth, and Families at the National Academies of Sciences, Engineering, and Medicine and directing MyVoice, a national poll of youth that has received internal funding from the University of Michigan. No other disclosures were reported.
