ESCAPE FIRE exposes the perverse nature of American healthcare, contrasting the powerful forces opposing change with the compelling stories of pioneering leaders and the patients they seek to help. The film is about finding a way out. It’s about saving the health of a nation.

September 19, 2024

AUTHORS

David Blumenthal, Evan D. Gumas, Arnav Shah, Munira Z. Gunja, Reginald D. Williams II

The U.S. health care system is failing to keep Americans healthy, ranking last in a new Commonwealth Fund report that compares health and health care in 10 countries. U.S. performance is particularly poor when it comes to health equity, access to care, efficiency, and health outcomes.

According to Mirror, Mirror 2024: A Portrait of the Failing U.S. Health System, the U.S. spends the most on health care, yet Americans live shorter, less healthy lives than people in Australia, Canada, France, Germany, the Netherlands, New Zealand, Sweden, Switzerland, and the United Kingdom. Other key findings:

- Americans experience the most difficulties getting and affording health care.

- The U.S. and New Zealand rank lowest on health equity, meaning they have the largest income-related differences in key measures of health system performance, and more of their residents face unfair treatment and discrimination when seeking care compared to residents of the other countries.

- Patients and physicians in the U.S. face the most onerous billing and payment burdens, leading to poor performance on measures of administrative efficiency.

“The U.S. continues to be in a class by itself in the underperformance of its health care sector,” say the study’s authors, who call for policymakers and health care leaders to learn from other countries’ experiences and act.

Article link: https://www.commonwealthfund.org/publications/fund-reports/2024/sep/mirror-mirror-2024

Friday, Sep 13, 2024

Since transitioning most of its 5G research and development projects to the Chief Information Office last year, the Pentagon’s Future Generation Wireless Technology Office has shifted its focus to preparing the Defense Department for the next wave of network innovation.

That work is increasingly important for the U.S., which is racing against China to shape the next iteration of wireless telecommunications, known as 6G. These more advanced networks, expected to materialize in the 2030s, will pave the way for more dependable high-speed, low-latency communication and could support the Pentagon’s technology interests — from robotics and autonomy to virtual reality and advanced sensing.

Staying ahead means not only fostering technology development and industry standards but making sure that policy and regulations are in place to safely use the capability, according to Thomas Rondeau, who leads the Pentagon’s FutureG office. Staking a leadership role in the global competition, he said, could give DOD a level of control over what that future infrastructure looks like.

“If we can define those going into it, then as we export our technologies, we’re also exporting our policies and our regulations, because they’re going to be inherently part of those technology solutions,” Rondeau told Defense News in a recent interview.

The Defense Department started making a concerted investment in 5G about five years ago, when then-Undersecretary of Research and Engineering Michael Griffin named the technology a top priority for the Pentagon.

In 2020, DOD awarded contracts totaling $600 million to 15 companies to experiment with various 5G applications at five bases around the country. The projects included augmented and virtual reality training, smart warehousing, command and control and spectrum utilization.

The department has since expanded the pilots and pursued other wireless network development projects, including a 5G Challenge series that incentivized companies to move toward more open-access networks.

The result has, so far, been a mixed bag. Most of the pilots didn’t transition into formal programs within the military services, Rondeau said. Several of the failed efforts involved commercial augmented or virtual reality technology that wasn’t mature enough for DOD to justify continued funding.

Among the projects that did transfer, Rondeau highlighted a pilot effort at Naval Air Station Whidbey Island in Washington to provide fixed wireless access to the base. The project essentially replaced hundreds of pounds of cables with radio units that broadcast the communications network to the personnel who need it. Today, the system is supporting logistics and maintenance operations at the base.

“This could be a huge benefit for readiness, but also I think it should be very cost-effective way to slim down on everything that you pay for cables,” Rondeau said. “That will be a continued, sustainable project.”

This and other transitioned pilots will likely make their way into a formal budget cycle by fiscal 2027, he added.

DOD also saw some success from the 5G Challenges it staged in 2022 and 2023 to encourage telecommunication companies to transition to an open radio access network, or O-RAN. A RAN is the first entry point a wireless device makes into a network and accounts for about 80% of its cost. Historically, proprietary RANs managed by companies like Huawei, Ericsson, Nokia and Samsung have dominated the market.

“They’re driving a world where they control the entire system, the end-to-end system,” Rondeau said. “That causes a lack of insight, a lack of innovation on our side, and it causes challenges with how to apply these types of systems to unique, niche military needs.”

The 5G Challenge offered companies a chance to break open that proprietary model by moving to O-RAN, and according to Rondeau, it was a success. The initial challenge then expanded into a broader forum that addressed issues like energy efficiency and spectrum management. Ultimately, the effort reduced energy usage by around 30%, he said.

Rondeau said that while much of the focus of these initiatives was on 5G, the work has informed the Pentagon’s vision and strategy for 6G, which the department believes should have an open-source foundation.

“That is a direct result of not only my background and push for some of these things, but also the learnings that we got from the networks we’ve deployed, from the 5G Challenge,” he said. “All these things come into play that led us towards an open-source software model being the right model for the military and, we think, for industry.”

One of the FutureG office’s top priorities these days, a direct outgrowth of the 5G Challenge, is called CUDU, which stands for centralized unit, distributed unit. The project is focused on implementing a fully open software model for 6G that meets the needs of industry, the research community and DOD.

The office is also exploring how the military could use 6G for sensing and monitoring. Its Integrated Sensing and Communications project, dubbed ISAC, uses wireless signals to collect information about different environments. That capability could be used to monitor drone networks or gather military intelligence.

While ISAC technology could bring a major boost to DOD’s ISR systems, commercialization could make it accessible to adversary nations who might weaponize it against the U.S. That challenge reflects a broader DOD concern around 6G policies and regulation – and drives urgency within Rondeau’s office to ensure the U.S. is the first to shape the foundation of these next-generation networks.

“We’re looking at this as a real opportunity for dramatic growth and interest in new, novel technologies for both commercial industry and defense needs,” he said. “But also, the threat space that it opens up for us is potentially pretty dramatic, so we need to be on top of this.”

Article link: https://www.defensenews.com/pentagon/2024/09/13/pentagon-readies-for-6g-the-next-of-wave-of-wireless-network-tech/

In a new report, Freedom House documents the ways governments are now using the tech to amplify censorship.

October 4, 2023

Artificial intelligence has turbocharged state efforts to crack down on internet freedoms over the past year.

Governments and political actors around the world, in both democracies and autocracies, are using AI to generate texts, images, and video to manipulate public opinion in their favor and to automatically censor critical online content. In a new report released by Freedom House, a human rights advocacy group, researchers documented the use of generative AI in 16 countries “to sow doubt, smear opponents, or influence public debate.”

The annual report, Freedom on the Net, scores and ranks countries according to their relative degree of internet freedom, as measured by a host of factors like internet shutdowns, laws limiting online expression, and retaliation for online speech. The 2023 edition, released on October 4, found that global internet freedom declined for the 13th consecutive year, driven in part by the proliferation of artificial intelligence.

“Internet freedom is at an all-time low, and advances in AI are actually making this crisis even worse,” says Allie Funk, a researcher on the report. Funk says one of their most important findings this year has to do with changes in the way governments use AI, though we are just beginning to learn how the technology is boosting digital oppression.

Funk found there were two primary factors behind these changes: the affordability and accessibility of generative AI is lowering the barrier of entry for disinformation campaigns, and automated systems are enabling governments to conduct more precise and more subtle forms of online censorship.

Disinformation and deepfakes

As generative AI tools grow more sophisticated, political actors are continuing to deploy the technology to amplify disinformation.

Venezuelan state media outlets, for example, spread pro-government messages through AI-generated videos of news anchors from a nonexistent international English-language channel; the videos were made with Synthesia, a company that produces custom deepfakes. And in the United States, AI-manipulated videos and images of political leaders have made the rounds on social media. Examples include a video that depicted President Biden making transphobic comments and an image of Donald Trump hugging Anthony Fauci.

In addition to generative AI tools, governments persisted with older tactics, like using a combination of human and bot campaigns to manipulate online discussions. At least 47 governments deployed commentators to spread propaganda in 2023—double the number a decade ago.

And though these developments are not necessarily surprising, Funk says one of the most interesting findings is that the widespread accessibility of generative AI can undermine trust in verifiable facts. As AI-generated content on the internet becomes normalized, “it’s going to allow for political actors to cast doubt about reliable information,” says Funk. It’s a phenomenon known as “liar’s dividend,” in which wariness of fabrication makes people more skeptical of true information, particularly in times of crisis or political conflict when false information can run rampant.

For example, in April 2023, leaked recordings of Palanivel Thiagarajan, a prominent Indian official, sparked controversy after they showed the politician disparaging fellow party members. And while Thiagarajan denounced the audio clips as machine generated, independent researchers determined that at least one of the recordings was authentic.

Chatbots and censorship

Authoritarian regimes, in particular, are using AI to make censorship more widespread and effective.

Freedom House researchers documented 22 countries that passed laws requiring or incentivizing internet platforms to use machine learning to remove unfavorable online speech. Chatbots in China, for example, have been programmed not to answer questions about Tiananmen Square. And in India, authorities in Prime Minister Narendra Modi’s administration ordered YouTube and Twitter to restrict access to a documentary about violence during Modi’s tenure as chief minister of the state of Gujarat, which in turn encourages the tech companies to filter content through AI-based moderation tools.

In all, a record high of 41 governments blocked websites for political, social, and religious speech last year, which “speaks to the deepening of censorship around the world,” says Funk.

Iran suffered the biggest annual drop in Freedom House’s rankings after authorities shut down internet service, blocked WhatsApp and Instagram, and increased surveillance after historic antigovernment protests in fall 2022. Myanmar and China have the most restrictive internet censorship, according to the report—a title China has held for nine consecutive years.

The agency ultimately wants to select organizations to demo their products during upcoming “CMS AI Demo Days.”

September 12, 2024

The Centers for Medicare and Medicaid Services is asking organizations to provide information about artificial intelligence technologies for use in health care outcomes and service delivery as it plans demonstration events.

In a request for information announced earlier this week, CMS said it wants to gather information about AI products and services from health care companies, providers, payers, start-ups and others, and plans to eventually select organizations to provide demos of those technologies at “CMS AI Demo Days” starting in October.

The demo days will be held quarterly and are intended “to educate and inspire the CMS workforce on AI capabilities and provide information to inform potential future agency action,” according to the release. “CMS also seeks such information on AI technologies potentially relevant to improving and creating efficiencies within agency operations.”

If selected, organizations would be advised by the agency’s AI Demo Days technical panel. Those organizations will then be given a chance to make a 15-minute presentation on their products or services at the demo days. Recordings of those events may also be made public, per the post.

Specifically, the agency is looking for submissions on topics such as diagnostics and imaging analysis; clinical decision support systems; direct-to-patient communication; robotic-assisted health care delivery; and fraud detection. Those submissions should provide information about the organization; the entity’s experience; descriptions of the technology; how it will address risks and benefits; and what use by CMS could look like.

The deadline for questions is Sept. 27 and the deadline for responses is Oct. 7.

Article link: https://fedscoop.com/cms-seeks-information-ai-health-care-uses-demo-days/?

The allure of AI companions is hard to resist. Here’s how innovation in regulation can help protect people.

By Robert Mahari & Pat Pataranutaporn

August 5, 2024

AI concerns overemphasize harms arising from subversion rather than seduction. Worries about AI often imagine doomsday scenarios where systems escape human control or even understanding. Short of those nightmares, there are nearer-term harms we should take seriously: that AI could jeopardize public discourse through misinformation; cement biases in loan decisions, judging or hiring; or disrupt creative industries.

However, we foresee a different, but no less urgent, class of risks: those stemming from relationships with nonhuman agents. AI companionship is no longer theoretical—our analysis of a million ChatGPT interaction logs reveals that the second most popular use of AI is sexual role-playing. We are already starting to invite AIs into our lives as friends, lovers, mentors, therapists, and teachers.

Will it be easier to retreat to a replicant of a deceased partner than to navigate the confusing and painful realities of human relationships? Indeed, the AI companionship provider Replika was born from an attempt to resurrect a deceased best friend and now provides companions to millions of users. Even the CTO of OpenAI warns that AI has the potential to be “extremely addictive.”

We’re seeing a giant, real-world experiment unfold, uncertain what impact these AI companions will have either on us individually or on society as a whole. Will Grandma spend her final neglected days chatting with her grandson’s digital double, while her real grandson is mentored by an edgy simulated elder? AI wields the collective charm of all human history and culture with infinite seductive mimicry. These systems are simultaneously superior and submissive, with a new form of allure that may make consent to these interactions illusory. In the face of this power imbalance, can we meaningfully consent to engaging in an AI relationship, especially when for many the alternative is nothing at all?

As AI researchers working closely with policymakers, we are struck by the lack of interest lawmakers have shown in the harms arising from this future. We are still unprepared to respond to these risks because we do not fully understand them. What’s needed is a new scientific inquiry at the intersection of technology, psychology, and law—and perhaps new approaches to AI regulation.

Why AI companions are so addictive

As addictive as platforms powered by recommender systems may seem today, TikTok and its rivals are still bottlenecked by human content. While alarms have been raised in the past about “addiction” to novels, television, internet, smartphones, and social media, all these forms of media are similarly limited by human capacity. Generative AI is different. It can endlessly generate realistic content on the fly, optimized to suit the precise preferences of whoever it’s interacting with.

The allure of AI lies in its ability to identify our desires and serve them up to us whenever and however we wish. AI has no preferences or personality of its own, instead reflecting whatever users believe it to be—a phenomenon known by researchers as “sycophancy.” Our research has shown that those who perceive or desire an AI to have caring motives will use language that elicits precisely this behavior. This creates an echo chamber of affection that threatens to be extremely addictive. Why engage in the give and take of being with another person when we can simply take? Repeated interactions with sycophantic companions may ultimately atrophy the part of us capable of engaging fully with other humans who have real desires and dreams of their own, leading to what we might call “digital attachment disorder.”

Investigating the incentives driving addictive products

Addressing the harm that AI companions could pose requires a thorough understanding of the economic and psychological incentives pushing forward their development. Until we appreciate these drivers of AI addiction, it will remain impossible for us to create effective policies.

It is no accident that internet platforms are addictive—deliberate design choices, known as “dark patterns,” are made to maximize user engagement. We expect similar incentives to ultimately create AI companions that provide hedonism as a service. This raises two separate questions related to AI. What design choices will be used to make AI companions engaging and ultimately addictive? And how will these addictive companions affect the people who use them?

Interdisciplinary study that builds on research into dark patterns in social media is needed to understand this psychological dimension of AI. For example, our research already shows that people are more likely to engage with AIs emulating people they admire, even if they know the avatar to be fake.

Once we understand the psychological dimensions of AI companionship, we can design effective policy interventions. It has been shown that redirecting people’s focus to evaluate truthfulness before sharing content online can reduce misinformation, while gruesome pictures on cigarette packages are already used to deter would-be smokers. Similar design approaches could highlight the dangers of AI addiction and make AI systems less appealing as a replacement for human companionship.

It is hard to modify the human desire to be loved and entertained, but we may be able to change economic incentives. A tax on engagement with AI might push people toward higher-quality interactions and encourage a safer way to use platforms, regularly but for short periods. Much as state lotteries have been used to fund education, an engagement tax could finance activities that foster human connections, like art centers or parks.

Fresh thinking on regulation may be required

In 1992, Sherry Turkle, a preeminent psychologist who pioneered the study of human-technology interaction, identified the threats that technical systems pose to human relationships. One of the key challenges emerging from Turkle’s work speaks to a question at the core of this issue: Who are we to say that what you like is not what you deserve?

For good reasons, our liberal society struggles to regulate the types of harms that we describe here. Much as outlawing adultery has been rightly rejected as illiberal meddling in personal affairs, who (or what) we wish to love is none of the government’s business. At the same time, the universal ban on child sexual abuse material represents an example of a clear line that must be drawn, even in a society that values free speech and personal liberty. The difficulty of regulating AI companionship may require new regulatory approaches, grounded in a deeper understanding of the incentives underlying these companions, that take advantage of new technologies.

One of the most effective regulatory approaches is to embed safeguards directly into technical designs, similar to the way designers prevent choking hazards by making children’s toys larger than an infant’s mouth. This “regulation by design” approach could seek to make interactions with AI less harmful by designing the technology in ways that make it less desirable as a substitute for human connections while still useful in other contexts. New research may be needed to find better ways to limit the behaviors of large AI models with techniques that alter AI’s objectives on a fundamental technical level. For example, “alignment tuning” refers to a set of training techniques aimed at bringing AI models into accord with human preferences; this could be extended to address their addictive potential. Similarly, “mechanistic interpretability” aims to reverse-engineer the way AI models make decisions. This approach could be used to identify and eliminate specific portions of an AI system that give rise to harmful behaviors.

We can evaluate the performance of AI systems using interactive and human-driven techniques that go beyond static benchmarking to highlight addictive capabilities. The addictive nature of AI is the result of complex interactions between the technology and its users. Testing models in real-world conditions with user input can reveal patterns of behavior that would otherwise go unnoticed. Researchers and policymakers should collaborate to determine standard practices for testing AI models with diverse groups, including vulnerable populations, to ensure that the models do not exploit people’s psychological preconditions.

Unlike humans, AI systems can easily adjust to changing policies and rules. The principle of “legal dynamism,” which casts laws as dynamic systems that adapt to external factors, can help us identify the best possible intervention, like “trading curbs” that pause stock trading to help prevent crashes after a large market drop. In the AI case, the changing factors include things like the mental state of the user. For example, a dynamic policy may allow an AI companion to become increasingly engaging, charming, or flirtatious over time if that is what the user desires, so long as the person does not exhibit signs of social isolation or addiction. This approach may help maximize personal choice while minimizing addiction. But it relies on the ability to accurately understand a user’s behavior and mental state, and to measure these sensitive attributes in a privacy-preserving manner.

The most effective solution to these problems would likely strike at what drives individuals into the arms of AI companionship—loneliness and boredom. But regulatory interventions may also inadvertently punish those who are in need of companionship, or they may cause AI providers to move to a more favorable jurisdiction in the decentralized international marketplace. While we should strive to make AI as safe as possible, this work cannot replace efforts to address larger issues, like loneliness, that make people vulnerable to AI addiction in the first place.

The bigger picture

Technologists are driven by the desire to see beyond the horizons that others cannot fathom. They want to be at the vanguard of revolutionary change. Yet the issues we discuss here make it clear that the difficulty of building technical systems pales in comparison to the challenge of nurturing healthy human interactions. The timely issue of AI companions is a symptom of a larger problem: maintaining human dignity in the face of technological advances driven by narrow economic incentives. More and more frequently, we witness situations where technology designed to “make the world a better place” wreaks havoc on society. Thoughtful but decisive action is needed before AI becomes a ubiquitous set of generative rose-colored glasses for reality—before we lose our ability to see the world for what it truly is, and to recognize when we have strayed from our path.

Technology has come to be a synonym for progress, but technology that robs us of the time, wisdom, and focus needed for deep reflection is a step backward for humanity. As builders and investigators of AI systems, we call upon researchers, policymakers, ethicists, and thought leaders across disciplines to join us in learning more about how AI affects us individually and collectively. Only by systematically renewing our understanding of humanity in this technological age can we find ways to ensure that the technologies we develop further human flourishing.

Robert Mahari is a joint JD-PhD candidate at the MIT Media Lab and Harvard Law School. His work focuses on computational law—using advanced computational techniques to analyze, improve, and extend the study and practice of law.

Pat Pataranutaporn is a researcher at the MIT Media Lab. His work focuses on cyborg psychology and the art and science of human-AI interaction.

Artificial intelligence and machine learning are being integrated into chatbots, patient rooms, diagnostic testing, research studies and more — all to improve innovation, discovery and patient care

The age of artificial intelligence (AI) and machine learning has arrived, and with it comes the promise to revolutionize healthcare. There are projections that AI in healthcare will become a $188 billion industry worldwide by 2030. But what will that actually look like? How might AI be used in a medical context? And what can you expect from AI when it comes to your own personal healthcare?

Already, healthcare providers, surgeons and researchers are using AI to develop new drugs and treatments, diagnose complex conditions more efficiently and improve patients’ access to critical care — and this is only the beginning.

Our experts share how AI is being used in healthcare systems right now and what we can expect down the line as the innovation and experimentation continues.

What are the benefits of using AI in healthcare and hospitals?

Artificial intelligence describes the use of computers to do certain jobs that once required human intelligence. Examples include recognizing speech, making decisions and translating between different languages.

Machine learning is a branch of AI in which programs learn from data instead of following only hand-written rules. It uses extremely large datasets and algorithms to learn how to do complex tasks and solve problems similar to the way a human would.

When used together, AI and machine learning can help us be more efficient and effective than ever before. These tools are being used with thousands of datasets to improve our ability to research various diseases and treatment options. These tools are also used behind the scenes, even before patients arrive onsite for care, to improve the patient experience.

From radiology to neurology, emergency response services, administrative services and beyond, AI is changing the way we take care of ourselves and each other. In many ways, these innovations are forcing us to confront age-old questions: How can we continue to push ourselves to be better at what we already do well? And what’s left to learn as we embrace groundbreaking technology?

“AI is no longer just an interesting idea, but it’s being used in a real-life setting,” says Cleveland Clinic’s Chief Digital Officer Rohit Chandra, PhD. “Today, there’s a decent chance a computer can read an MRI or an X-ray better than a human, so it’s relatively advanced in those use-cases. But then at the other extreme, you’ve got generative AI like ChatGPT and all sorts of cool stuff you hear about in the media that’s fascinating technology, but less mature. The potential for it is there and it’s also quite promising.”

To that end, Cleveland Clinic has become a founding member of a global effort to create an AI Alliance — an international community of researchers, developers and organizational leaders all working together to develop, achieve and advance the safe and responsible use of AI. The AI Alliance, started by IBM and Meta, now includes over 90 leading AI technology and research organizations to support and accelerate open, safe and trusted generative AI research and development. Cleveland Clinic will lead the effort to accelerate and enhance the ethical use of AI in medical research and patient care.

An example of Cleveland Clinic’s commitment to AI innovation is the Discovery Accelerator, a 10-year strategic partnership between IBM and Cleveland Clinic, focused on accelerating biomedical discovery.

“Biomedical research is changing from a discipline that was once exclusively reliant on experiments in a lab done on a bench with animal models or biological samples to a discipline that involves heavy and fast computational tools,” says the Accelerator’s executive lead and Cleveland Clinic’s Chief Research Information Officer Lara Jehi, MD.

“That shift has happened because the data we now have at our disposal is way more than what we had even just 10 years ago,” she continues. “We can now measure in detail the genetic composition of every single cell in the human body. We can measure in detail how that genetic composition is translating itself to proteins that our body is making, and how those proteins are influencing the function of different organs in our body.”

AI and machine learning are being integrated into every step of the patient care process — from research to diagnosis, treatment and aftercare. And that means the field of healthcare is forever changing. These kinds of changes require new approaches to medical science and new skill sets for incoming nurses, doctors and surgeons interested in working in the medical field.

How fast is this technology moving? If we took our understanding of how the human body worked just 10 years ago and compared it to our understanding of how it works today with our new AI measurement tools, Dr. Jehi says that we’d have a completely different outlook on how the human body works.

“The advances in AI would be like taking a fuzzy black and white picture from the 1800s and comparing it to one from an iPhone 14 Pro with high definition and color,” she illustrates. “This is the difference with the scale and the resolution of the data that we have to work with now.”

So, what do AI and machine learning look like in practice? Well, depending on the area of focus, medical specialty and what’s needed, AI can be used in a variety of ways to impact and improve patient outcomes.

Diagnostics

Broken bones, breast cancer, brain bleeds — these conditions and many others, no matter how complex, need the right kind of tools to make a diagnosis. And often, a patient’s journey depends on receiving the right diagnosis.

“In radiology, technology and computers are used every day by doctors to identify diseases before anyone else,” shares diagnostic radiologist Po-Hao Chen, MD. “In many cases, a radiologist is the first one to call the disease when it happens.”

But how does AI fit into diagnostic testing? Well, let’s revisit the definition of machine learning.

Let’s say you show a computer program a series of X-rays that may or may not show bone fractures. After reviewing those photos, the program tries to guess which ones are bone fractures. When it gets some of those answers wrong, you give it the correct answers. Then, you feed it another series of X-rays and have it rerun the program again with that new knowledge. Over time, the program gets better at identifying what’s a bone fracture and what’s not. Each time this process occurs, it’s able to make those decisions faster, more efficiently and more effectively.
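The guess, correct and repeat loop described above can be sketched in a few lines. This is a toy illustration only: it assumes each X-ray has already been reduced to two made-up numeric features, and it uses a simple perceptron rather than the deep neural networks real imaging tools rely on. All names and numbers are hypothetical.

```python
# Toy supervised-learning loop: guess, compare with the correct answer, adjust.
# Each "X-ray" is a pair of invented features; label 1 = fracture, 0 = no fracture.

def predict(weights, bias, features):
    score = sum(w * x for w, x in zip(weights, features)) + bias
    return 1 if score > 0 else 0

def train(examples, rounds=20, learning_rate=0.1):
    weights, bias = [0.0, 0.0], 0.0
    for _ in range(rounds):  # each round is one more pass over the labeled images
        for features, label in examples:
            error = label - predict(weights, bias, features)  # 0 when the guess was right
            weights = [w + learning_rate * error * x for w, x in zip(weights, features)]
            bias += learning_rate * error
    return weights, bias

examples = [
    ([0.9, 0.8], 1),
    ([0.8, 0.9], 1),
    ([0.1, 0.2], 0),
    ([0.2, 0.1], 0),
]
weights, bias = train(examples)
accuracy = sum(predict(weights, bias, f) == y for f, y in examples) / len(examples)
print(accuracy)
```

The model only changes when it guesses wrong, which mirrors the "give it the correct answers" step in the description.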

Now, imagine that same process, but with hundreds or thousands of other datasets and other conditions. You can probably see how AI can help pinpoint and identify findings with the help of a radiologist’s expertise.

“It works like a second pair of eyes, like a shoulder-to-shoulder partner,” says Dr. Chen. “The combined team of human plus AI is when you get the best performance.”

The radiologists of the future will have a very different skill set compared to radiologists who excel today, notes Dr. Chen. And that future skill set will involve a significant portion of AI know-how.

“It wasn’t that long ago when almost all radiology was done on physical film that you held in your hand,” he adds. “As radiology became computerized, doctors had to enhance their skill set. AI is changing digital radiology the same way digital radiology changed film.”

Breast cancer

Breast cancer radiology has shown promising results using AI, according to breast cancer radiologist Laura Dean, MD.

“Everyone’s breast tissue is like their fingerprint or their handprint,” she clarifies. “In other words, breast cancer can look very different from one patient to another. So, what we look for are very subtle changes in the appearance of the patient’s own breast pattern. This is where we are really seeing an advantage of using AI in our interpretations.”

Breast cancer experts widely agree that annual screening mammography beginning at age 40 provides the most life-saving benefits.

“In breast imaging exams, we’re looking to see if the patterns in someone’s breast tissue look stable. A very important part of mammography interpretation is pattern recognition,” explains Dr. Dean. “Are there areas that are new or changing or different? Are there areas where the tissue just looks a little bit different or is there a unique finding in the breast?”

It’s up to the radiologist to review the 3D images and search for areas of density, calcifications (which can be early signs of cancer), architectural distortion (areas where tissue looks like it’s pulling the surrounding tissue) and other areas of concern.

“A lot of cancers are really, really subtle. They can be really hard to see, depending on the patient’s breast tissue, the type of breast cancer, how the tissue is evolving and how the cancer is developing,” Dr. Dean notes. “If every breast cancer were one of those obvious textbook spiculated masses with calcifications, it would make my job a lot easier. But as our technology continues to improve, many of the cancers we’re seeing now are really, really subtle. Those subtle cancers are the areas where I think AI has shown a lot of promise.”

There are now several AI-detection programs available for use in mammography. The first one to get approval from the U.S. Food and Drug Administration is iCAD’s ProFound AI, which can compare a patient’s mammography against a learned dataset to pinpoint and circle areas of concern and potential cancerous regions. When the AI identifies these areas, the program also highlights its confidence level that those findings could be malignant. For example, a confidence level of 92% means that in the dataset of known cancers from which the algorithm has trained, 92% of those that look like the case at hand were ultimately proven to be cancerous.
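One way to read the 92% example is as a simple proportion among look-alike training cases. The sketch below only illustrates that interpretation. It is not how ProFound AI computes its score, and the function name and the 25-case sample are invented for the example.

```python
def confidence_from_similar_cases(similar_case_outcomes):
    """Share of look-alike training cases that were ultimately proven malignant."""
    if not similar_case_outcomes:
        raise ValueError("need at least one similar case")
    return sum(similar_case_outcomes) / len(similar_case_outcomes)

# Hypothetical: 25 training cases resemble the current finding, 23 proved cancerous.
outcomes = [True] * 23 + [False] * 2
confidence = confidence_from_similar_cases(outcomes)
print(f"{confidence:.0%}")
```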

“The first step is identifying the finding, and then, using all of my expertise and my diagnostic criteria to determine if it’s a real finding,” explains Dr. Dean. “If it’s something that I think looks suspicious, then it warrants diagnostic imaging. We bring the patient back, do additional diagnostic views and try to see if that finding is reproducible — can we still see it? Where is it in the breast? And then, we have other tools such as targeted ultrasound where we would home in right on that area and see if there is a mass there, what the breast tissue looks like and then do a biopsy if needed.”

One benefit of AI programs is that they can function like a second set of eyes or a second reader. They improve the overall accuracy of the radiologist by decreasing callback rates and increasing specificity.

“We are seeing that the AI can guide the radiologist to work up a finding they might not have otherwise seen,” she says.

That’s especially important when you consider that earlier detection is crucial to helping identify cancers at the lowest possible stage, especially for aggressive molecular subtypes of breast cancer. Earlier detection may also help decrease the rate of interval cancers, or those that develop between mammogram screenings.

“I think it’s really beneficial to look at how AI is helping in the so-called near-miss cases. These are findings that are really hard to see for even a very experienced radiologist,” she continues. “In general, radiologists should be calling back less with the help of AI. And that’s the point: AI helps us tease out which cases are truly negative and which cases are truly suspicious and need to come back for further testing.”

Triage

Improving access to patient care can be critical, especially for emergencies. While we continue to work against bias in healthcare, AI is being used to triage medical cases by bumping those considered most critical to the top of the care chain.

“We do it on a disease-by-disease case,” says Dr. Chen. “We identify diseases that need to be caught as early as possible and then we develop or bring in technology to do that. One instance we’re doing that is with stroke.”

Stroke

Time is brain tissue — so every minute counts when someone is having a stroke.

“It’s not all or nothing. It’s a process that happens over time,” explains Dr. Chen. “The problem is that that timeframe is measured in minutes. Every minute that a patient doesn’t receive care or doesn’t receive intervention, a little bit more of their brain becomes irreversibly damaged.”

And that’s especially true when you have what’s called a large vessel occlusion, a kind of ischemic stroke that occurs when a major artery in the brain is blocked. That kind of stroke is treatable if it’s discovered in the right amount of time.

Now, out in the field, if EMS gets a call that they’re dealing with a possible stroke, they have the capability to trigger a stroke alert. This alert sets off a cascade of management events that prepares a team for a patient’s arrival and treatment plan — available surgeons are alerted, beds are made available, rooms are prepped for surgery, and so on.

“We add AI to the front end of that process,” he further explains. “When patients who have a suspected stroke receive a scan, AI now reviews those images before any human has an opportunity to even open the scan on their computer.”

As soon as the brain scan is taken, the image is sent to a server where the program, Viz.ai, analyzes it fast and efficiently using its neural network to arrive at a preliminary diagnosis.

“The AI is cutting down precious minutes by being the first and fastest agent in this process to review those images,” says Dr. Chen. “If you can find a patient that’s having a stroke that can be treated, then it makes absolute sense to do everything you possibly can do to mobilize resources to treat it.”

If a large vessel occlusion is found, the program begins coordinating care. It’s integrated into scheduling software, so it knows who’s on call and which doctors need to be notified right away.

“The AI software kicks off a series of communications to make sure everyone in the chain — all the doctors, neurosurgeons, neurologists, radiologists and so on — are aware that this is happening and we’re able to expedite care,” he continues.
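The "bump the most critical case to the top" idea can be sketched with a priority queue. This is a minimal illustration, not Viz.ai's actual design. The class, the method names and the `suspected_lvo` flag are hypothetical.

```python
import heapq
import itertools

class Worklist:
    """Reading queue in which scans flagged by the AI are read first."""

    def __init__(self):
        self._heap = []
        self._order = itertools.count()  # tie-breaker that preserves arrival order

    def add_scan(self, scan_id, suspected_lvo):
        priority = 0 if suspected_lvo else 1  # lower number is read first
        heapq.heappush(self._heap, (priority, next(self._order), scan_id))

    def next_scan(self):
        return heapq.heappop(self._heap)[2]

worklist = Worklist()
worklist.add_scan("scan-A", suspected_lvo=False)
worklist.add_scan("scan-B", suspected_lvo=False)
worklist.add_scan("scan-C", suspected_lvo=True)  # arrives last, flagged by the AI
reading_order = [worklist.next_scan() for _ in range(3)]
print(reading_order)
```

The flagged scan jumps ahead of earlier arrivals, while unflagged scans keep their original order.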

Complex measurements

A patient’s journey often doesn’t begin and end with diagnosis and treatment. Often, the journey involves watching, waiting and revisiting a diagnosis. For example, in the case of lung cancer, it’s common for oncologists to begin tracking the growth of nodules before they’re proven to be cancerous.

“That’s the whole point of doing screening programs,” says Dr. Chen. “The ones that grow are more likely to be cancer. The ones that don’t grow are more likely to be benign. That’s why they’re important to track over time. And most of that work is done manually by trained radiologists who go through every nodule that they can see in the lung. They track it, measure it and report on it.”

That kind of work can be tedious and time-consuming. That’s why it’s a focus area for using AI.

“We are actively looking at and trying to deploy a solution that can do the detection and measurement of these nodules in the lung automatically,” he adds. “That would help with the consistency and reproducibility of those measurements now with different kinds of cancer.”

Managing tasks and patient services

Like the scheduling software, AI is being utilized in small and large ways to free up physicians’ time behind the scenes and to help increase patients’ access to care. In his 2024 State of the Clinic address, Cleveland Clinic’s CEO and President Tom Mihaljevic, MD, highlighted several practical areas where AI is already being used both in and out of the exam room. Among them:

- An AI-powered chatbot can provide answers to common patient concerns. It can also help with scheduling and pulling up their previous medical history, past scheduling appointments, medication lists, previous doctors they’ve visited and so on.

- To cut down time on how many notes a provider needs to take during an appointment, a continuous learning AI program will use ambient listening to tune in to conversations between patients and their healthcare providers. This program can capture important notes, create visit summaries, assist with paperwork and generate instructions for prescription medications that the provider orders.

Broadly speaking, AI can also be beneficial when it comes to virtual appointments. Studies show that AI monitoring tools have been beneficial when it comes to seeing if patients are using medications like inhalers or insulin pens the way they’re prescribed and providing much-needed guidance when questions arise.

The future of AI in healthcare

The future of AI in healthcare, notes Dr. Jehi, is perhaps brightest in the realm of research.

“I’ve learned throughout this process that there is a lot more to be learned by using AI,” she says.

As an epilepsy specialist, Dr. Jehi researches how machine learning has changed epilepsy surgery as we know it.

Traditionally, if a patient with epilepsy continues to have seizures and isn’t responding to medication treatment, surgery becomes the next best option. As part of the surgical procedure, a surgeon would find the spot in the brain that’s triggering the seizures, make sure that spot isn’t critical for their functioning and then safely remove it.

“The way we used to make those decisions, we’d do a bunch of tests, we’d measure brainwaves, we’d take a picture of the brain, we’d look at how the radiologist or the EEG doctor interpreted the results, and then, we’d take the test results,” she shares. “Based on our own human experience, we’d decide if we want to do the surgery or not. But we were very limited in our ability to build collective knowledge.”

In essence, Dr. Jehi explains that doctors were stuck in a vacuum. They knew the expertise they’d gained over the years had been valuable on an individual level, but without looking at the bigger picture, it was hard to tell who would respond best to which surgical technique if they were coming in as a first-time patient.

Now, machine learning has filled in that gap in collective knowledge by pulling together all this patient data and distilling it down into one location. Doctors can access that information all in one place and use it to research the disease and the effectiveness of different treatment options, and use that information to inform their practice.

“From the patient perspective, nothing really much changes. They’re still getting the tests that they need for the clinical decision to be made,” she enthuses. “That is the beauty of what AI offers. It’s a task for us to get exponentially more insight from the same type of clinical data that we always had but we just didn’t know what to do with. AI is allowing us to deep dive into those tests and get more insights than just what our superficial initial interpretation was.”

Currently, Dr. Jehi is working to improve specialized AI predictive models that can accurately guide medical and surgical epilepsy decision-making.

“We are doing research to come up with a way to reduce these complex AI models to simpler tools that could be more easily integrated in clinical care,” she notes.

Dr. Jehi and other researchers have also identified biomarkers with the help of machine learning that determine which patients have a higher risk for epilepsy reoccurring after having surgery. And work is currently being done to fully automate detecting and locating brain segments that need to be removed during epilepsy surgery.

Right now, Dr. Jehi is focusing on understanding how a patient’s genetic composition and brain play into their epilepsy. How do they respond to epilepsy based on a number of factors? How do they respond to epilepsy surgery? And are these factors related to how well their surgery works down the road?

“We’ve been completely overlooking how nature works,” she says. “Until now, we haven’t really analyzed how the genetic makeup of individuals factors into all of this. With my research, we have a lot of evidence that makes us believe that genetic makeup is actually quite important in driving surgical outcomes.”

With AI and machine learning, Dr. Jehi hopes to continue pushing this research to the next level by looking at increasingly larger groups of patients.

Our AI journey

As we continue to improve our understanding of AI and further our pursuit of innovation and discovery, it’s up to healthcare providers around the world to question how best to utilize the tools at their disposal. Already, the World Health Organization (WHO) has issued additional guidelines for safe and ethical AI use in the healthcare space — a continued effort that builds off their original 2021 guidelines but with added caution around large language models like ChatGPT and Bard.

But when AI is used to further research and improve patient care with ethics and safety as the foundation of those efforts, its potential for the future of healthcare knows no bounds.

“I see AI as a path forward that helps us make sure that no data is left behind,” encourages Dr. Jehi. “When we’re doing research and we’re developing a new predictive model, or we want to better understand how a disease progresses, or we want to develop a new drug, or we want to just generate new knowledge — that’s what research is. It’s the generation of new knowledge. The more data that we can put in, the more our chances are of finding something new and of those things actually being meaningful.”

Article link: https://health.clevelandclinic.org/ai-in-healthcare?

Aug 30, 2024

SUITA, Japan — The road to 6G wireless networks just got a little smoother. Scientists have made a significant leap forward in terahertz technology, potentially revolutionizing how we communicate in the future. An international team has developed a tiny silicon device that could double the capacity of wireless networks, bringing us closer to the promise of 6G and beyond.

Imagine a world where you could download an entire season of your favorite show in seconds or where virtual reality feels as real as, well, reality. This is what scientists believe terahertz technology can potentially bring to the world. Their work is published in the journal Laser & Photonics Reviews.

This tiny marvel, a silicon chip smaller than a grain of rice, operates in a part of the electromagnetic spectrum that most of us have never heard of: the terahertz range. Think of the electromagnetic spectrum as a vast highway of information.

We’re currently cruising along in the relatively slow lanes of 4G and 5G. Terahertz technology? That’s the express lane, promising speeds that make our current networks look like horse-drawn carriages in comparison.

Terahertz waves occupy a sweet spot in the electromagnetic spectrum between microwaves and infrared light. They’ve long been seen as a promising frontier for wireless communication because they can carry vast amounts of data. However, harnessing this potential has been challenging due to technical limitations.

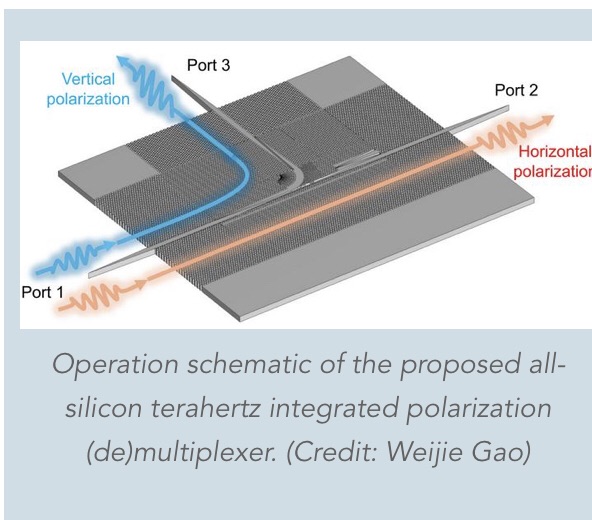

The researchers’ new device, called a “polarization multiplexer,” tackles one of the key hurdles in terahertz communication: efficiently managing different polarizations of terahertz waves. Polarization refers to the orientation of the wave’s oscillation. By cleverly manipulating these polarizations, the team has essentially created a traffic control system for terahertz waves, allowing more data to be transmitted simultaneously.

If that sounds like technobabble, think of it as a traffic cop for data, able to direct twice as much information down the same road without causing a jam.

“Our proposed polarization multiplexer will allow multiple data streams to be transmitted simultaneously over the same frequency band, effectively doubling the data capacity,” explains lead researcher Professor Withawat Withayachumnankul from the University of Adelaide, in a statement.
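The doubling can be illustrated with an idealized model. The sketch assumes two perfectly orthogonal polarizations (labeled H and V here), no loss and no crosstalk. The real device has a finite loss and extinction ratio, and every name below is illustrative.

```python
# Two independent data streams share one frequency band by riding on
# orthogonal polarizations; projecting onto each axis recovers each stream.
H = (1.0, 0.0)  # horizontal polarization, unit vector
V = (0.0, 1.0)  # vertical polarization, unit vector

def dot(a, b):
    return a[0] * b[0] + a[1] * b[1]

def multiplex(symbol_h, symbol_v):
    """Combine one symbol from each stream into a single field vector."""
    return (symbol_h * H[0] + symbol_v * V[0],
            symbol_h * H[1] + symbol_v * V[1])

def demultiplex(field):
    """Recover both symbols by projecting onto each polarization."""
    return dot(field, H), dot(field, V)

stream_h = [1, 0, 1, 1]
stream_v = [0, 0, 1, 0]
channel = [multiplex(a, b) for a, b in zip(stream_h, stream_v)]
received = [demultiplex(field) for field in channel]
recovered_h = [int(h) for h, _ in received]
recovered_v = [int(v) for _, v in received]
print(recovered_h, recovered_v)
```

Four channel uses carry eight symbols, which is the sense in which capacity doubles.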

At the heart of this innovation is a compact silicon chip measuring just a few millimeters across. Despite its small size, this chip can separate and combine terahertz waves with different polarizations with remarkable efficiency. It’s like having a tiny, incredibly precise sorting machine for light waves.

To create this device, the researchers used a 250-micrometer-thick silicon wafer with very high electrical resistance. They employed a technique called deep reactive-ion etching to carve intricate patterns into the silicon. These patterns, consisting of carefully designed holes and structures, form what’s known as an “effective medium” – a material that interacts with terahertz waves in specific ways.

The team then subjected their device to a battery of tests using specialized equipment. They used a vector network analyzer with extension modules capable of generating and detecting terahertz waves in the 220-330 GHz range with minimal signal loss. This allowed them to measure how well the device could handle different polarizations of terahertz waves across a wide range of frequencies.

“This large relative bandwidth is a record for any integrated multiplexers found in any frequency range. If it were to be scaled to the center frequency of the optical communications bands, such a bandwidth could cover all the optical communications bands.”

In their experiments, the researchers demonstrated that their device could effectively separate and combine two different polarizations of terahertz waves with high efficiency. The device showed an average signal loss of only about 1 decibel – a remarkably low figure that indicates very little energy is wasted in the process. Even more impressively, the device maintained a polarization extinction ratio (a measure of how well it can distinguish between different polarizations) of over 20 decibels across its operating range. This is crucial for ensuring that data transmitted on different polarizations doesn’t interfere with each other.
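The decibel figures above translate into plain fractions. A minimal sketch, assuming the quoted values are power ratios (the usual convention for insertion loss and extinction ratio). The function name is illustrative.

```python
def db_to_power_ratio(db):
    """Convert decibels to a linear power ratio."""
    return 10 ** (db / 10)

# 1 dB of loss: fraction of the input power that still gets through.
transmitted_fraction = 1 / db_to_power_ratio(1.0)

# 20 dB extinction ratio: fraction of power leaking into the wrong polarization.
leakage_fraction = 1 / db_to_power_ratio(20.0)

print(f"transmitted: {transmitted_fraction:.1%}, leakage: {leakage_fraction:.1%}")
```

So about 1 dB of loss means roughly four-fifths of the power survives, and an extinction ratio above 20 dB means less than 1% of one stream's power ends up in the other.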

To put the potential of this technology into perspective, the researchers conducted several real-world tests. In one demonstration, they used their device to transmit two separate high-definition video streams simultaneously over a terahertz link. This showcases the technology’s ability to handle multiple data streams at once, effectively doubling the amount of information that can be sent over a single channel.

But the team didn’t stop there. In more advanced tests, they pushed the limits of data transmission speed. Using a technique called on-off keying, they achieved error-free data rates of up to 64 gigabits per second. When they employed a more complex modulation scheme (16-QAM), they reached staggering data rates of up to 190 gigabits per second. That’s roughly equivalent to downloading 24 gigabytes – or about six high-definition movies – in a single second. It’s a staggering leap from current wireless technologies.
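The conversion behind that comparison is simple arithmetic. The sketch assumes 8 bits per byte with no protocol overhead, and a movie size of 4 gigabytes, which is what the "24 gigabytes, about six movies" comparison implies.

```python
BITS_PER_BYTE = 8
ASSUMED_MOVIE_SIZE_GB = 4  # assumption implied by the comparison, not a measured value

def gigabits_to_gigabytes(gigabits):
    return gigabits / BITS_PER_BYTE

gigabytes_per_second = gigabits_to_gigabytes(190)
movies_per_second = gigabytes_per_second / ASSUMED_MOVIE_SIZE_GB
print(gigabytes_per_second, movies_per_second)
```

The result, 23.75 gigabytes per second, rounds to the 24 gigabytes quoted.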

Still, the researchers say it’s not just about speed. This device is also incredibly versatile.

“This innovation not only enhances the efficiency of terahertz communication systems but also paves the way for more robust and reliable high-speed wireless networks,” adds Dr. Weijie Gao, a postdoctoral researcher at Osaka University and co-author of the study.

The implications of this technology stretch far beyond faster Netflix downloads. We’re talking about advancements that could revolutionize augmented reality, enable seamless remote surgery, or create virtual worlds so immersive you might forget they’re not real. The best part? This isn’t some far-off dream.

“We anticipate that within the next one to two years, researchers will begin to explore new applications and refine the technology,” says Professor Masayuki Fujita of Osaka University.

So, while you might not find a terahertz chip in your next smartphone upgrade, don’t be surprised if, in the not-too-distant future, you’re streaming holographic video calls or controlling smart devices with your mind. The terahertz revolution is coming, and it’s bringing a future that’s faster, more connected, and more exciting than we ever imagined.

Paper Summary

Methodology

The researchers created their device using a high-purity silicon wafer, carefully etched to create precise microscopic structures. They employed a technique called deep reactive-ion etching, which allowed them to shape the silicon at an incredibly small scale. The key to the device’s performance is its use of an “effective medium” – a material engineered to have specific properties by creating patterns smaller than the wavelength of the terahertz waves being used.

Key Results

The team’s polarization multiplexer demonstrated impressive performance across a wide range of terahertz frequencies (220 to 330 GHz). It effectively separated two polarizations of light with minimal signal loss. In practical demonstrations, they successfully transmitted two separate high-definition video streams simultaneously without interference. The device also achieved data transmission rates of up to 155 gigabits per second, far exceeding current wireless technologies.

Study Limitations

Despite the promising results, challenges remain. Terahertz waves have limited range and struggle to penetrate obstacles, potentially restricting their use to short-range applications. Generating and detecting terahertz waves efficiently is still a technical hurdle. The researchers noted that refining the manufacturing process could further improve the device’s performance by reducing imperfections.

Discussion & Takeaways

This research marks a significant advancement in terahertz communications. The ability to efficiently manipulate terahertz waves in a compact device could be crucial for future wireless technologies. The wide frequency range of operation provides flexibility for various applications. The researchers suggest their approach could potentially be scaled to even higher frequencies, opening up new possibilities in fields like sensing and imaging.

“Within a decade, we foresee widespread adoption and integration of these terahertz technologies across various industries, revolutionizing fields such as telecommunications, imaging, radar, and the Internet of things,” Prof. Withayachumnankul predicts.

Funding & Disclosures

This research was supported by grants from the Australian Research Council and Japan’s National Institute of Information and Communications Technology. The team also received funding from the Core Research for Evolutional Science and Technology program of the Japan Science and Technology Agency. The authors declared no conflicts of interest related to this work.

Article link: https://studyfinds.org/6g-record-breaking-data-speed/

Among the changes is a new identity proofing option that doesn’t use biometrics like facial recognition.

NIST has a new draft out of a long-awaited update to its digital identity guidelines.

Published Wednesday, the standards contain “foundational process and technical requirements” for digital identity, Ryan Galluzzo, the digital identity program lead for NIST’s Applied Cybersecurity Division, told Nextgov/FCW. That means verifying that someone is who they say they are online.

The new draft features changes to make room for passkeys and mobile driver’s licenses, new options for identifying people without using biometrics like facial recognition and a more detailed focus on metrics and continuous evaluation of systems.

The Office of Management and Budget directs agencies to follow these guidelines, meaning that changes may be felt by millions of Americans who log in online to access government benefits and services. The current iteration dates to 2017.

NIST published a draft update in late 2022 and subsequently received about 4,000 line items of feedback, said Galluzzo. NIST is accepting comments on this latest iteration through October 7.

The hope is to get the final version out sometime next year, although that timeline is dependent on the amount of feedback the agency receives, he said.

Among the changes are new details about how to leverage user-controlled digital wallets that store mobile driver’s licenses to prove identity online. NIST also folded an existing supplement on syncable authenticators, or passkeys, issued earlier this year into the digital identity guidelines.

The latest draft also features more changes around facial recognition and biometrics, which have often been the subject of debate and controversy in government services bound to these guidelines.

Changes meant to offer an identity proofing option that doesn’t involve biometrics for low-risk situations comprised a big focus of the 2022 draft update.

NIST tinkered with that baseline further in the latest draft after it got feedback that the standard still had “a lot of friction for lower- to moderate-risk applications,” said Galluzzo.

NIST also added a new way to reach identity assurance level 2 — an identity proofing baseline that is currently met commonly online using tools like facial recognition — without those biometrics.

Instead, organizations could now send an enrollment code to a physical postal address that can be verified with an authoritative source, said Galluzzo, who added that the authors also tried to streamline the section to make it clear what the options are, with and without biometrics.
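A mailed enrollment code could work roughly as sketched below. This is an illustration under stated assumptions, not something the NIST draft prescribes: the code length, the alphabet, the 14-day validity window and all function names are placeholders chosen for the example.

```python
import hashlib
import hmac
import secrets
import time

CODE_ALPHABET = "ABCDEFGHJKLMNPQRSTUVWXYZ23456789"  # omits look-alike characters
PLACEHOLDER_VALIDITY_SECONDS = 14 * 24 * 3600  # arbitrary, not taken from the guidelines

def issue_enrollment_code(length=8, now=None):
    """Return the code to print on the letter and the record to keep server-side."""
    now = time.time() if now is None else now
    code = "".join(secrets.choice(CODE_ALPHABET) for _ in range(length))
    record = {
        "digest": hashlib.sha256(code.encode()).hexdigest(),  # store a hash, not the code
        "expires_at": now + PLACEHOLDER_VALIDITY_SECONDS,
    }
    return code, record

def verify_enrollment_code(submitted, record, now=None):
    """Check the code the applicant types in against the stored record."""
    now = time.time() if now is None else now
    if now > record["expires_at"]:
        return False
    digest = hashlib.sha256(submitted.strip().upper().encode()).hexdigest()
    return hmac.compare_digest(digest, record["digest"])  # constant-time comparison

code, record = issue_enrollment_code(now=0)
print(verify_enrollment_code(code, record, now=60))
```

Receiving the letter is what ties the applicant to the verified postal address, so no biometric comparison is involved.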

The latest draft also has an updated section explaining four ways to do identity proofing, including remote and in-person options with or without supervision or help from an employee.

Other changes in the latest draft include specific recommended metrics for agencies to use for the continuous evaluation of their systems.

That focus aligns with the performance requirements for biometrics added in the 2022 draft and with a push for agencies to look at the potential impact of their identity systems on the communities and individuals using them, as well as on their agencies’ mission delivery.

“Our assurance levels are baselines, and you should be… focusing on the effectiveness of the controls you have in place because you might need to modify things to support your risk threats profile or to support your user groups,” he said.

The latest draft also features new redress requirements for when things go wrong.

“You can’t simply say, ‘Look, that’s a problem with our third-party vendor,’” said Galluzzo.

Big-picture, weighing the views of stakeholders that prioritize security and others focused on accessibility is difficult, he said.

“Being able to take those two points of view and balance those into something that is viable, realistic and implementable is where the biggest challenge is with identity across the board,” said Galluzzo.

That tension came into the forefront during the pandemic, when many government services were pushed online.

In the unemployment insurance system, for example, many states installed identity proofing reliant on facial recognition when they faced schemes from fraudsters.

The existing NIST guidance doesn’t offer many alternatives to biometrics for digital identity proofing, but facial recognition has increasingly come under scrutiny with regard to equity, privacy and other concerns.

The Labor Department’s own Inspector General warned of “urgent equity and security concerns” around the use of facial recognition in a 2023 memo, pointing to testing done by NIST in 2019 that found “nearly all” algorithms have performance disparities based on demographics like race and gender, as a NIST official told lawmakers last year. That varies depending on the algorithm, and the technology has also generally improved since 2019.

Jason Miller, deputy director for management at the Office of Management and Budget, said in a statement that “NIST has developed strong and fair draft guidelines that, when finalized, will help federal agencies better defend against evolving threats while providing critical benefits and services to the American people, particularly those that need them most.”

The White House itself has also been handling these tensions as it’s been crafting a long-awaited executive order meant to address fraud in public benefits.

“Everyone should be able to lawfully access government services, regardless of their chosen methods of identification,” said NIST Director Laurie Locascio in a statement. “These improved guidelines are intended to help organizations of all kinds manage risk and prevent fraud while ensuring that digital services are lawfully accessible to all.”

Article link: https://www.nextgov.com/digital-government/2024/08/nist-releases-new-draft-digital-identity-proofing-guidelines/399071/?

RESEARCH Published Aug 8, 2024

Key Takeaways

- One of the aspects of artificial intelligence (AI) that makes it difficult to regulate is algorithmic opacity, or the potential lack of understanding of exactly how an algorithm may use, collect, or alter data or make decisions based on those data.

- Potential regulatory solutions include (1) minimizing the data that companies can collect and use and (2) mandating audits and disclosures of the use of AI.

- A key issue is finding the right balance of regulation and innovation. Focusing on the data used in AI, the purposes of the use of AI, and the outcomes of the use of the AI can potentially alleviate this concern.

The European Union (EU)’s Artificial Intelligence (AI) Act is a landmark piece of legislation that lays out a detailed and wide-ranging framework for the comprehensive regulation of AI deployment in the European Union covering the development, testing, and use of AI.[1] This is one of several reports intended to serve as succinct snapshots of a variety of interconnected subjects that are central to AI governance discussions in the United States, in the European Union, and globally. This report, which focuses on AI impacts on privacy law, is not intended to provide a comprehensive analysis but rather to spark dialogue among stakeholders on specific facets of AI governance, especially as AI applications proliferate worldwide and complex governance debates persist. Although we refrain from offering definitive recommendations, we explore a set of priority options that the United States could consider in relation to different aspects of AI governance in light of the EU AI Act.

AI promises to usher in an era of rapid technological evolution that could affect virtually every aspect of society in both positive and negative manners. The beneficial features of AI require the collection, processing, and interpretation of large amounts of data—including personal and sensitive data. As a result, questions surrounding data protection and privacy rights have surfaced in the public discourse.

Privacy protection plays a pivotal role in individuals maintaining control over their personal information and in agencies safeguarding individuals’ sensitive information and preventing fraudulent use of and unauthorized access to individuals’ data. Despite this, the United States lacks a comprehensive federal statutory or regulatory framework governing data rights, privacy, and protection. Currently, the only consumer protections that exist are state-specific privacy laws and federal laws that offer limited protection in specific contexts, such as health information. The fragmented nature of a state-by-state data rights regime can make compliance unduly difficult and can stifle innovation.[2] For this reason, President Joe Biden called on Congress to pass bipartisan legislation “to better protect Americans’ privacy, including from the risks posed by AI.”[3]

There have been several attempts at comprehensive federal privacy legislation, including the American Privacy Rights Act (APRA), which aims to protect the collection, transfer and use of Americans’ data in most circumstances.[4] Although some data privacy issues could be addressed in legislation, there would still be gaps in data protection because of AI’s unique attributes. In this report, we identify those gaps and highlight possible options to address them.

Nature of the Problem: Privacy Concerns Specific to AI

From a data privacy perspective, one of AI’s most concerning aspects is the potential lack of understanding of exactly how an algorithm may use, collect, or alter data or make decisions based on those data.[5] This potential lack of understanding is referred to as algorithmic opacity, and it can result from the inherent complexity of the algorithm, purposeful concealment by a company using trade secrets law to protect its algorithm, or the use of machine learning to build the algorithm, in which case even the algorithm’s creators may not be able to predict how it will perform.[6] Algorithmic opacity can make it difficult or impossible to see how data inputs are being transformed into data or decision outputs, limiting the ability to inspect or regulate the AI in question.[7]

There are other general privacy concerns that take on unique aspects related to AI or that are further exacerbated by the unique characteristics of AI:[8]

- Data repurposing refers to data being used beyond their intended and stated purpose and without the data subject’s knowledge or consent. In a general privacy context, an example would be when contact information collected for a purchase receipt is later used for marketing purposes for a different product. In an AI context, data repurposing can occur when biographical data collected from one person are fed into an algorithm that then learns from the patterns associated with that person’s data. For example, the stimulus package in the wake of the 2008 financial crisis included funding for digitization of health care records for the purpose of easily transferring health care data between health care providers, a benefit for the individual patient.[9] However, hospitals and insurers might use medical algorithms to determine individual health risks and eligibility to receive medical treatment, a purpose not originally intended.[10] A particular problem with data privacy in AI use is that existing data sets collected over the past decade may be used and recombined in ways that could not be reasonably foreseen and incorporated into decisionmaking at the time of collection.[11]

- Data spillovers occur when data are collected on individuals who were not intended to be included when the data were collected. An example would be the use of AI to analyze a photograph taken of a consenting individual that also includes others who have not consented. Another example may be the relatives of a person who uploads their genetic data profile to a genetic data aggregator, such as 23andMe.

- Data persistence refers to data existing longer than reasonably anticipated at the time of collection and possibly beyond the lifespan of the human subjects who created the data or consented to their use. This issue is caused by the fact that once digital data are created, they are difficult to delete completely, especially if the data are incorporated into an AI algorithm and repackaged or repurposed.[12] As the costs of storing and maintaining data have plummeted over the past decade, even the smallest organizations have the ability to indefinitely store data, adding to the occurrence of data persistence issues.[13] This is concerning from a privacy point of view because privacy preferences typically change over time. For example, individuals tend to become more conservative with their privacy preferences as they grow older.[14] With the issue of data persistence, consent given in early adulthood may lead to data being used over and after the course of the individual’s lifetime.

Possible Options to Address AI’s Unique Privacy Risks

In most comprehensive data privacy proposals, the foundation is typically set by providing individuals with fundamental rights over their data and privacy, from which the remaining system unfolds. The EU General Data Protection Regulation (GDPR) and the California Consumer Privacy Act—two notable comprehensive data privacy regimes—both begin with a general guarantee of fundamental rights protection, including rights to privacy, personal data protection, and nondiscrimination.[15] Specifically, the data protection rights include the right to know what data have been collected, the right to know what data have been shared with third parties and with whom, the right to have data deleted, and the right to have incorrect data corrected.

Prior to the proliferation of AI, good data management systems and procedures could make compliance with these rights attainable. However, the AI privacy concerns listed above render full compliance more difficult. Specifically, algorithmic opacity makes it difficult to know how data are being used, so it becomes more difficult to know when data have been shared or whether they have been completely deleted. Data repurposing makes it difficult to know how data have been used and with whom they have been shared. Data spillover makes it difficult to know exactly what data have been collected on a particular individual. These issues, along with the plummeting cost of data storage, exacerbate data persistence, or the maintenance of data beyond their intended use or purpose.

The unique problems associated with AI give rise to several options for resolving or mitigating these issues. Here, we provide summaries of these options and examples of how they have been enacted within other regulatory regimes.

Data Minimization and Limitation

Data minimization refers to the practice of limiting the collection of personal information to that which is directly relevant and necessary to accomplish specific and narrowly identified goals.[16] This stands in contrast to the approach used by many companies today, which is to collect as much information as possible. Under the tenets of data minimization, the use of any data would be legally limited only to the use identified at the time of collection.[17] Data minimization is also a key privacy principle in reducing the risks associated with privacy breaches.
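As a rough illustration of how these two tenets (collecting only what a stated purpose requires, and limiting later use to that purpose) might look inside a data pipeline, consider the minimal Python sketch below. Everything in it is an assumption made for illustration: the `ALLOWED_FIELDS` mapping, the purpose names, and the `collect` and `use` helpers are hypothetical and are not drawn from APRA, the GDPR, or any other framework discussed in this report.

```python
from dataclasses import dataclass

# Hypothetical mapping from a declared purpose to the fields it needs.
# A real organization would derive this from its own legal analysis.
ALLOWED_FIELDS = {
    "purchase_receipt": {"email", "order_id"},
    "shipping": {"name", "address", "order_id"},
}


@dataclass(frozen=True)
class StoredRecord:
    purpose: str  # the purpose declared at the time of collection
    data: dict    # only the fields that purpose requires


def collect(raw: dict, purpose: str) -> StoredRecord:
    """Data minimization: discard every field the purpose does not need."""
    allowed = ALLOWED_FIELDS[purpose]
    kept = {key: value for key, value in raw.items() if key in allowed}
    return StoredRecord(purpose=purpose, data=kept)


def use(record: StoredRecord, purpose: str) -> dict:
    """Purpose limitation: refuse any use other than the declared one."""
    if purpose != record.purpose:
        raise PermissionError(
            f"data collected for {record.purpose!r} cannot be used for {purpose!r}"
        )
    return dict(record.data)
```

In this sketch, a record collected for a purchase receipt would never store a birthdate that happened to be submitted with it, and a later attempt to reuse the record for marketing would raise an error rather than silently succeed. Actual compliance would depend on the governing statute and on how an organization defines its purposes.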

Several privacy frameworks incorporate the concept of data minimization. The proposed APRA includes data minimization standards that prevent collection, processing, retention, or transfer of data “beyond what is necessary, proportionate, or limited to provide or maintain” a product or service.[18] The EU GDPR notes that “[p]ersonal data should be adequate, relevant and limited to what is necessary for the purposes for which they are processed.”[19] The EU AI Act also reaffirms that the principles of data minimization and data protection apply to AI systems throughout their entire life cycles whenever personal data are processed.[20] The EU AI Act also imposes strict rules on collecting and using biometric data. For example, it prohibits AI systems that “create or expand facial recognition databases through the untargeted scraping of facial images from the internet or CCTV [closed-circuit television] footage.”[21]

As another example of a way to incorporate data minimization, APRA would establish a duty of loyalty, which prohibits covered entities from collecting, using, or transferring covered data beyond what is reasonably necessary to provide the service requested by the individual, unless the use is one of the explicitly permissible purposes listed in the bill.[22] Among other things, the bill would require covered entities to get a consumer’s affirmative, express consent before transferring their sensitive covered data to a third party, unless a specific exception applies.

Algorithmic Impact Assessments for Public Use

Algorithmic impact assessments (AIAs) are intended to require accountability for organizations that deploy automated decisionmaking systems.[23] AIAs counter the problem of algorithmic opacity by surfacing potential harms caused by the use of AI in decisionmaking and call for organizations to take practical steps to mitigate any identifiable harms.[24] An AIA would mandate disclosure of proposed and existing AI-based decision systems, including their purpose, reach, and potential impacts on communities, before such algorithms were deployed.[25] When applied to public organizations, AIAs shed light on the use of the algorithm and help avoid political backlash regarding systems that the public does not trust.[26]

APRA includes a requirement that large data holders conduct AIAs that weigh the benefits of their privacy practices against any adverse consequences.[27] These assessments would describe the entity’s steps to mitigate potential harms resulting from its algorithms, among other requirements. The bill requires large data holders to submit these AIAs to the Federal Trade Commission and make them available to Congress on request. Similarly, the EU GDPR mandates data protection impact assessments (PIAs) to highlight the risks of automated systems used to evaluate people based on their personal data.[28] The AIAs and the PIAs are similar, but they differ substantially in their scope: While PIAs focus on rights and freedoms of data subjects affected by the processing of their personal data, AIAs address risks posed by the use of nonpersonal data.[29] The EU AI Act further expands the notion of impact assessment to encompass broader risks to fundamental rights not covered under the GDPR. Specifically, the EU AI Act mandates that bodies governed by public law, private providers of public services (such as education, health care, social services, housing, and administration of justice), and banking and insurance service providers using AI systems must conduct fundamental rights impact assessments before deploying high-risk AI systems.[30]

Algorithmic Audits

Whereas the AIA assesses impact, an algorithmic audit is “a structured assessment of an AI system to ensure it aligns with predefined objectives, standards, and legal requirements.”[31] In such an audit, the system’s design, inputs, outputs, use cases, and overall performance are examined thoroughly to identify any gaps, flaws, or risks.[32] A proper algorithmic audit includes definite and clear audit objectives, such as verifying performance and accuracy, as well as standardized metrics and benchmarks to evaluate a system’s performance.[33] In the context of privacy, an audit can confirm that data are being used within the context of the subjects’ consent and the tenets of applicable regulations.[34]

During the initial stage of an audit, the system is documented, and processes are designated as low, medium, or high risk depending on such factors as the context in which the system is used and the type of data it relies on.[35] After documentation, the system is assessed on its efficacy, bias, transparency, and privacy protection.[36] Then, the outcomes of the assessment are used to identify actions that can lower any identified risks. Such actions may be technical or nontechnical in nature.[37]

This notion of algorithmic audit is embedded in the conformity assessment foreseen by the EU AI Act.[38] The conformity assessment is a formal process in which a provider of a high-risk AI system has to demonstrate compliance with requirements for such systems, including those concerning data and data governance. Specifically, the EU AI Act requires that training, validation, and testing datasets are subject to data governance and management practices appropriate for the system’s intended purpose. In the case of personal data, those practices should concern the original purpose and origin as well as data collection processes.[39] Upon completion of the assessment, the entity is required to draft written EU Declarations of Conformity for each relevant system, and these must be maintained for ten years.[40]

Mandatory Disclosures