By Dan Drollette Jr | March 12, 2026

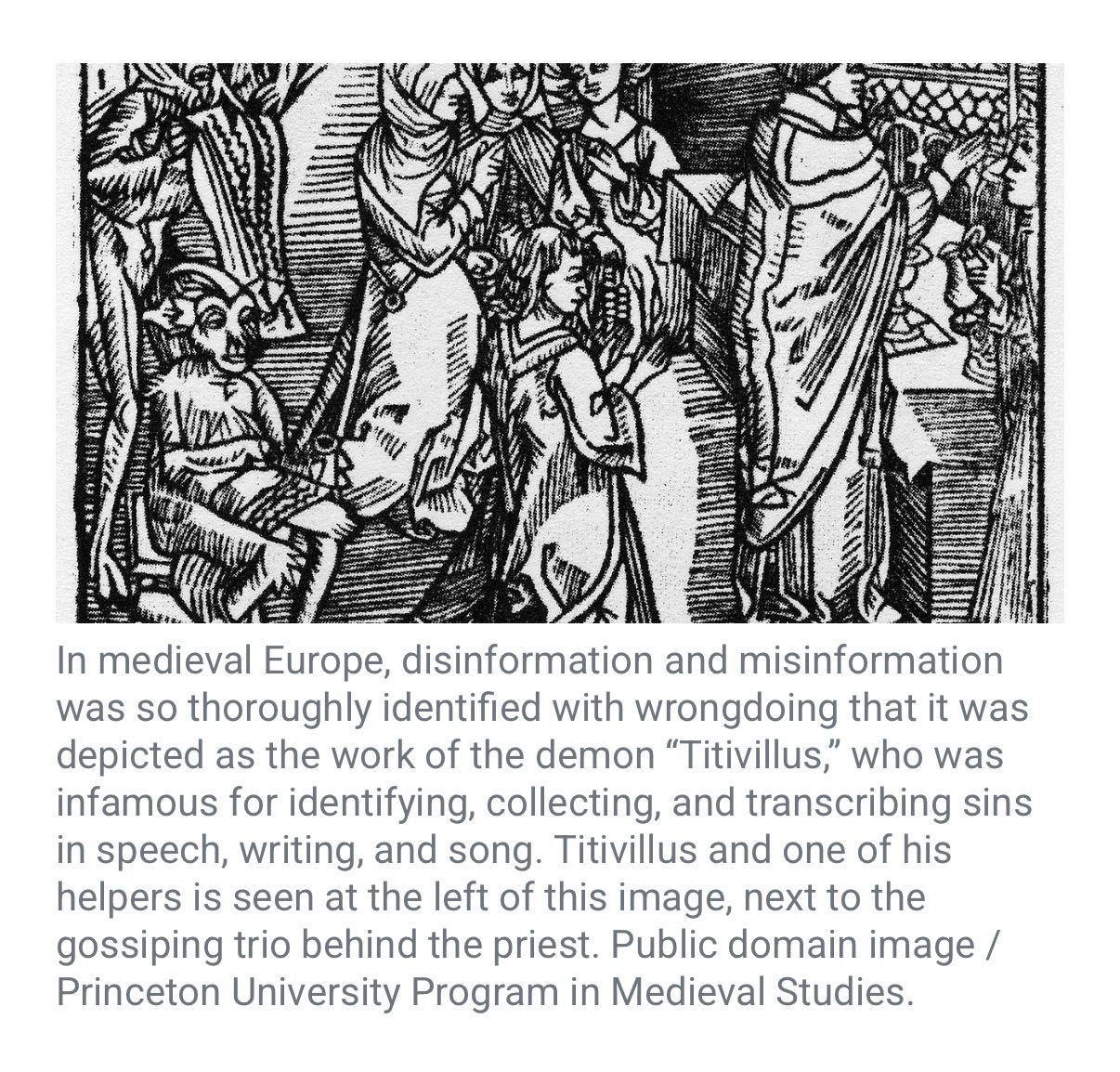

Deception, disinformation, and fakery are nothing new in the world.

Long before the Common Era, the ancient Greeks used deceptive tactics against their enemies during the Trojan War, when they constructed a gigantic, hollow wooden statue of a horse with a small, select team of soldiers hidden inside. Sometime in the 12th or 13th century BCE, they left the horse, with its hidden cargo, immediately outside the gates of Troy, their enemy’s capital city, and pretended to sail away. The city’s defenders then hauled the horse inside the city walls as a victory trophy. Later that night, the hidden soldiers crept out of the horse and opened the gates of the city to the rest of the Greek army (which had returned under the cover of night), allowing them to enter and utterly destroy the city.

The ruse was so successful, in fact, that the phrase “Trojan horse” entered the lexicon to describe any strategy that tricks a target into letting an enemy enter a protected inner sanctum.

Thousands of years later, that phrase is still used. In the world of computing, a “Trojan horse attack” involves a malicious computer program designed to disguise itself as a harmless, legitimate piece of software and to trick users into willingly letting it into a secure system, where it can then steal data, create backdoors, install other malware, or spy on user activity. In the cyber world, Trojan horse attacks have likely been around since at least 1971, when they were mentioned in passing in one of the first Unix software manuals.

But while trickery is old, what is new is how realistic-looking and realistic-sounding “deepfake” photos and videos, synthetic feeds, and fabricated accounts can now be made, and the sheer volume that can be produced on relatively short notice. With the rapid advance of artificial intelligence (AI), the situation is likely to only get worse, overwhelming any timely evidence-based effort to sort out what is real and what is not in the information ecosystem. “Consequently, AI brings a significant possibility of elevating nuclear escalation risks by amplifying disinformation, overloading analysts, compressing decision timelines, and exploiting cognitive and institutional vulnerabilities in sociotechnical systems for nuclear command and control,” writes analyst (and former Bulletin Science and Security Board member) Herb Lin in his essay for this issue of the magazine.

Lin lays out three hypothetical scenarios where AI could have a role in nuclear escalation and act as a threat multiplier—by shaping perceptions, contaminating intelligence, and destabilizing the nuclear “signaling” that nuclear-armed countries use to indicate their intentions to one another. His article, “AI in the information ecosystem and its impact on nuclear escalation,” is chilling in its graphic, specific, concrete detail, showing how this technology could cause events to rapidly spiral out of control.

And while the scenarios Lin portrays may seem implausible at first, recent history shows otherwise—as can be seen in “At the brink: How Moscow’s ‘dirty bomb’ disinformation campaign risked a NATO-Russia war in October 2022.” The author, Polina Sinovets, argues that Russian president Vladimir Putin used deepfakes and other disinformation to promote phony allegations that Ukraine was going to detonate a “dirty bomb” on the battlefield in the autumn of 2022, in order to justify in advance his own possible use of a Russian tactical nuclear weapon. His goal was to intimidate the Ukrainians and their allies, so that Russian forces would not be wiped out at a particularly critical juncture of the war, when Russia was attempting—and initially bungling—the withdrawal of 20,000 to 30,000 of its troops from a large part of southern Kherson and across the Dnipro River to safety.

And the role of modern disinformation is not confined to warfare. The deepfake zeitgeist is percolating throughout society, leading to a general distrust of evidence and expertise, which seriously imperils just about everything, from healthcare to climate change to journalism and democracy. In such an environment, conspiracy theories flourish, even when they are unsupported by any hard facts. And without a basic shared reality, it is hard to get much accomplished: “The US Department of Health and Human Services is now run by conspiracy theorists who believe that the American public health system is hiding key data on vaccine safety and who spend their days spreading health misinformation,” as Lisa Fazio notes in her article “How to counter health misinformation when it’s coming from the top.”

But all is not lost. Disinformation and misinformation may be a complex problem with no simple solutions—made particularly difficult when it is spread by people in power (and at a time when social media companies seem to be abandoning any effort at fact-checking). But by targeting the supply, demand, distribution, and uptake of misinformation, it is possible to improve the information environment and help people make informed decisions.

And sometimes, the act of improving the information environment means calling out misinformation, disinformation, and conspiracy-mongering, even when it comes from one’s nearest and dearest. It can feel awkward but still must be done, says Joseph Uscinski, a political science professor at the University of Miami who organized the first international conference on conspiracy theories more than a decade ago and has written two books on the topic: American Conspiracy Theories, and Conspiracy Theories and the People Who Believe Them. In his Bulletin interview, Uscinski argues that “Being tolerant and compassionate [about disinformation-riddled conspiracy thinking] isn’t the same as pretending that their behavior isn’t their behavior… I have compassion for them, but I hold them responsible for their beliefs and behaviors.”

Article link: https://thebulletin.org/premium/2026-03/introduction-disinformation-as-a-multiplier-of-existential-threat/